Vectara is an enterprise agent platform that combines retrieval-augmented generation (RAG) with always-on governance, actively detecting and correcting hallucinations at runtime rather than just flagging them. It is listed in the AI security category.

Founded by former Google AI researchers, Vectara raised $25M in Series A funding in 2024 led by FPV Ventures and Race Capital, bringing total funding to $53.5M. The company positions itself as a platform for regulated industries where AI accuracy and auditability are non-negotiable.

Vectara’s differentiator is treating governance as a core platform feature rather than a bolt-on. Hallucination detection, policy enforcement, and source attribution run continuously as part of the RAG pipeline, not as optional post-processing steps.

What is Vectara?

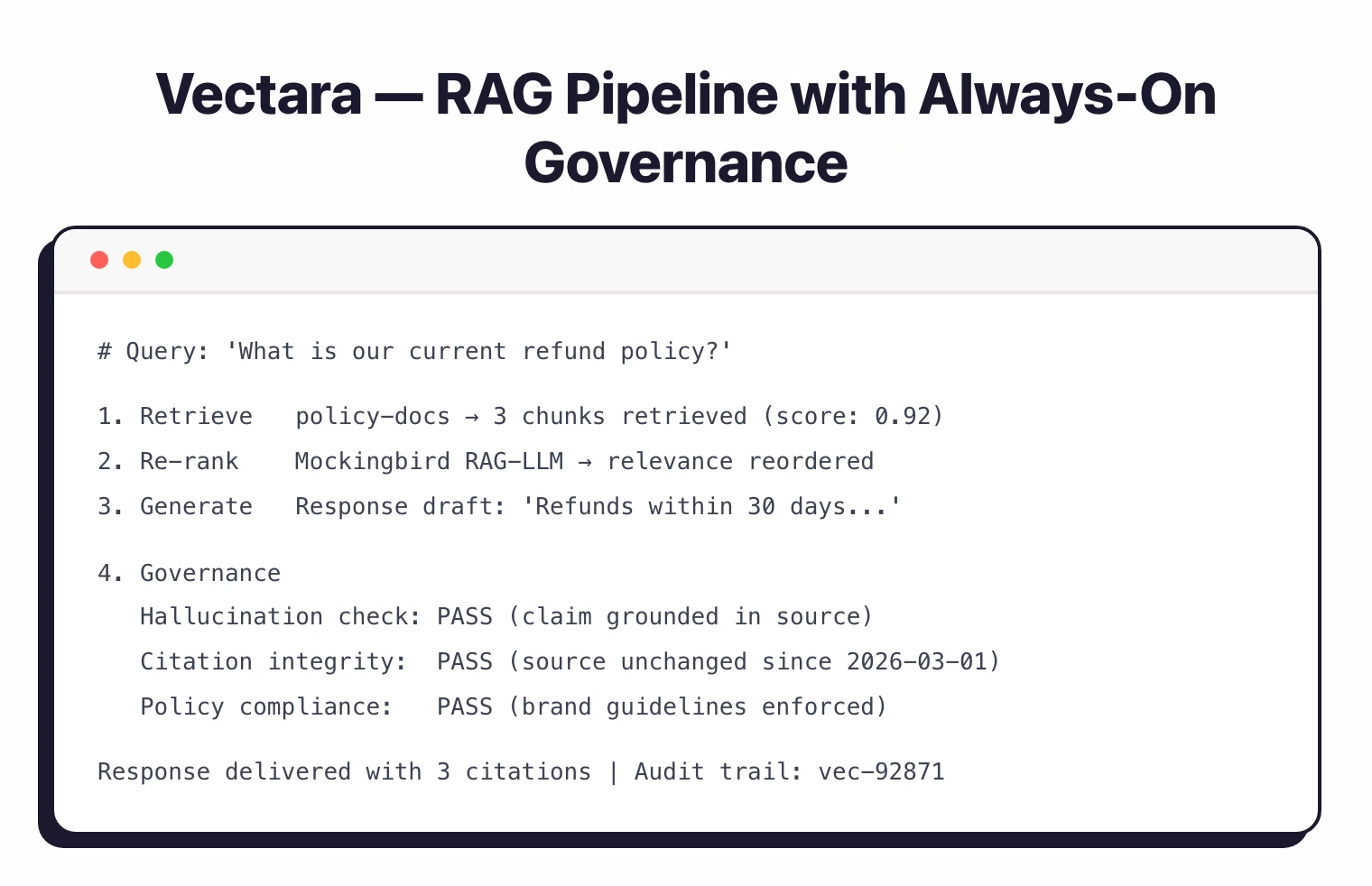

Vectara provides a full platform for building enterprise AI agents that retrieve information from organizational data and generate grounded responses. It handles the entire RAG pipeline: ingestion, indexing, retrieval, re-ranking, and response generation with governance controls at each stage.

The key architectural decision is always-on governance. Instead of relying on external guardrail tools to catch problems after generation, Vectara embeds hallucination detection, policy enforcement, and citation tracking directly into the generation pipeline. When it detects that a response strays from source material, it corrects the output before delivery.

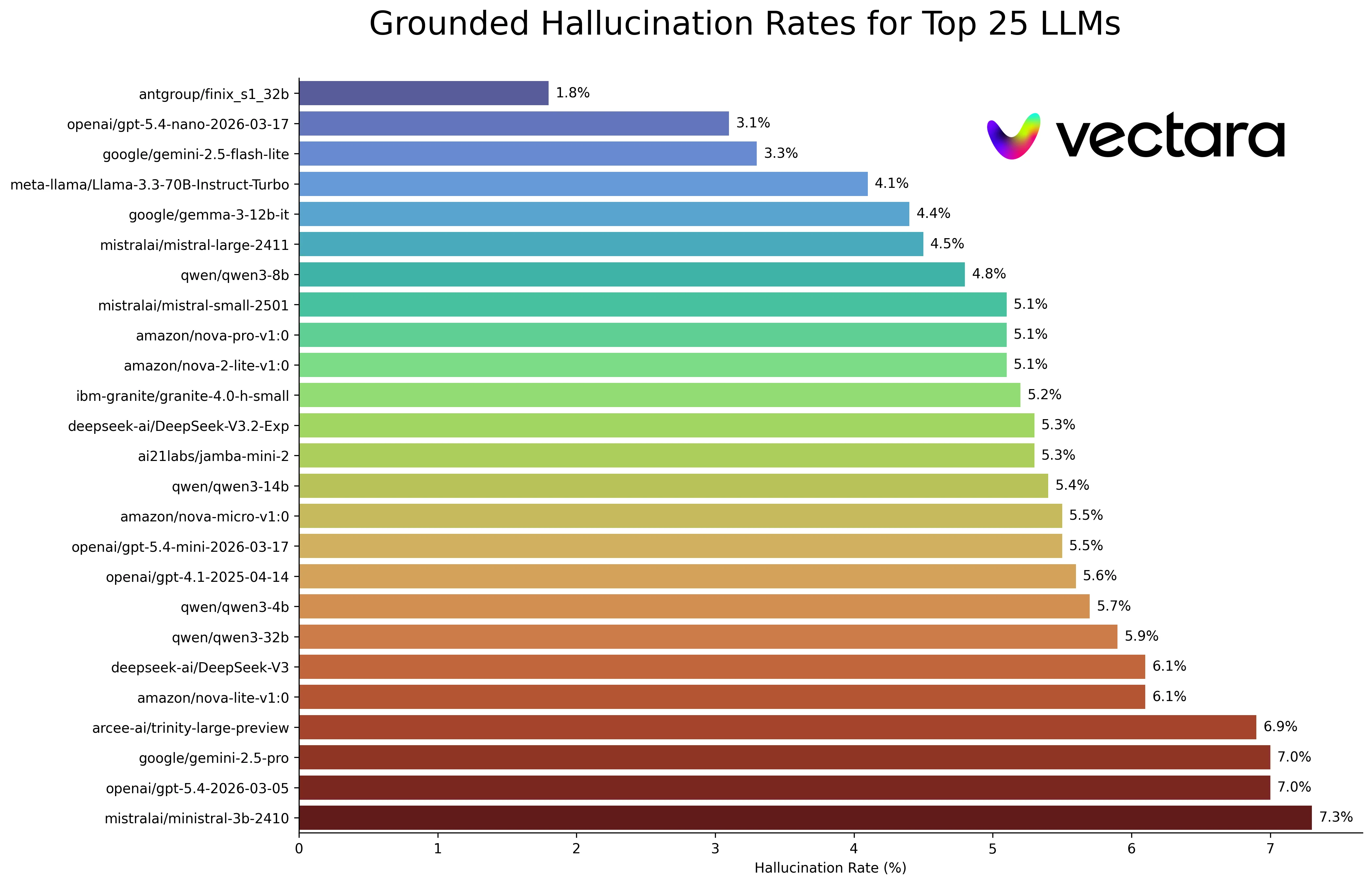

The Mockingbird LLM, launched alongside the Series A funding, is purpose-built for RAG applications. According to Vectara, Mockingbird outperforms GPT-4 and Google Gemini-1.5-Pro on the Bert-F1 benchmark, which measures how accurately RAG models transform retrieved data into prompt responses.

Key Features

| Feature | Details |

|---|---|

| RAG Pipeline | End-to-end: ingestion, indexing, retrieval, re-ranking, generation |

| Hallucination Detection | Real-time detection and correction during generation |

| Mockingbird LLM | Purpose-built for RAG; outperforms GPT-4 on Bert-F1 benchmark |

| Source Citations | Every response includes source attribution with change detection |

| Policy Controls | Brand, factual-consistency, and compliance rules enforced at runtime |

| Access Controls | Role-based permissions for data, agents, and administrative functions |

| Multimodal Support | Text, tables, and image retrieval and processing |

| Model Flexibility | BYOM (Bring Your Own Model) — works with any LLM provider |

| Deployment | SaaS, customer VPC, on-premises (air-gapped) |

| Compliance | SOC-2 Type 2 certified, HIPAA compliant |

| Audit Trails | Step-level tracking for compliance and debugging |

Hallucination detection and correction

Vectara’s governance layer doesn’t just flag potential hallucinations — it corrects them. The platform compares generated responses against retrieved source material, checking for claims that aren’t supported by the evidence. When it finds inconsistencies, it adjusts the response before it reaches the user.

This is different from tools that run hallucination checks after generation is complete. By integrating detection into the generation pipeline itself, Vectara prevents hallucinated content from being served rather than catching it after the fact.

Citation integrity

Every Vectara response includes source citations pointing back to the original documents or data that informed the answer. The platform tracks citation integrity over time, detecting when source documents change and flagging responses that may need updating based on modified source material.

For regulated industries, this creates an auditable chain from any AI-generated response back to its factual basis.

Air-gapped deployment

The on-premises deployment option is for organizations with strict data sovereignty requirements. In air-gapped mode, no data — queries, documents, or responses — leaves the customer’s data center. This removes a major barrier to AI adoption in defense, government, and highly regulated financial services environments.

Getting Started

When to use Vectara

Vectara fits organizations building enterprise AI agents that must be accurate, auditable, and compliant. The always-on governance model matters most in regulated industries — healthcare, financial services, legal, government — where a hallucinated response could have regulatory or safety consequences.

It is also worth looking at if your team has tried building RAG from scratch and found that assembling vector databases, retrieval logic, re-ranking, and safety layers into a reliable production system takes longer than expected. Vectara packages all of that with governance built in.

The on-premises deployment option addresses a specific market: organizations that want to use AI agents but cannot allow their data to leave their infrastructure under any circumstances.

For a broader overview of AI security tools, see the AI security tools guide. For input/output guardrails rather than full RAG governance, consider Guardrails AI or NeMo Guardrails.

For AI data privacy and PII masking in AI pipelines, see Protecto. For evaluation intelligence and AI observability, look at Galileo AI.