Best SAST Tools 2026: All 34 Static Analysis Tools Compared

Independent ranking — no vendor pays to appear here. See methodology.

Independent comparison of 30+ static application security testing tools — enterprise, developer-first, and language-specific picks with CI/CD notes.

At a glance

The best SAST tools in 2026: Semgrep CE, Snyk Code, Checkmarx One, Veracode, and CodeQL.

- Best free SAST scanner: Semgrep CE — 30+ languages, custom YAML rules, GitHub Actions + GitLab CI integration in minutes

- Best developer experience: Snyk Code — real-time IDE feedback with AI-generated fix suggestions

- Best enterprise platform: Checkmarx One — deep cross-file taint analysis across 35+ languages with PCI DSS / SOC 2 / HIPAA dashboards

- Best for legacy + binary codebases: Veracode — 100+ languages and frameworks including COBOL and Visual Basic 6

- Best for GitHub-native teams: CodeQL — semantic analysis, free for public repositories

I evaluated 30+ static application security testing scanners using publicly verifiable evidence — vendor docs, GitHub release history, language coverage, and community-reported false-positive rates. No vendor paid to appear on this page.

**SAST tools are static application security testing scanners that analyze source code, bytecode, or compiled binaries for security vulnerabilities before the application runs.** They catch issues like SQL injection, cross-site scripting, and buffer overflows during development, when the cost to fix is a small fraction of what it costs after deployment.

SAST is one layer of the broader application security discipline, and this page tracks every actively maintained SAST tool across two license tiers — free open-source and commercial — comparing them by language support, CI/CD integration, false-positive rate, and cost.

Do You Actually Need a SAST Tool?

If your team ships code to production, the answer is yes — but the next question is whether you need it tomorrow or next quarter.

SAST catches code-level bugs — SQL injection, cross-site scripting, hardcoded secrets, insecure deserialization — at the file and line where the bug lives. Catching the same bug in production costs roughly 10x more.
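To make "at the file and line where the bug lives" concrete, here is a minimal Python sketch of the kind of SQL injection a scanner flags, next to the fix. The table, the function names, and the in-memory database are all invented for illustration; they do not come from any tool's test suite.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with a single user row."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.execute("INSERT INTO users VALUES ('alice', 'admin')")
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is concatenated into the SQL text.
    # This is the line a SQL injection rule would typically point at.
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Fixed: the value travels as a bound parameter and is never parsed as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Calling `find_user_unsafe(conn, "x' OR '1'='1")` returns every row in the table, while the parameterized version returns nothing for the same input.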

The honest version of “do I need this”:

- You ship code without security review. Start with a free scanner today. Semgrep CE and Bandit install in minutes and catch the obvious stuff.

- You have compliance obligations. PCI DSS 4.0.1 (Requirement 6.2.4), SOC 2 (CC7.1 and CC8.1), HIPAA (45 CFR 164.308(a)(1)(ii)(A)), and ISO 27001:2022 (Annex A.8.27) all expect a documented secure-development process — and NIST SP 800-218 SSDF practice PW.7 explicitly maps to static analysis. SAST is not literally named in every clause, but auditors look for evidence of code-level vulnerability management. Without it, you are explaining yourself.

- You already run DAST or have a security team. SAST does not replace either. It catches code-flow flaws DAST cannot reach (because no traffic hits that path) and filters out the obvious bugs before security review sees them.

- You evaluate vendors, not code. Skip to Quick Comparison. The buyer-mode shortlist is what you need.

If your team writes code that handles user input, money, PII, or auth — and “writes code” covers nearly every team in 2026 — you are already paying for missing SAST. The question is whether the cost shows up in the SDLC or in the incident channel.

The 5 Best SAST Tools in 2026

The best SAST tools in 2026 are Semgrep CE, Snyk Code, Checkmarx One, Veracode, and CodeQL. Semgrep CE is the best free option — it covers 30+ languages, supports custom YAML rules, and integrates natively into GitHub Actions and GitLab CI in minutes.

Snyk Code leads on developer experience with real-time IDE feedback and AI-generated fix suggestions. Checkmarx One is the strongest enterprise pick with deep taint analysis across 35+ languages and compliance dashboards for PCI DSS, SOC 2, and HIPAA.

Veracode covers 100+ languages and frameworks including legacy stacks like COBOL and Visual Basic 6 — the right choice when you need to audit third-party binaries without source access.

CodeQL is free for public GitHub repositories and delivers semantic analysis that finds vulnerabilities generic pattern matchers miss. No vendor pays to appear here — rankings are based on publicly verifiable evidence.

The five best SAST tools in 2026, picked by buyer profile, are Semgrep CE, Snyk Code, Checkmarx One, Veracode, and CodeQL:

- Semgrep CE — best free multi-language scanner with custom YAML rules

- Snyk Code — best developer experience with IDE-first workflows and AI fix suggestions

- Checkmarx One — best enterprise SAST with deep compliance reporting across 35+ languages

- Veracode — best for legacy and binary-only codebases (100+ languages and frameworks)

- CodeQL — best for GitHub-native teams (free for public repositories)

The right pick depends on your stack, codebase size, and compliance scope. Compare all 34 tools side by side in the Quick Comparison table below.

Pick your next step

I want the open-source-only list

Free SAST scanners ranked — Semgrep CE, Bandit, gosec, CodeQL, OpenGrep — with detection benchmarks and language coverage tables.

I want SAST in context

SAST vs DAST vs IAST head-to-head — which vulnerabilities each one catches, where they overlap, and which two to run together.

I'm drowning in false positives

Concrete tactics to cut SAST noise — baseline management, custom rules, suppressions, and tool-specific tuning across Semgrep, CodeQL, and Snyk Code.

Quick Comparison

I track 34 SAST tools across three license tiers: 15 free open-source, 1 freemium, and 18 commercial.

The SAST market in 2026 spans a broad spectrum from Semgrep CE and Bandit at the CI/CD-native end to enterprise platforms like Checkmarx One and Veracode with compliance dashboards, ASPM correlation, and 35+ language support.

Teams looking for zero-license scanners should see the dedicated open source SAST tools roundup.

The table below groups them by license type so you can narrow down your shortlist quickly.

For full reviews, see each tool’s page on our mega comparison.

Dedicated OSS roundup: See the open source SAST tools guide for detection-quality benchmarks and language coverage tables.

| Tool | License | Languages | Standout |

|---|---|---|---|

| Free / Open Source (15) | | | |

| Bandit | Free (OSS) | Python | Python-specific security checks |

| Betterleaks | Free (OSS) | Secrets (multi-language) | Gitleaks successor with live secret validation via CEL |

| Brakeman | Free (OSS) | Ruby on Rails | Deep Rails framework awareness |

| detect-secrets | Free (OSS) | Secrets (multi-language) | Yelp's baseline approach prevents new secrets while grandfathering existing |

| GitHub CodeQL | Free for public repos | Java, Py, JS/TS, C#, Go, C/C++, Ruby, Swift, Kotlin, Rust | Semantic code queries; free for public GitHub repos |

| Gitleaks | Free (OSS) | Secrets (multi-language) | Popular git-history secret scanner with SARIF + JUnit reporting |

| gosec | Free (OSS) | Go | Go security checker with AI-powered fix suggestions |

| Graudit | Free (OSS) | PHP, Python, Perl, C, ASP, JSP | Lightweight grep-based auditing with custom signatures |

| Horusec | Free (OSS) | 18+ langs incl. Java, Go, Py, K8s | Multi-tool orchestrator with web dashboard |

| Infer | Free (OSS) | Java, C, C++, Obj-C, Erlang, Hack | Meta's inter-procedural analyzer for null derefs and memory leaks |

| Kingfisher | Free (OSS) | Secrets (16 langs via Tree-sitter) | MongoDB's Rust scanner with live validation, Access Map blast-radius, and direct revocation |

| nodejsscan | Free (OSS) | Node.js, JavaScript | Node.js scanner with web UI and fix guidance |

| OpenGrep | Free (OSS) | 30+ langs incl. Py, Java, Go, TS, Rust | Community Semgrep fork restoring taint analysis + Windows support |

| PHPStan | Free (OSS) | PHP | PHP static analysis with 10 progressive strictness levels |

| PMD | Free (OSS) | Java, JS, Apex, Kotlin, Swift, Scala | 400+ rules; includes CPD for duplicate detection |

| Psalm | Free (OSS) | PHP | Vimeo's PHP type checker with built-in taint analysis |

| Semgrep | Free CE + Comm. | C#, Go, Java, JS, Py, Ruby, Scala, TS | Custom rules + secrets + SCA; strong dev-first workflow |

| SonarLint | Free (OSS) | 20+ langs in IDE | Real-time IDE analysis for VS Code, IntelliJ, Eclipse, Visual Studio |

| SpotBugs | Free (OSS) | Java, Kotlin, Groovy, Scala | FindBugs successor; Find Security Bugs plugin (144 vuln types) |

| Trufflehog | Free (OSS) | Secrets (multi-language) | Scans and verifies 800+ secret types across Git, S3, Slack, wikis |

| Commercial (18) | | | |

| Checkmarx One | Commercial | 35+ incl. Java, JS, Python, Swift, Go | Unified SAST + SCA + supply chain platform |

| Codacy | Commercial | 40+ incl. Python, Java, JS, Go, Rust | 40+ langs with AI code protection; free for open-source |

| Contrast Scan | Commercial | Java, JS, .NET, Py, Go, PHP, Kotlin | Runtime-informed testing (ADR); Application Detection & Response |

| Corgea | Commercial | 20+ langs | AI-native SAST with auto-fix (BLAST engine); YC-backed |

| Coverity (Black Duck) [Leader] | Commercial | 22+ incl. C/C++, Java, C#, Go, Kotlin | Deep C/C++ analysis; now under Black Duck (ex-Synopsys) |

| DeepSource | Commercial | Python, Java, Go, JS/TS, Rust, Ruby, PHP | AI-powered SAST with Autofix AI; free tier for open-source |

| GitLab SAST | Commercial | Java, JS/TS, Py, Go, C#, C/C++, Ruby | Built into GitLab CI; Advanced SAST (cross-file taint) in Ultimate |

| HCL AppScan (SAST) | Commercial | 34 langs incl. Dart, Vue.js, React | AppScan 360° 2.0 (2025) with AI-assisted testing |

| Kiuwan | Commercial | 30+ incl. COBOL, Scala, Kotlin | Quality + security combined; owned by Idera |

| Klocwork | Commercial | C, C++, C#, Java, JS, Py, Kotlin | Advanced C/C++ & embedded analysis |

| Mend SAST [New] | Commercial | 25+ langs | Agentic SAST with AI-powered fixes |

| OpenText Fortify SCA | Commercial | 44+ incl. COBOL, ABAP, Fortran | Widest legacy language support (ex-Micro Focus) |

| Parasoft | Commercial | C/C++, Java, .NET | Compliance-first: DO-178C, ISO 26262, MISRA, IEC 62304 |

| PT Application Inspector | Commercial | Java, C#, PHP, JS/TS, Py, Go, C/C++, Kotlin, Swift | SAST+DAST+IAST+SCA with automatic exploit verification |

| Qodana (JetBrains) | Commercial | Java, Kotlin, PHP, Py, JS/TS, C#, Go, C/C++ | JetBrains IDE inspections brought to CI/CD pipelines |

| Snyk Code | Commercial | JS, Java, .NET, Py, Go, Swift, PHP | AI-powered, dev-first with real-time IDE feedback |

| SonarQube | Commercial | 35+ incl. COBOL, Apex, PL/I, RPG | Massive community; CI/CD quality gates |

| Veracode Static Analysis | Commercial | Java, .NET, C/C++, JS, Py, COBOL, RPG | Binary analysis, no source code needed; 100+ languages and frameworks supported |

| Discontinued / Acquired (2) | | | |

| Bearer [Acquired] | Was Open Source | JS/TS, Ruby, Java, PHP, Go, Py | Data-first SAST with privacy scanning; acquired by Cycode |

| Reshift [Defunct] | Was Open Source | Node.js | Company defunct; website no longer active |

How Do You Choose the Right SAST Tool?

Choosing the right SAST tool comes down to five factors: language and framework support, CI/CD integration, false positive rate, budget, and developer experience.

The right tool for your team depends on your language stack, pipeline setup, and whether you need free open-source coverage or enterprise features like compliance dashboards and centralized policy management.

Here is what I would look at:

1. Language and framework support. This is the single most important filter.

A tool that does not understand your framework will miss vulnerabilities specific to its patterns, or drown you in false positives from patterns it misunderstands.

Brakeman is the best example: it understands Rails routing, ActiveRecord queries, and ERB templates deeply, but it is Rails-only. Bandit covers Python with 47 built-in checks.

If you use multiple languages, look for multi-language tools. Semgrep CE covers 30+ languages, Checkmarx One covers 35+, and Veracode supports 36+ languages and 100+ frameworks including legacy stacks like COBOL and RPG.

2. CI/CD integration. How easily does it plug into your pipeline?

Look for native support for GitHub Actions, GitLab CI, Jenkins, or Azure DevOps. GitHub CodeQL is the easiest to set up if you are already on GitHub.

It runs as a built-in Actions workflow with zero external configuration. Snyk Code and Semgrep CE both offer well-documented GitHub Actions that upload SARIF results to the code scanning dashboard.

Enterprise tools like Checkmarx and Fortify have plugins for every major CI system, but expect more configuration work upfront.

3. False positive rate. False positives are what kill SAST adoption in practice.

Developers stop looking at findings when half of them are noise. Commercial tools tend to be quieter out of the box because they invest in data flow analysis and ML-based prioritization.

According to Cycode’s published benchmarks, Cycode achieves a 2.1% false positive rate on the OWASP SAST Benchmark.

Open-source tools like Semgrep CE can reach similar precision, but you need to invest time writing custom rules tuned to your codebase.

4. Budget.

Free open-source SAST tools cover most use cases for small and mid-size teams. Semgrep CE handles multi-language scanning with custom rules. Bandit and Brakeman cover Python and Rails specifically.

SonarQube CE provides code quality plus security across 19 languages. CodeQL is free for public repos.

Enterprise tools add centralized reporting, compliance dashboards (PCI DSS, SOC 2, HIPAA mapping), cross-project portfolio views, and dedicated support.

But honestly, the free options have gotten good enough that many teams never upgrade.

5. Developer experience.

IDE integration, clear fix guidance, and fast scan times keep developers from ignoring findings.

Snyk Code does well here with real-time scanning in VS Code, IntelliJ, and PyCharm plus AI-powered fix suggestions from its DeepCode engine. Qodana brings the same JetBrains IDE inspections developers already see locally into the CI/CD pipeline.

In my experience, tools that show findings as inline code annotations in pull requests get far higher fix rates than tools that send email reports to a separate dashboard.

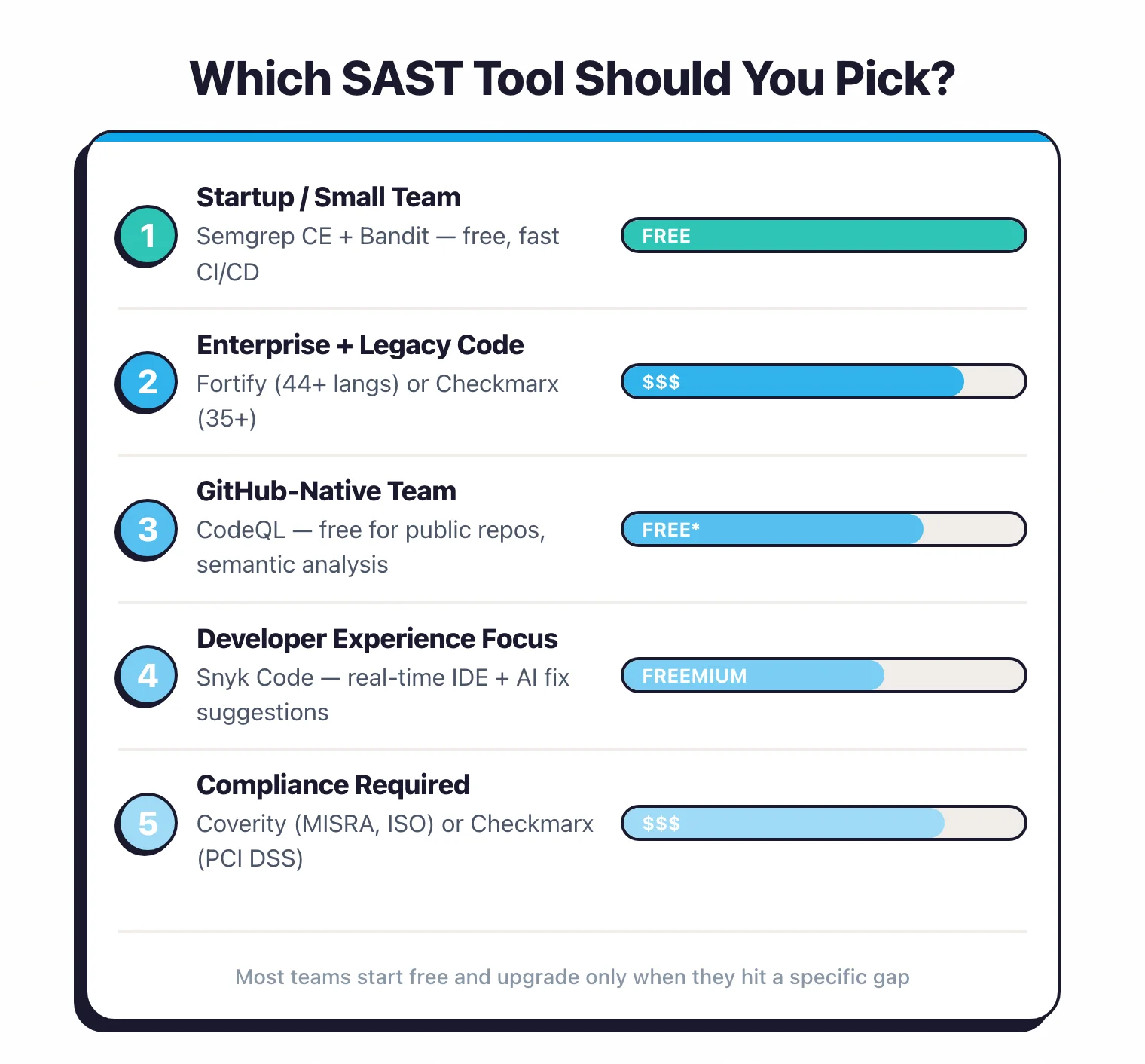

Which SAST Tool Should You Pick?

Match your situation to one row. If you span two, lean toward the harder constraint — compliance always wins over convenience.

| Your situation | Pick | Why |

|---|---|---|

| Startup, <50 devs | Semgrep CE + Bandit | Free, GitHub Actions in 10 minutes, multi-language plus Python depth |

| Enterprise with legacy code | Fortify or Checkmarx One | 44+ / 35+ languages, COBOL/ABAP/Fortran coverage, ASPM correlation |

| Already on GitHub | CodeQL | Free for public repos, native Actions, 12 languages with semantic analysis. For private repos, see Snyk vs GHAS |

| Developer buy-in is the bottleneck | Snyk Code | Real-time IDE feedback, AI fix suggestions, PR annotations inside the review flow |

| Compliance audit (PCI DSS / SOC 2 / HIPAA) | Checkmarx One or Fortify | Out-of-the-box compliance report templates; PCI DSS 4.0 §6.2.4 and §6.3.2 require this kind of mapping |

| Safety-critical C / C++ (auto, aero, embedded) | Coverity or Klocwork | Deep inter-procedural analysis; MISRA, AUTOSAR C++14, ISO 26262, DISA STIG mapping |

| Python-only stack | Bandit + Semgrep CE | 47 built-in checks for Django/Flask, plus cross-framework custom rules |

| Ruby on Rails monolith | Brakeman | Only deep Rails-aware free SAST; no commercial competitor for Rails-specific patterns |

| Need binary analysis (no source) | Veracode | Scans compiled bytecode across 36+ languages and 100+ frameworks without source access |

For a deeper free-vs-commercial breakdown of the open-source picks, see the open-source SAST tools guide. The enterprise SAST tools guide covers the regulated-environment shortlist in compliance-feature depth.

Best SAST Tools by Programming Language

Pick the wrong scanner for your stack and you ship blind on half your codebase.

A Python-only tool skips the Go services next to it, and a generic multi-language scanner misses the Spring and Rails idioms that decide whether a pattern is actually exploitable.

What you need depends on the languages and frameworks you actually run — not the logo grid on a vendor sales page.

| Language | Best Free SAST Tool | Best Commercial SAST Tool | Why |

|---|---|---|---|

| Java | SpotBugs + PMD | Checkmarx, Fortify | SpotBugs' Find Security Bugs plugin covers 144 vulnerability types for Java/Kotlin. PMD adds 400+ code quality rules. Checkmarx and Fortify offer deep cross-file taint analysis for enterprise Java apps. |

| Python | Bandit | Snyk Code, Veracode | Bandit is purpose-built for Python with 47 security checks including Django and Flask patterns. Snyk Code adds AI-powered fix suggestions with real-time IDE feedback. |

| JavaScript / TypeScript | Semgrep CE, nodejsscan | Snyk Code, Checkmarx | Semgrep CE handles JS/TS with custom rules. nodejsscan is Node.js-specific with Express and Koa framework awareness. Snyk Code and Checkmarx cover React, Angular, and Vue patterns. |

| Go | gosec | Coverity, Snyk Code | gosec is the standard Go security linter — lightweight, fast, integrates with golangci-lint. Coverity adds deep inter-procedural analysis for larger Go codebases. |

| Ruby on Rails | Brakeman | Checkmarx | Brakeman is the gold standard for Rails security — it understands routing, ActiveRecord, and ERB templates deeply. Hard to beat even with commercial tools for Rails-specific scanning. |

| C / C++ | Infer, Semgrep CE | Coverity, Klocwork, Fortify | C/C++ is where commercial SAST tools justify their cost. Coverity and Klocwork have the deepest memory safety, concurrency, and buffer overflow analysis. Infer (Meta) is the strongest free option for null pointer and memory leak detection. |

| C# / .NET | Semgrep CE, SonarQube CE | Checkmarx, Veracode | Semgrep CE added C# support with framework-aware rules. SonarQube CE covers .NET with quality gates. Checkmarx and Veracode offer deep ASP.NET and Entity Framework analysis. |

| Multi-language | Semgrep CE, CodeQL | Checkmarx (35+), Veracode (100+ with frameworks), Fortify (44+) | For polyglot codebases, Semgrep CE (30+ languages) and CodeQL (12 with deep semantic analysis) are the best free options. Veracode leads in commercial breadth with 100+ languages and frameworks combined. |

| Legacy (COBOL, RPG, ABAP) | — | Fortify, Veracode, Kiuwan | No free SAST tools cover COBOL, ABAP, or RPG. Fortify (44+ languages) has the widest legacy support. Veracode scans compiled bytecode without requiring source code access. |

If your team uses a single primary language, start with the language-specific free tool. A dedicated scanner like Brakeman for Rails or Bandit for Python will have better framework coverage than a generic multi-language scanner.

If you run multiple languages across different services, Semgrep CE is the practical choice — one tool, one rule format, 30+ languages. CodeQL is equally strong if you are on GitHub, with deeper semantic analysis across 12 languages.

Add language-specific tools (like Bandit for Python or gosec for Go) on top for deeper coverage where it matters most.

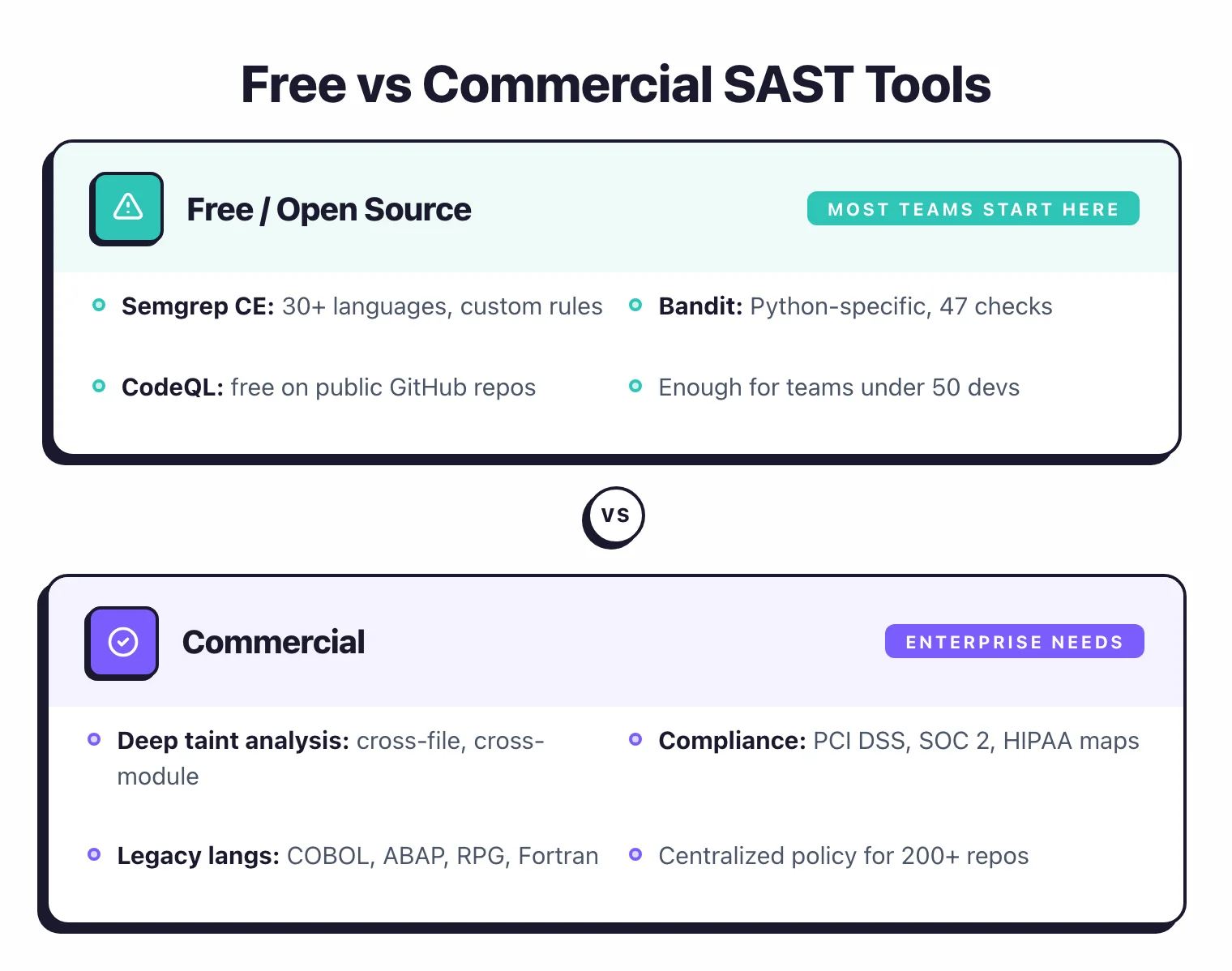

Free vs Commercial SAST Tools

Here is the truth nobody at the commercial vendors wants you to hear: most teams under 50 developers do not need an enterprise SAST license.

Free scanners like Semgrep CE, Bandit, and SonarQube Community Edition catch the obvious bugs in mainstream stacks at zero license cost.

Checkmarx, Coverity, and Fortify earn their price tag on legacy languages, deep cross-file taint, and the audit trails compliance teams actually pay for. The trick is knowing which side you are on.

Free SAST tools are enough when:

- Your team is small (under 50 developers) and moves fast

- You use mainstream languages (Python, JavaScript, Go, Java, Ruby)

- You can invest time writing custom rules for your frameworks

- Compliance reporting is not a hard requirement

- You are comfortable managing tool configuration yourself

A stack of Semgrep CE + Bandit + SonarQube CE covers most codebases at zero license cost. Add CodeQL if you are on GitHub.

Commercial SAST tools justify the cost when:

- Your codebase is large (100K+ LOC) and requires deep cross-file data flow analysis

- Compliance dashboards are required (PCI DSS, SOC 2, HIPAA, MISRA, ISO 26262)

- Your language stack includes legacy code (COBOL, ABAP, RPG, Fortran)

- Multiple teams and repositories share centralized policy management

- Dedicated vendor support and SLAs are a business requirement

- Developers expect AI-powered auto-fix suggestions to speed up remediation

The biggest gap is in taint analysis depth. Free tools like Semgrep CE perform intra-file taint tracking, meaning they analyze data flow within a single file.

Commercial tools like Checkmarx, Coverity, and Fortify trace data flows across files, modules, and even microservice boundaries.

For a 500K-line Java monolith, this is the difference between finding surface-level issues and catching second-order injection vulnerabilities buried three layers deep.

The second gap is false positive management at scale.

When you have 200 repositories and 500 developers, tools like Checkmarx One and Veracode offer centralized suppression rules, finding deduplication, and portfolio-level dashboards that open-source tools simply do not provide.

At that scale, the cost of manually triaging false positives across dozens of teams exceeds the cost of a commercial license.

For a detailed comparison of free options with setup guides and detection benchmarks, see my open-source SAST tools guide.

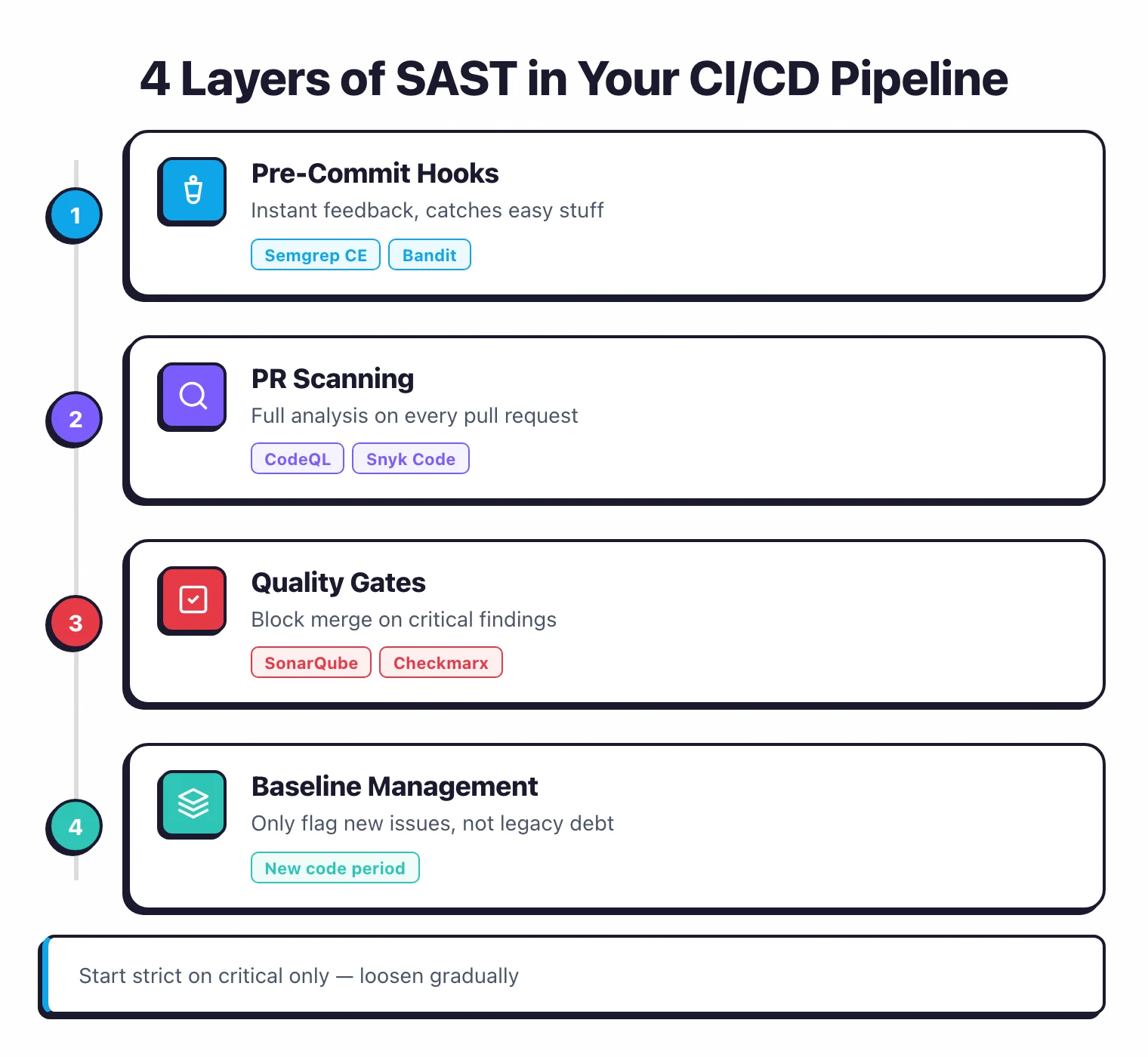

How Do You Integrate SAST into a CI/CD Pipeline?

Most SAST CI/CD setups break in the same place: a 30-minute scan nobody waits for, feeding a backlog nobody triages.

The integration works when you run it in four layers — pre-commit hooks for instant feedback, PR-level scanning for full analysis, quality gates for enforcement, and baseline management for legacy debt.

Skip any layer and the gate either gets bypassed or the queue collapses under its own weight.

The real payoff comes when every pull request gets scanned automatically before it merges. The goal: make security feedback as routine as unit tests.

Developers see findings before code gets approved, not weeks later in a security review.

Pre-commit hooks are the fastest feedback loop. Tools like Semgrep CE and Bandit run in seconds and catch obvious issues before code even leaves the developer’s machine.

Semgrep CE’s CLI scans an average-sized project in under 10 seconds, which makes it practical as a git pre-commit hook without slowing anyone down. This layer is not meant to be comprehensive.

It catches the easy stuff so the heavier scans downstream have less noise to deal with.

Pull request scanning is where most teams get the biggest value. Running a full SAST analysis on every PR through GitHub Actions, GitLab CI, or Jenkins means every code change gets a security review before merge.

Most tools post findings directly as PR comments or inline code annotations, so developers see the issue in context.

GitHub CodeQL does this natively for GitHub repositories, uploading results as code scanning alerts that show up as pull request annotations and under the repository’s “Security” tab. Snyk Code and Semgrep CE both offer GitHub Actions that work the same way.

Quality gates add enforcement. Instead of just reporting findings, you block the merge when critical or high-severity vulnerabilities show up. SonarQube has built-in quality gate conditions that check for new security hotspots, and Checkmarx lets you define policies that prevent merging when specific CWE categories are detected.

Start by blocking only on critical findings and tighten the gate gradually. Blocking on every medium-severity issue from day one will make developers resent the tool.
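A quality gate boils down to "read the scanner's report, fail the job if anything above the threshold is present". The sketch below does this over an already-parsed SARIF document. It assumes your scanner maps critical and high findings to the SARIF level "error", which you should verify for your tool. The function names and the BLOCKING_LEVELS constant are illustrative, not part of any scanner's API.

```python
# Assumption: the scanner reports critical/high findings with SARIF level "error".
BLOCKING_LEVELS = frozenset({"error"})


def blocking_findings(sarif: dict, blocking_levels=BLOCKING_LEVELS) -> list:
    """Collect every SARIF result whose level is in the blocking set."""
    found = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            # A result with no explicit level is treated as "warning" here.
            level = result.get("level", "warning")
            if level in blocking_levels:
                found.append(result)
    return found


def gate_exit_code(sarif: dict) -> int:
    """Return 1 (fail the CI job) if any blocking finding exists, else 0."""
    return 1 if blocking_findings(sarif) else 0
```

In a pipeline step you would load the report with `json.load` and pass the result of `gate_exit_code` to `sys.exit`. Tightening the gate later is then a one-line change to the blocking set.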

Baseline management keeps the noise manageable. When you first introduce SAST to an existing codebase, the initial scan will produce hundreds or thousands of findings.

Do not dump all of them on the team. Baseline the existing findings and configure the pipeline to only flag new issues introduced by the current PR.

SonarQube calls this the “new code period.” Bandit supports baseline files that exclude known findings.

Over time, you chip away at the backlog through separate remediation sprints.
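The baseline idea can be sketched in a few lines of Python. This is not how SonarQube or Bandit implement it internally; it is a simplified illustration that fingerprints each finding by rule, file, and code snippet, so a finding that merely moved to a different line still counts as known. The field names (rule_id, path, snippet) are hypothetical.

```python
import hashlib


def fingerprint(finding: dict) -> str:
    """Stable ID for a finding; ignores line numbers, which shift as code moves."""
    key = "|".join(
        [finding["rule_id"], finding["path"], finding["snippet"].strip()]
    )
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def new_findings(current: list, baseline: list) -> list:
    """Return only the findings that are not already recorded in the baseline."""
    known = {fingerprint(f) for f in baseline}
    return [f for f in current if fingerprint(f) not in known]
```

The pipeline reports only what `new_findings` returns, while the baseline itself becomes the backlog for remediation sprints.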

Cutting Scan Time: Incremental + Path-Based Triggers

Scan times range from seconds (Semgrep CE, Bandit) to several hours (deep-analysis engines on large repos). The exact-time question is in the FAQ — what matters in CI/CD is keeping the PR-feedback loop under five minutes, because anything slower gets disabled.

Note: A SAST scan that takes 45 minutes on every pull request gets disabled within a week. I have seen it happen. Optimize scan time before tuning rules — developers will not wait.

Three levers move the needle.

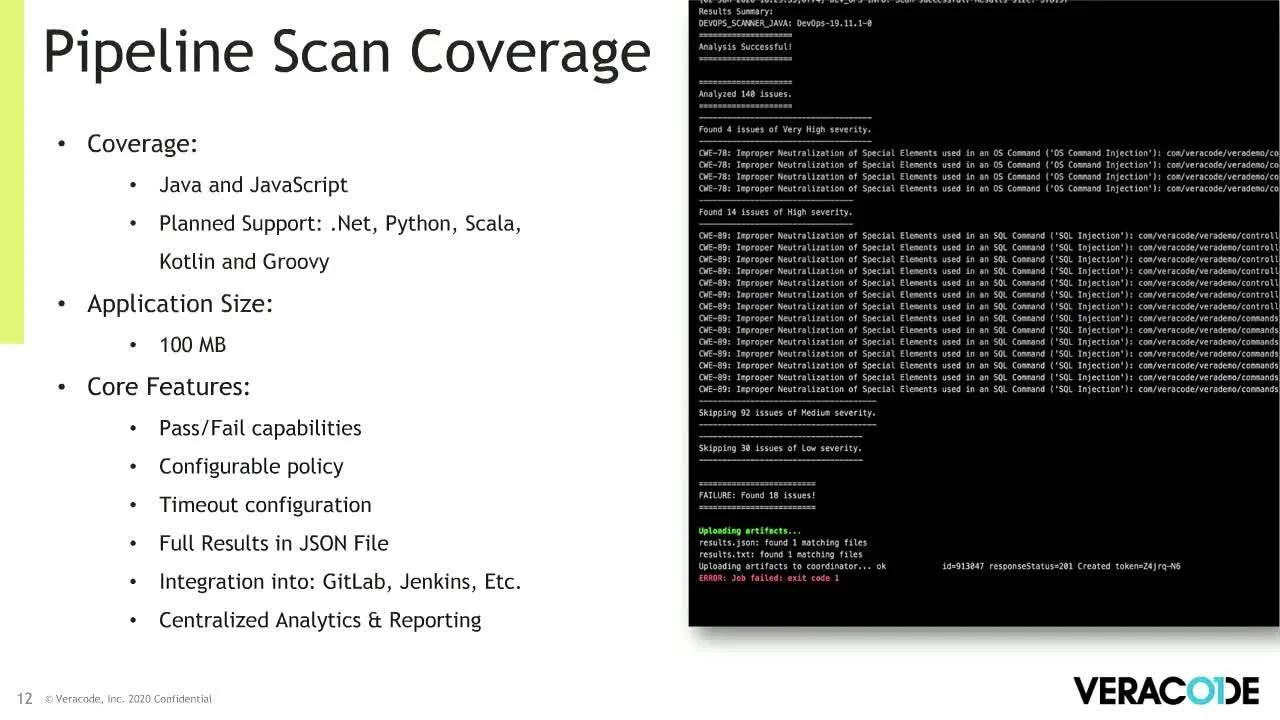

Incremental scanning cuts time 80-90% by analyzing only changed files: Semgrep CE supports --baseline-commit, Veracode Pipeline Scan ships with a 90-second median, and Mend SAST offers Fast/Balanced/Deep profiles.

Path-based triggers in GitHub Actions skip the SAST job entirely when only docs or unrelated services change.

Project-level partitioning in SonarQube and Checkmarx lets a monorepo treat each subdirectory as a separate scan target.
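The path-based trigger is really a decision about which changed files matter. GitHub Actions can express this with path filters on the workflow trigger; the Python sketch below only illustrates the decision logic. The SKIP_PATTERNS list and the should_run_sast function are made-up names for illustration.

```python
from fnmatch import fnmatch

# Hypothetical patterns for changes that cannot introduce code-level bugs.
SKIP_PATTERNS = ["docs/*", "*.md", "*.png"]


def should_run_sast(changed_files, skip_patterns=SKIP_PATTERNS) -> bool:
    """Run the scan if at least one changed file is not covered by a skip pattern."""
    return any(
        not any(fnmatch(path, pattern) for pattern in skip_patterns)
        for path in changed_files
    )
```

Note that `fnmatch` lets `*` match across directory separators, so `docs/*` also covers nested files such as `docs/guides/setup.rst`.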

A typical setup runs Semgrep CE on every pull request, uploads SARIF results to GitHub’s code scanning dashboard, and blocks the merge on new critical findings. Total overhead: 30-60 seconds for most repositories.

What Is AI-Powered SAST?

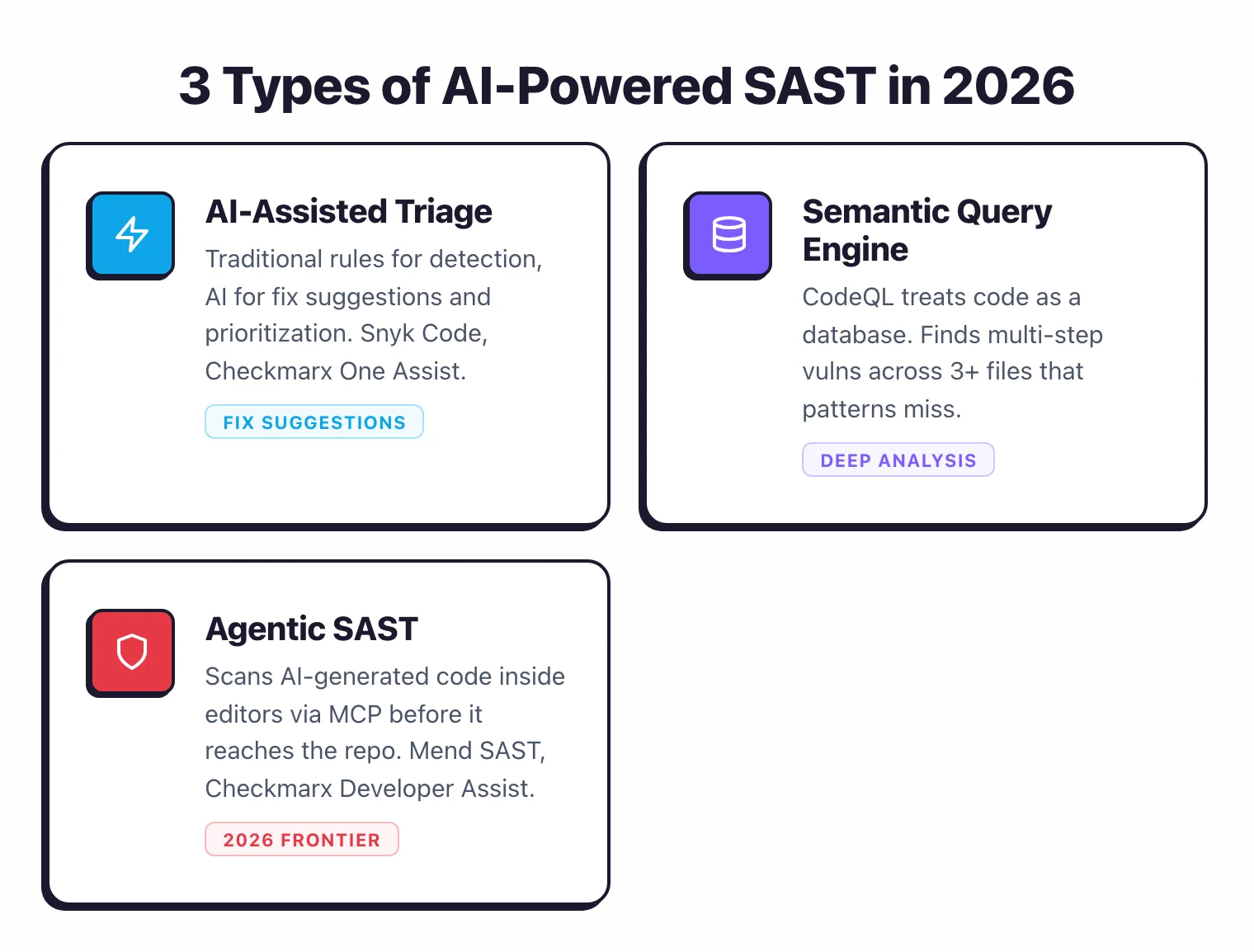

Half the SAST vendor decks in 2026 stamp “AI-powered” on the cover, but capability varies enormously. Three categories matter: AI-assisted triage (a deterministic detection engine plus AI for remediation: Snyk Code, Checkmarx One Assist, SonarQube AI CodeFix), semantic query engines (CodeQL compiles code into a queryable graph for complex multi-step vulnerability queries), and agentic SAST (Mend SAST and Checkmarx Developer Assist plug into AI editors via MCP to scan AI-generated code before it reaches the repo).

The detection engine in AI-assisted tools is still deterministic rules and data-flow analysis; AI handles the “what do I do about it?” surface. Semantic query engines can find tainted values flowing through 5 functions across 3 files; pattern matchers cannot. Agentic SAST matters because AI-generated code introduces vulnerabilities at a comparable rate to human code: NYU 2021 found ~40% of Copilot suggestions vulnerable on security-sensitive prompts (Pearce et al.); Stanford 2023 confirmed the pattern (Perry et al.).

When evaluating tools in 2026, three questions: does the AI run in the detection engine or just the remediation UI? Does it scan AI-generated code before commit? Does it produce one-click fixes or just generic problem descriptions?
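The "queryable graph" idea behind semantic engines can be illustrated with a toy: treat data flow as edges between program points and ask whether a path exists from an untrusted source to a dangerous sink. This is a deliberately simplified sketch, not how CodeQL works internally, and every node name used with it is invented.

```python
from collections import deque
from typing import Optional


def taint_path(flows: dict, source: str, sink: str) -> Optional[list]:
    """Breadth-first search for a data-flow path from source to sink."""
    queue = deque([[source]])
    seen = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == sink:
            return path
        for nxt in flows.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no flow from source to sink
```

Given edges like `request.args -> parse_filters -> build_query -> db.execute`, the function returns the full chain across functions. A single-line pattern matcher sees each step in isolation and cannot connect them.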

Why SAST Matters in 2026: AI Code + DevSecOps Reality Check

Most production code in 2026 is at least partly AI-assisted, and AI does not write secure code by default. NYU’s 2021 Copilot study generated 1,689 programs across 89 scenarios from MITRE’s Top 25 CWE list — roughly 40% came back vulnerable. Stanford’s 2023 CCS study found developers using AI assistants produced less secure code than the control group across encryption, signing, and SQL handling, and were more likely to believe their code was secure.

OWASP Top 10:2025 still owns these failures (broken access control at A01, security misconfiguration at A02, software supply chain at A03), and every one is detectable in source code before deployment. IBM’s 2025 Cost of a Data Breach report pegged the global average at $4.44M (US: $10.22M). SAST is the cheapest way to catch the same bugs that buyers find in pentest reports.

What is SAST and how does it work?

SAST (Static Application Security Testing) analyzes source code, bytecode, or compiled binaries without executing the application — parsing code into an abstract syntax tree, modeling data flow between functions, and matching against vulnerability patterns (SQL injection, XSS, hardcoded secrets, insecure deserialization). Scans run in seconds-to-minutes and integrate into IDEs, pre-commit hooks, and CI/CD pipelines.
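The "parse into a syntax tree, then match patterns" step can be shown with Python's standard ast module. The sketch below is a toy rule, far simpler than any real scanner: it flags calls to a method named execute whose first argument is an f-string or a string built with an operator. The function name is made up for illustration.

```python
import ast


def find_dynamic_sql(source: str) -> list:
    """Return line numbers where .execute() receives a dynamically built string."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        # Only method-style calls such as cursor.execute(...) are considered.
        if not (isinstance(node, ast.Call) and isinstance(node.func, ast.Attribute)):
            continue
        if node.func.attr != "execute" or not node.args:
            continue
        first = node.args[0]
        # f-strings parse as JoinedStr; "a" + b and "a" % b parse as BinOp.
        if isinstance(first, (ast.JoinedStr, ast.BinOp)):
            hits.append(node.lineno)
    return sorted(hits)
```

This rule misses the case where the query is built on one line and executed on another, which is exactly the gap that data-flow modeling closes.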

Full explainer with detection-method deep-dive (pattern matching vs cross-file taint analysis, abstract interpretation tradeoffs, scan-time vs accuracy curves): What is SAST?

What Are the Best Practices for SAST?

Most SAST failures aren’t bad tools — they’re good tools introduced poorly. The 8 practices that matter:

- Baseline first, then incremental. Full scan once → triage existing findings → switch to PR-only incremental scans so developers only see what they introduced. SonarQube’s “new code period” and Bandit’s baseline files handle this natively.

- Own your rules. Default rule sets catch common CWE patterns; your internal frameworks need 10–20 custom rules tailored to your stack. Semgrep CE’s rule syntax mirrors source code; CodeQL offers more expressive multi-step queries via QL.

- Set severity thresholds that match risk appetite. Block merges on critical/high, warn on medium, ignore informational noise. Document thresholds with engineering and security buy-in.

- Make findings visible where developers work. IDE warnings beat PR comments beat email reports. Snyk Code real-time IDE feedback + CodeQL inline PR annotations win adoption.

- Combine with DAST and SCA. SAST = code, DAST = runtime, SCA = dependencies. A SQL injection found by SAST becomes urgent when SCA confirms the vulnerable ORM. See SAST vs SCA.

- Track fix rates, not finding counts. Mean time to remediate + fix rate + finding density per KLOC. A tool that finds 500 issues nobody fixes is worse than one that finds 50 that all get resolved.

- Build a security champion program. One developer per team owns SAST findings, triages false positives, promotes secure coding internally. Distributes responsibility; prevents AppSec bottleneck.

- Measure finding density and remediation time. Decreasing findings/KLOC = developers writing more secure code (not suppressing). MTTR under 7 days on critical = working program; over 30 days = nobody reads the reports.
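The metrics named in the list above are simple arithmetic once you export findings with open and close dates. A minimal Python sketch, assuming you can get those dates out of your tool; the function names are illustrative, and both averages assume a non-empty input.

```python
from datetime import date


def finding_density(open_findings: int, lines_of_code: int) -> float:
    """Findings per thousand lines of code (KLOC)."""
    return open_findings / (lines_of_code / 1000)


def mean_time_to_remediate(fixed: list) -> float:
    """Average days between detection and fix, over resolved findings only.

    Each item is an (opened, closed) pair of dates.
    """
    days = [(closed - opened).days for opened, closed in fixed]
    return sum(days) / len(days)


def fix_rate(fixed_count: int, total_count: int) -> float:
    """Share of reported findings that were actually resolved."""
    return fixed_count / total_count
```

For example, 50 open findings in a 200,000-line codebase is a density of 0.25 per KLOC. Track that number per quarter rather than the raw count.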

What Are the Most Common SAST Mistakes?

The 6 mistakes that kill SAST adoption:

- Running only default rules. Generic CWE patterns miss vulnerabilities in custom ORMs, homegrown session libraries, and framework middleware. Adding 10–15 custom rules for critical code paths significantly improves coverage.

- Ignoring custom framework patterns. Without framework-aware rules, you get both false positives (flagging safe framework-handled patterns) and false negatives (missing real bugs in framework code). Semgrep and CodeQL support custom rules; Checkmarx adds custom sanitizer definitions for data-flow modeling.

- Treating all findings equally. A hardcoded test key in a unit test is not the same as a SQL injection in a production API. Teams that treat every finding as urgent burn out and ignore the tool. ASPM platforms like Checkmarx One and Cycode auto-correlate findings with exposure context.

- Not suppressing known false positives. Repeated noise teaches developers to ignore everything, including real findings. Build a suppression workflow with inline comments (// nosec, # nosemgrep) or centralized rules; document each suppression.

- Scanning only on the main branch. Defeats shift-left. By the time you see findings, code is already in production. Run on every PR; incremental scan cost is trivial versus production-bug cost.

- Not correlating SAST findings with SCA and DAST. A SAST SQL injection in code using a vulnerable ORM (SCA) reachable from the internet (DAST) is a confirmed compound risk, not three theoretical findings. Cross-reference at minimum periodically.
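"Document each suppression" is easy to say and hard to enforce, so it helps to audit it automatically. The sketch below scans source text for inline suppression markers and reports the lines that carry no justification. The "reason:" convention is an assumption made for this example, not a feature of Bandit or Semgrep, and the function name is hypothetical.

```python
import re

# Matches "# nosec" / "// nosec" and "# nosemgrep" / "// nosemgrep" markers,
# capturing whatever text follows the marker on the same line.
SUPPRESSION = re.compile(r"(?:#|//)\s*(nosec|nosemgrep)\b(.*)")


def undocumented_suppressions(source: str) -> list:
    """Return line numbers of suppressions that lack a 'reason:' note."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        match = SUPPRESSION.search(line)
        # Hypothetical team convention: every suppression must state "reason: ...".
        if match and "reason:" not in match.group(2):
            hits.append(lineno)
    return hits
```

Running a check like this in CI keeps suppressions reviewable, which stops them from becoming a silent way to turn the scanner off.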

2026 SAST Methodology: How I Compare These Tools

I compare SAST tools using publicly available signals: vendor documentation, OWASP Benchmark v1.2 scores, vendor-published false-positive and scan-time numbers, GitHub issues and release notes for OSS scanners, customer case studies, and discussions in support forums.

I do not run my own per-tool false-positive benchmark against a fixed corpus. That work is published by vendors and the OWASP Benchmark project, and I cite those sources directly when referencing specific numbers.

I weigh six dimensions when comparing SAST tools:

- Languages and framework coverage — language count and framework-specific rule depth, taken from vendor docs

- Taint analysis depth — intra-file vs. cross-file vs. inter-procedural, based on documented engine architecture

- CI scan speed — vendor-published scan times and customer case studies (e.g., Veracode Pipeline Scan’s 90-second median)

- False positive rate — vendor-published benchmarks (e.g., Cycode, Veracode) and OWASP Benchmark v1.2 scores

- Pricing transparency — whether the vendor publicly displays pricing on their website

- Audit trail completeness — whether vendor documentation describes SARIF export, suppression-history logging, and PCI DSS / SOC 2 / HIPAA report templates

Tools that require a sales call for pricing information are noted as “contact for pricing” — I do not publish estimates.

Scoring Rubric

I do not publish per-tool numeric scores. The rubric below is the framework I apply when comparing SAST tools — it shows what I weigh and how, so readers can apply the same lens to their own shortlist.

The weights reflect what matters most in practice: false positives kill adoption faster than anything else, which is why detection quality carries the most weight.

| Criterion | Weight | Max Score | What I Look At |
|---|---|---|---|
| Detection quality (taint depth) | 30% | 30 pts | Documented engine architecture (intra-file vs. cross-file vs. inter-procedural); second-order injection coverage; OWASP Benchmark v1.2 results where published |
| False positive rate | 25% | 25 pts | Vendor-published FP benchmarks (Cycode, Veracode), OWASP Benchmark v1.2 scores, and customer-reported noise levels in support forums |
| Language and framework coverage | 20% | 20 pts | Language count from vendor docs; framework-specific rule depth (Rails, Spring, Django, React); legacy language support |
| CI/CD speed | 10% | 10 pts | Vendor-published scan times and customer case studies; incremental scan support; pipeline integration complexity |
| Developer experience | 10% | 10 pts | IDE integration; fix suggestion quality; PR annotation support; onboarding time for a new project |
| Compliance and audit trails | 5% | 5 pts | SARIF export; suppression history logging; PCI DSS / SOC 2 / HIPAA / MISRA report templates |

Under this rubric, tools that excel in detection quality but run slower (like Fortify and Coverity) come out strong on the heaviest-weighted dimension. Tools like Semgrep CE and Bandit trade taint depth for CI speed and developer experience.
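For readers who want to apply the rubric to their own shortlist, a minimal Python sketch of the arithmetic follows. The weights are the ones in the table; the 0.0 to 1.0 rating scale, the dictionary keys, and the two example profiles are assumptions made up for illustration and are not scores for any real tool.

```python
# Maximum points per criterion, taken from the rubric table
WEIGHTS = {
    "detection_quality": 30,
    "false_positive_rate": 25,
    "language_coverage": 20,
    "ci_cd_speed": 10,
    "developer_experience": 10,
    "compliance_audit": 5,
}


def rubric_score(ratings: dict[str, float]) -> float:
    """Turn per-criterion ratings (0.0 = worst, 1.0 = best) into points out of 100."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= ratings[name] <= 1.0:
            raise ValueError(f"{name} must be between 0.0 and 1.0")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical profiles: a deep but slow scanner and a fast pattern scanner
deep_scanner = {
    "detection_quality": 0.9, "false_positive_rate": 0.8, "language_coverage": 0.8,
    "ci_cd_speed": 0.3, "developer_experience": 0.5, "compliance_audit": 1.0,
}
fast_scanner = {
    "detection_quality": 0.5, "false_positive_rate": 0.5, "language_coverage": 0.6,
    "ci_cd_speed": 1.0, "developer_experience": 0.9, "compliance_audit": 0.4,
}
print(rubric_score(deep_scanner), rubric_score(fast_scanner))
```

With these made-up ratings the deep scanner lands ahead despite poor CI speed, which is the effect of putting 55 of the 100 points on detection quality and false positives. Teams that gate every PR may reasonably shift weight toward CI/CD speed.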

For language-specific shortlists, see the dedicated comparisons: Python, JavaScript and TypeScript, Java, Go, C#/.NET, PHP. Each one compares 6-8 tools tuned for that language’s framework patterns and typical CI/CD setups.

Three Patterns Across the 34 SAST Tools I Track (2026)

Three patterns separate the 34 active SAST tools I track in 2026 — each one is visible in vendor-published benchmarks, OWASP Benchmark v1.2 scores, and the false-positive discussions on support forums and GitHub issues.

Pattern 1 — False positive rates span an order of magnitude across SAST tools

False positive rates in SAST tools span an order of magnitude on the same OWASP Benchmark v1.2 corpus. Cycode reports a 2.1% false-positive rate in its March 2025 next-generation SAST announcement, and Veracode publishes a sub-1.1% figure for its whole-program static analysis.

Pattern-only scanners without custom rules land much higher — often 20-40% on framework-heavy code.

Tools with cross-file taint tracking (Snyk Code, Checkmarx, Coverity, Veracode) usually sit in the single-digit to mid-teens range out of the box, and drop further once you tune rules to reduce SAST false positives.

The lesson: ignore the headline FP number in vendor decks. Re-test on your own framework. A scanner with 5% FP on Spring Boot can hit 25% on Django.
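Re-testing on your own code can be as simple as triaging a sample of findings and counting. The sketch below assumes a hypothetical triage export of (framework, was it a real bug) pairs; the data is invented to mirror the 5% and 25% figures above and is not a measurement of any tool.

```python
from collections import defaultdict


def false_alarm_share(triaged: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of reported findings per framework that triage marked as false alarms."""
    totals = defaultdict(int)
    false_alarms = defaultdict(int)
    for framework, is_real_bug in triaged:
        totals[framework] += 1
        if not is_real_bug:
            false_alarms[framework] += 1
    return {fw: false_alarms[fw] / totals[fw] for fw in totals}


# Made-up triage results: (framework, was it a real bug?)
triaged = (
    [("spring-boot", True)] * 19 + [("spring-boot", False)] * 1
    + [("django", True)] * 15 + [("django", False)] * 5
)
shares = false_alarm_share(triaged)
for framework, share in shares.items():
    print(f"{framework}: {share:.0%} of findings were false alarms")
```

One caveat when comparing against published numbers: this measures the share of reported findings that were noise, which is what developers feel day to day. The OWASP Benchmark computes false positive rate as FP / (FP + TN) over known-safe test cases, so the two figures are not directly interchangeable.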

Pattern 2 — Scan-time spread across SAST tools is even wider than the FP spread

Static analysis scan times span four orders of magnitude on the same codebase, depending on tool depth.

On a 100K-LOC codebase in a language they support, lightweight scanners (Bandit for Python, gosec for Go, Semgrep CE) finish in seconds to a couple of minutes.

Deep-mode commercial scans (Fortify SCA, Coverity) run from tens of minutes to several hours, and gigabyte-scale codebases can stretch into days per Fortify’s own performance guide. Veracode Pipeline Scan publishes a 90-second median scan time as the engineered middle-ground for CI/CD.

This matters because PR-blocking quality gates need sub-five-minute scans to be tolerated by developers. Above that, the gate gets bypassed or the team disables the merge block.
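One way to keep a gate inside that tolerance is to give the scan an explicit time budget and report when it is exceeded, rather than letting the PR hang. The sketch below is a generic wrapper, not any vendor's integration: the function name is hypothetical, the command is whatever scanner CLI you use, and treating a non-zero exit code as "findings" is an assumption you should check against your scanner's documentation.

```python
import subprocess
import sys
import time

PR_GATE_BUDGET_SECONDS = 300  # the five-minute tolerance described above


def run_within_budget(command: list[str],
                      budget_seconds: float = PR_GATE_BUDGET_SECONDS) -> dict:
    """Run a scan command and classify the result against a time budget."""
    started = time.monotonic()
    try:
        completed = subprocess.run(
            command, capture_output=True, text=True, timeout=budget_seconds
        )
    except subprocess.TimeoutExpired:
        # The child process is killed; the caller decides whether to block or defer.
        return {"status": "over_budget", "exit_code": None,
                "elapsed": time.monotonic() - started}
    # Assumption: exit code 0 means clean, anything else means findings or errors.
    status = "passed" if completed.returncode == 0 else "findings"
    return {"status": status, "exit_code": completed.returncode,
            "elapsed": time.monotonic() - started}


# Stand-in command: the Python interpreter instead of a real scanner CLI
result = run_within_budget([sys.executable, "-c", "pass"])
print(result["status"])
```

A common design choice is to route "over_budget" results to a nightly deep scan instead of failing the PR, so the slow analysis still runs without training developers to bypass the gate.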

Pattern 3 — Cross-file taint tracking is the moat that separates commercial SAST tools

Cross-file (inter-procedural) taint tracking is the single capability that separates commercial SAST tools from open-source pattern scanners.

Of the 34 active tools I track, only eight do full inter-procedural taint analysis: Semgrep Pro, Snyk Code, CodeQL, Checkmarx, Coverity, Fortify, Veracode, and Infer via bi-abduction.

The intra-procedural pack — Bandit, Brakeman, gosec, SpotBugs, PMD — sees a function but mostly cannot reason about its caller.

If your threat model includes second-order SQL injection, stored-XSS-via-database, or any bug where source and sink live in different files, intra-procedural alone is not enough. That is the single biggest reason the commercial tier exists.
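A small Python illustration of a source and sink that live apart follows. It is a single runnable file for convenience; the comments mark where the module boundary would be in a real project, and all function names are hypothetical. Looking at either function in isolation, a scanner cannot tell whether the value reaching the query is attacker-controlled, so it must either flag every string-built query or stay silent.

```python
import sqlite3


# --- would live in handlers.py: the source, where untrusted input enters ---
def handle_search(conn: sqlite3.Connection, user_input: str) -> list:
    return find_users_unsafe(conn, user_input)


# --- would live in repository.py: the sink, where the query is built ---
def find_users_unsafe(conn: sqlite3.Connection, name: str) -> list:
    query = f"SELECT name FROM users WHERE name = '{name}'"  # injectable
    return conn.execute(query).fetchall()


def find_users_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats the value as data, not SQL
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


def demo_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT)")
    conn.executemany("INSERT INTO users VALUES (?)", [("alice",), ("bob",)])
    return conn


conn = demo_db()
payload = "x' OR '1'='1"
print(len(handle_search(conn, payload)))    # rows leaked through the unsafe path
print(len(find_users_safe(conn, payload)))  # rows returned by the safe path
```

Connecting `user_input` in one module to the f-string in another is exactly the step that requires following the call across the function and file boundary.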

Frequently Asked Questions

What is SAST (Static Application Security Testing)?

What is the difference between SAST and DAST?

Which SAST tool is best for enterprise codebases?

How do I reduce false positives in SAST?

Can SAST tools be integrated into CI/CD pipelines?

What is the best SAST tool in 2026?

Which SAST tool supports the most programming languages?

How long does a SAST scan take?

Is SAST enough for application security?

What is the best free SAST tool in 2026?

Which SAST tools are open source?

What are the best SAST tools for Python?

Can SAST replace DAST?

How accurate are SAST tools?


Written & maintained by

Suphi Cankurt

Eight years on the vendor side of application-security sales — thousands of evaluations and demos. I started AppSec Santa in 2022 to put that insider view to work for buyers. Independent of any vendor, paid by none, and honest about what fits whom.