AI security is a double-edged problem. On one side, AI systems themselves are vulnerable — LLMs can be tricked with prompt injection, AI-generated code ships with exploitable flaws, and the model supply chain is a growing attack surface.

On the other side, attackers are using AI to make phishing more convincing, deepfakes more realistic, and vulnerability exploitation faster. This page covers both sides.

I pulled data from 15+ industry reports, academic papers, and government frameworks (IBM, OWASP, Gartner, HiddenLayer, Snyk, Google DeepMind, MITRE ATLAS, and others) published in 2024–2026. I also added findings from two original studies I ran in early 2026, and every statistic links to its source.

For related data, see my Software Vulnerability Statistics and Supply Chain Attack Statistics pages.

Key statistics at a glance#

AI-generated code vulnerabilities#

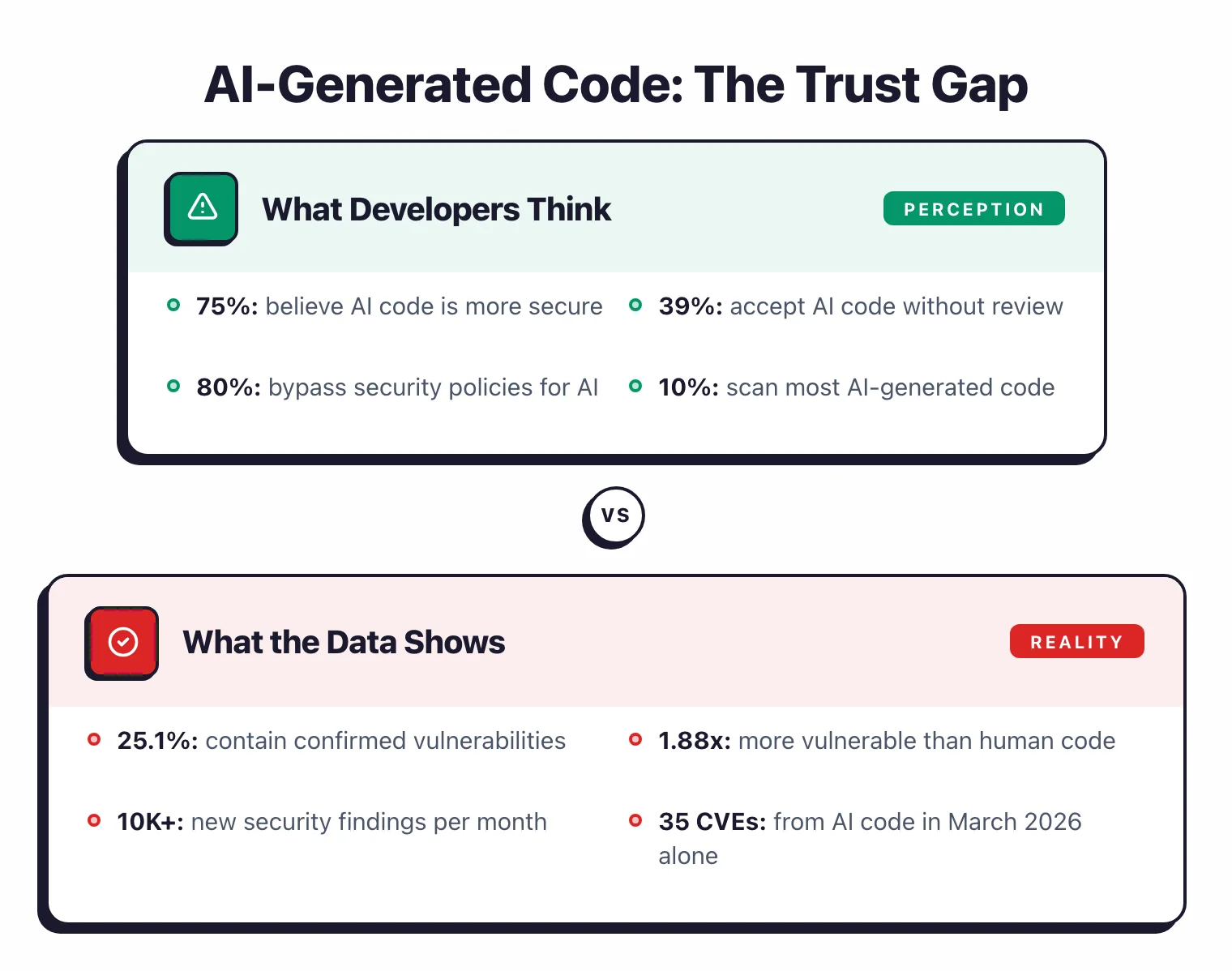

AI coding assistants are writing a growing share of production code. The security of that code is worse than most developers think.

How vulnerable is AI-generated code?#

- I tested 522 code samples from six LLMs and found a 25.7% vulnerability rate — roughly one in four samples contained a confirmed flaw — AppSec Santa AI Code Study 2026

- AI-generated code is 1.88x more likely to introduce vulnerabilities than human-written code — Georgia Tech Vibe Security Radar 2025

- GitHub Copilot produces problematic code approximately 40% of the time in security-sensitive contexts — Pearce et al., ACM/TOSEM 2025

- AI-generated code introduced over 10,000 new security findings per month as of June 2025, a 10x increase from December 2024 — Infosecurity Magazine 2025

- At least 35 new CVEs were disclosed in March 2026 alone due to AI-generated code, up from 6 in January — Georgia Tech 2026

The developer trust gap#

- 75% of developers believe AI code is more secure than human code, yet 56% admit AI suggestions sometimes introduce security issues — Snyk 2025

- Nearly 80% of developers admitted to bypassing security policies when using AI coding tools — Snyk 2025

- Less than 25% of developers use SCA tooling to check AI-generated code before using it; only 10% scan most AI code — Snyk 2025

- Python showed higher vulnerability rates (16-18.5%) than JavaScript (8.7-9.0%) and TypeScript (2.5-7.1%) across AI generators — ACM/TOSEM 2025

AI coding tool adoption#

AI coding assistants went from novelty to default tooling in under three years. The installed base is massive.

- GitHub Copilot reached ~20 million total users by July 2025 and 4.7 million paid subscribers by January 2026 (~75% YoY growth) — GitHub/Panto 2026

- 90% of Fortune 100 companies have adopted GitHub Copilot — GitHub 2025

- AI coding assistants now generate 46% of code written in enabled files — GitHub 2025

- The AI coding tools market is projected to grow from ~$4-5 billion (2025) to $12-15 billion by 2027 at 35-40% CAGR — Panto/Index.dev 2026

Prompt injection and LLM attacks#

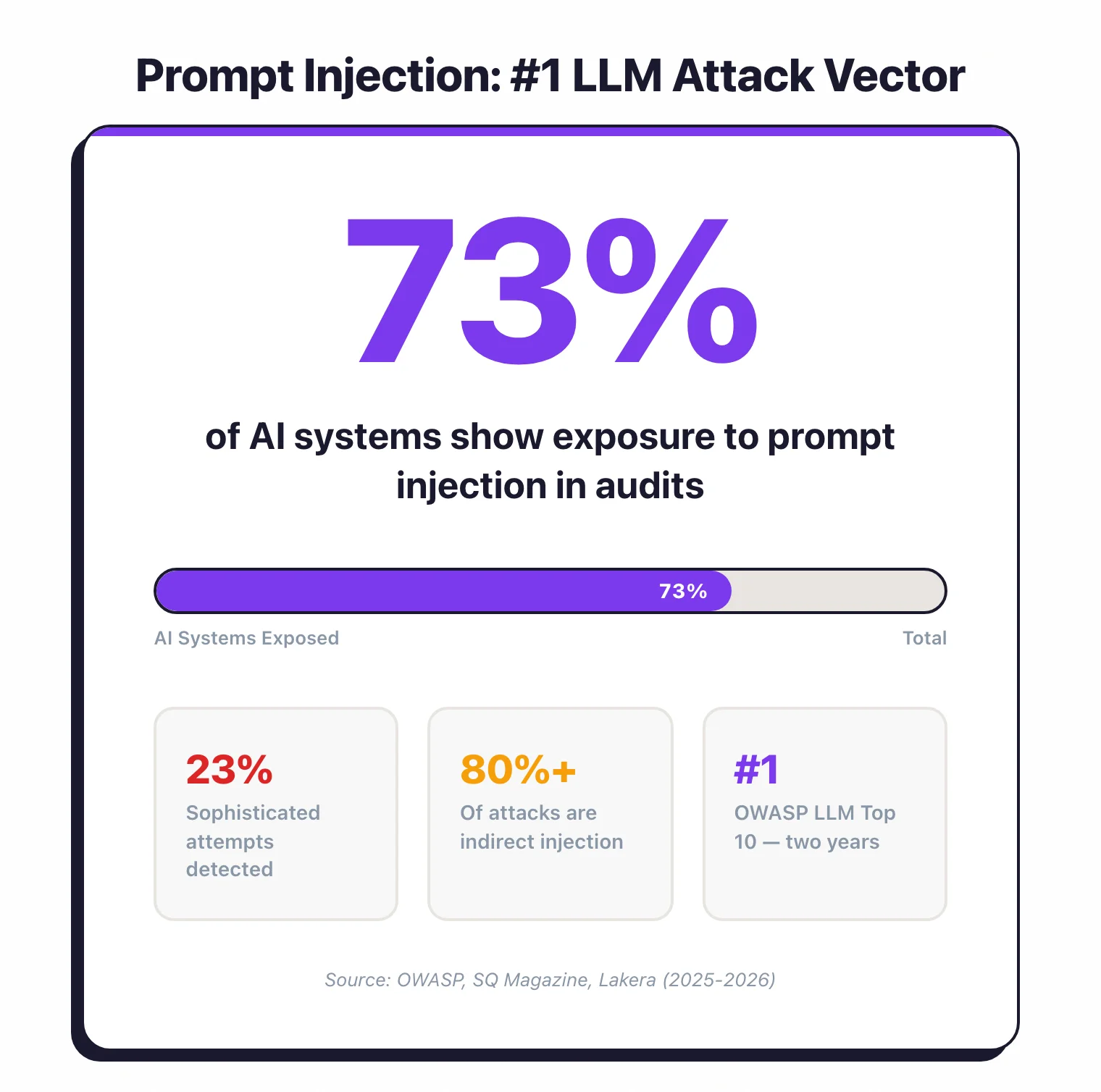

Prompt injection is the SQL injection of the AI era. It’s easy to pull off, hard to defend against, and it’s the most common attack vector against LLM applications.

How prevalent is prompt injection?#

- Prompt injection holds the #1 spot in OWASP’s Top 10 for LLM Applications for two consecutive editions (2024 and 2025) — OWASP 2025

- 73% of AI systems assessed in security audits showed exposure to prompt injection vulnerabilities — SQ Magazine 2026

- Attack success rates range between 50% and 84% depending on model configuration — MDPI Information Journal 2025

- Current detection methods catch only 23% of sophisticated prompt injection attempts — SQ Magazine 2026

- Indirect prompt injection now accounts for over 80% of documented attack attempts in enterprise contexts — Lakera/Obsidian 2025

Package hallucination and slopsquatting#

- 19.7% of packages recommended by AI code generators are hallucinated (non-existent) across 756,000 samples — USENIX Security 2025

- 43% of hallucinated package names are repeated across queries, making them predictable targets for slopsquatting attacks — USENIX Security 2025

- 38% of hallucinations are conflations of two real packages, 13% are typo variants, 51% are pure fabrications — Help Net Security 2025

AI breach landscape#

AI breaches are no longer theoretical. The data shows they’re happening at scale, and most organizations aren’t ready.

- 74% of IT leaders say they definitely experienced an AI-related breach in the past year — HiddenLayer 2025

- 89% of IT leaders state AI models in production are critical to their organization’s success — HiddenLayer 2025

- 96% of companies are increasing AI security budgets in 2025, but over 40% allocated less than 10% of total budget — HiddenLayer 2025

- 76% of organizations report ongoing internal debate about which teams should own AI security — HiddenLayer 2025

- 97% of AI-breached organizations lacked proper access controls on their AI systems, and 63% had no AI governance policies at all — IBM 2025

- IBM X-Force observed a 44% increase in attacks exploiting public-facing applications, largely driven by AI-enabled vulnerability discovery — IBM X-Force 2026

- Infostealer malware exposed over 300,000 ChatGPT credentials in 2025 — IBM X-Force 2026

Agentic AI and MCP security#

Agentic AI systems — where AI models autonomously call tools, browse the web, and execute code — create attack surfaces that traditional security models weren’t designed for.

- 83% of organizations planned agentic AI deployments, but only 29% felt ready to do so securely — Cisco 2025

- MCP-related vulnerabilities grew 270% from Q2 to Q3 in 2025; 95 CVEs filed in 2025 alone (near zero before 2025) — CyberSecStats 2026

- Over 30 CVEs targeting MCP servers, clients, and infrastructure were filed in January–February 2026 alone, including a CVSS 9.6 RCE flaw — MCP Security Research 2026

- Of 7,000+ MCP servers analyzed, 36.7% were vulnerable to SSRF — Wallarm 2026

- 1 in 8 reported AI breaches is now linked to agentic AI systems — HiddenLayer 2026

- Nearly 49% of organizations are entirely blind to machine-to-machine traffic and cannot monitor AI agents — CyberSecStats 2026

- For every verified MCP server in registries, there are up to 15 lookalike servers from unverified sources — Security Boulevard 2026

For my own testing of MCP server security, see the MCP Server Security Audit 2026 .

AI model supply chain#

Just like software packages, AI models are shared through public registries. And just like npm, those registries contain malicious content.

- Over 1 million new models were uploaded to Hugging Face in 2024, with a 6.5x increase in malicious models — JFrog 2025

- Out of 4.47 million model versions scanned, 352,000 unsafe or suspicious issues were found across 51,700 models — Protect AI 2025

- 23% of the top 1,000 most-downloaded models on Hugging Face had been compromised at some point — Industry Research 2025

- 4.42% of all CVEs are now AI-related, up from 3.87% in 2024 — a 34.6% year-over-year increase — CyberSecStats 2026

- Poisoning just 3% of training data can yield 12-41% attack success rates in code-generation models — arXiv 2025

AI-powered phishing and deepfakes#

AI hasn’t just changed defense. It has changed offense too, and the attacker-side gains are alarming.

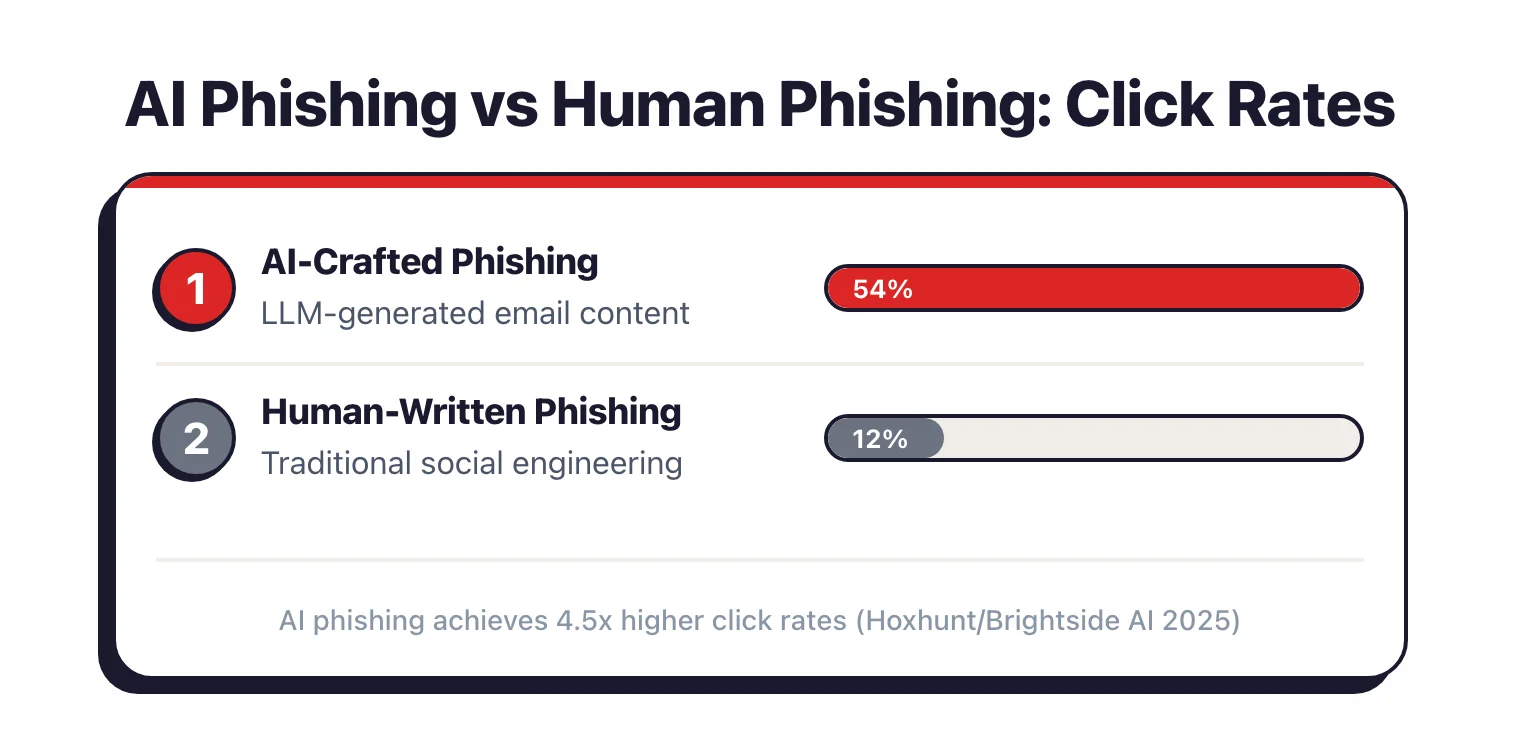

AI phishing#

- AI-crafted phishing emails achieved 54% click rates compared to 12% for human-written ones — Brightside AI/Hoxhunt 2025

- 82.6% of phishing emails detected between September 2024 and February 2025 utilized AI, a 53.5% year-on-year increase — Keepnet Labs 2025

- AI indicators in phishing emails surged from 4% in November 2025 to 56% in December 2025 — Hoxhunt 2026

- 63% of cybersecurity professionals cite AI-driven social engineering as the top cyber threat in 2026 — StrongestLayer 2026

Deepfake fraud#

- Deepfake-related fraud losses in the US reached $1.1 billion in 2025, tripling from $360 million in 2024 — Surfshark 2025

- Executive impersonation deepfakes caused $217 million in fraudulent transfer losses — Security Magazine 2025

- Generative AI-facilitated fraud losses projected to climb from $12.3 billion (2023) to $40 billion by 2027 at 32% CAGR — Experian/Fortune 2026

Shadow AI and governance#

When employees use AI tools outside company policy, they create blind spots that security teams can’t protect.

- 57% of employees use personal GenAI accounts for work; 33% admit inputting sensitive information into unapproved tools — Gartner 2025

- 46% of organizations reported internal data leaks through generative AI employee prompts — Cisco 2025

- Only 37% of organizations have AI governance policies in place; 63% operate without guardrails — ISACA/Vectra 2025

- 69% of organizations suspect employees use prohibited public GenAI tools — Lasso Security 2026

- One in five organizations (20%) suffered a shadow AI breach, adding an average of $670,000 to breach costs — IBM 2025

- Gartner predicts 40% of AI data breaches will stem from cross-border GenAI misuse by 2027 — Gartner 2025

AI in security defense#

The same technology creating new risks is also proving useful on the defense side. The numbers are encouraging.

- Organizations using security AI and automation extensively save an average of $1.9 million per breach — IBM 2025

- AI and automation cut the breach lifecycle by an additional 80 days compared with organizations that do not use them — IBM 2025

- The global average breach lifecycle dropped to 241 days in 2025, the lowest level in nearly a decade — IBM 2025

- Trail of Bits reports 20% of all bugs reported to clients are now initially discovered by AI-augmented auditors — Trail of Bits 2026

- Google DeepMind analyzed over 12,000 real-world attempts to use AI in cyberattacks across 20 countries, identifying 7 archetypal attack categories — DeepMind 2025

- MITRE ATLAS framework (v5.1.0, November 2025) now documents 16 tactics, 84 techniques, 56 sub-techniques, and 42 real-world AI attack case studies — MITRE ATLAS

- Gartner predicts AI agents will reduce the time to exploit account exposures by 50% by 2027 — Gartner 2025

Market and predictions#

AI security is one of the fastest-growing segments in cybersecurity.

- AI in cybersecurity market valued at $29.64 billion in 2025, projected to reach ~$234 billion by 2032 at 31.7% CAGR — Fortune Business Insights 2025

- AI red teaming services market projected to grow from $1.75 billion (2025) to $6.17 billion by 2030 at 28.5% CAGR — Research and Markets 2026

- Global information security spending estimated at $240 billion in 2026, up 12.5% — Gartner 2025

- By 2028, 50% of enterprise cybersecurity incident response efforts will focus on AI-driven application incidents — Gartner 2026

- EU AI Act penalties reach up to 35 million euros or 7% of global annual turnover for non-compliance — European Commission 2024

For AI security tools that address these risks, see my category comparison.

My own research#

I ran two original studies in early 2026 that directly address AI security.

AI-generated code security#

I tested 522 code samples from six LLMs (GPT, Claude, Gemini, DeepSeek, Llama, Grok) using five SAST tools (four open-source plus CodeQL). The 25.7% vulnerability rate is lower than the ~40% found by earlier academic studies, possibly reflecting model improvements since 2021.

The most common weaknesses were CWE-918 (SSRF) at 32 findings and CWE-22/23 (path traversal) at 30. Full findings: AI-Generated Code Security Study 2026 .

MCP server security#

I audited 33 MCP servers using YARA rules and mcp-scan, finding 27 YARA detections and 116 mcp-scan findings. After manual review, ~78% turned out to be false positives.

The real issues were concentrated in a handful of servers with overly broad filesystem access and unauthenticated tool execution. Full findings: MCP Server Security Audit 2026 .

Sources & methodology#

Every number on this page links to a published report, academic paper, or vendor study. If I cannot trace a statistic to a primary source, I do not include it.

Academic research:

- Pearce et al. (2025) — ACM/TOSEM empirical study of Copilot code security

- Georgia Tech Vibe Security Radar (2025) — AI code vulnerability rates

- USENIX Security (2025) — Package hallucination and slopsquatting study

- arXiv (2025) — Scaling trends for data poisoning in code-generation models

Standards and frameworks:

- OWASP Top 10 for LLM Applications 2025

- MITRE ATLAS v5.1.0 — adversarial threat landscape for AI systems

Industry reports:

- IBM Cost of a Data Breach Report 2025 — latest IBM/Ponemon study covering 600+ breached organizations across 17 industries

- IBM X-Force Threat Intelligence Index 2026

- HiddenLayer AI Threat Landscape Report 2025

- HiddenLayer AI Threat Landscape Report 2026

- Snyk AI Code Security Report 2025

- Cisco State of AI Security 2025

- Google DeepMind Cybersecurity Threat Evaluation 2025

- Gartner AI Security Predictions (2025-2026)

- Hoxhunt Phishing Trends Report 2026

Original research (AppSec Santa):

- AI-Generated Code Security Study 2026 — 522 code samples, 6 LLMs, 5 SAST tools

- MCP Server Security Audit 2026 — 33 MCP servers, YARA + mcp-scan analysis