Protect AI Guardian is an AI security gateway that scans ML models for malicious payloads and enforces security policies before models reach production.

Guardian was built by Protect AI, a Seattle-based company backed by threat intelligence from huntr, an early AI/ML bug bounty platform with 17,000+ security researchers.

As of April 2025, Guardian has scanned over 4 million models on Hugging Face through its partnership with the platform.

Palo Alto Networks completed its acquisition of Protect AI in July 2025, integrating Guardian into the Prisma AIRS platform alongside two companion products: Recon (automated AI red teaming) and Layer (runtime security monitoring).

What is Protect AI Guardian?

Guardian addresses a specific problem: ML models are executable code, and organizations download them from public repositories on trust. Pickle files can execute arbitrary code during deserialization.
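The pickle risk is easy to reproduce. The following is a minimal sketch, not taken from Guardian or ModelScan; the class name `Payload` is hypothetical, and a harmless arithmetic expression stands in for a real payload.

```python
import pickle


class Payload:
    """Hypothetical stand-in for a malicious object hidden inside a model file."""

    def __reduce__(self):
        # pickle calls the returned callable with these arguments at load time.
        # A real attack would reference something like os.system; this demo
        # only evaluates a harmless arithmetic expression.
        return (eval, ("6 * 7",))


blob = pickle.dumps(Payload())

# Loading the bytes runs eval("6 * 7"); no Payload instance is ever rebuilt.
result = pickle.loads(blob)
print(result)  # 42
```

The point of the sketch is that the code runs inside `pickle.loads` itself, before any application logic gets a chance to inspect the loaded object.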

Model architectures can contain hidden layers that act as backdoors. Even formats marketed as safe, like safetensors, warrant verification.

Guardian sits between your model sources (Hugging Face, MLflow, S3, SageMaker, Git repositories) and your production environment.

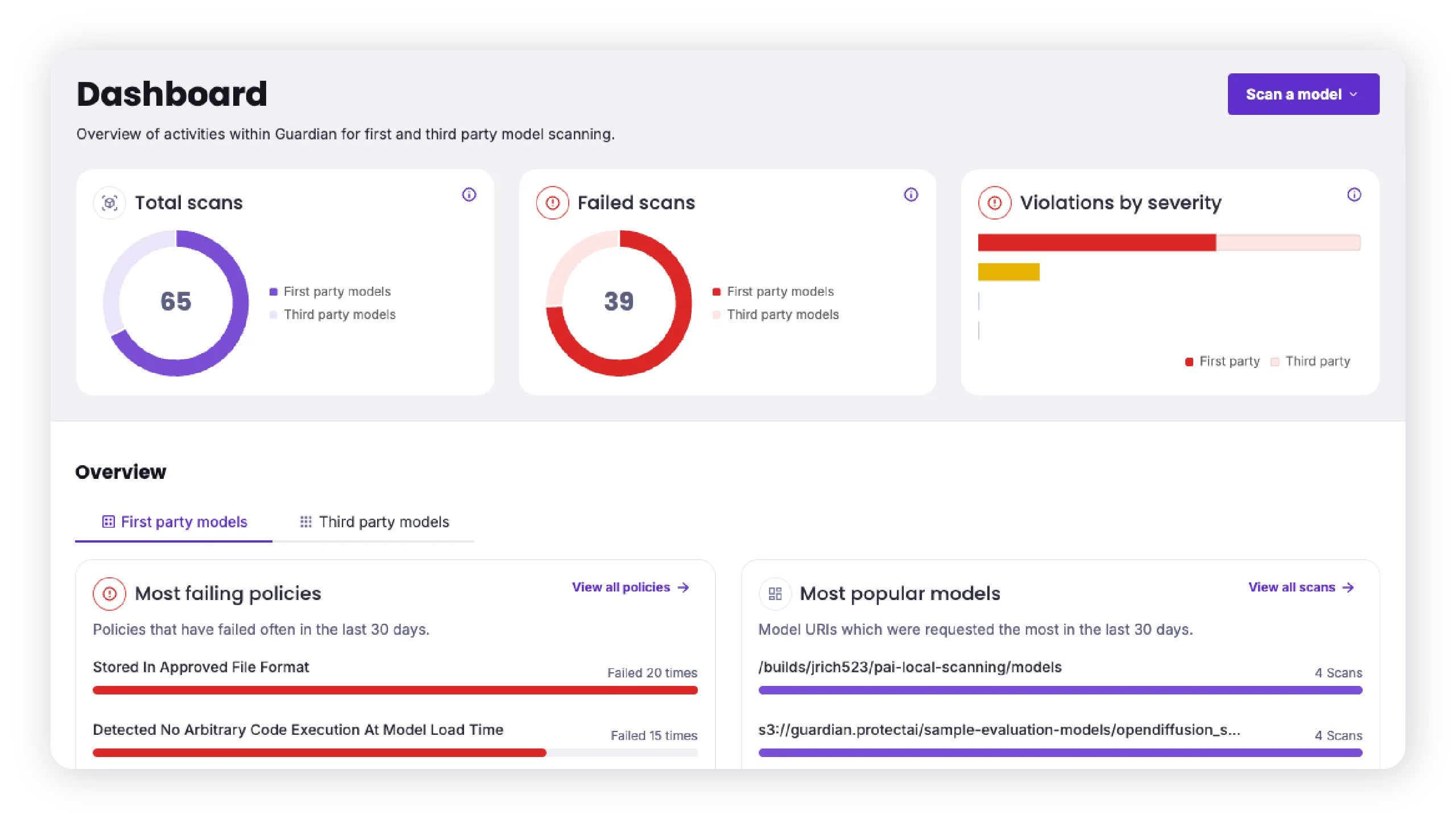

It scans every model against 35+ format-specific checks, applies your security policies, and blocks anything that doesn’t pass.

The platform builds on ModelScan, Protect AI’s open-source model scanner (Apache 2.0 license, 640+ GitHub stars).

Guardian extends ModelScan with configurable policies, access controls, compliance dashboards, and enterprise integrations.

Key Features

| Feature | Details |
|---|---|
| Model Formats | 35+ including PyTorch, TensorFlow, ONNX, Keras, pickle, joblib, dill, GGUF, GGML, safetensors |
| Threat Detection | Deserialization attacks, architectural backdoors, malicious operations, runtime threats |
| Policy Engine | Granular rules for formats, sources, metadata, and scan findings |
| Hugging Face Scanning | 4M+ public models scanned continuously |
| Deployment Options | Cloud platform, local scanning via Docker containers, CLI, SDK |
| Integrations | Hugging Face, MLflow, S3, SageMaker Model Registry, Git, Dataiku |
| Open-Source Base | Built on ModelScan (Apache 2.0, 640+ stars) |
| Threat Intelligence | Powered by 17,000+ huntr security researchers |

Scanning capabilities

Guardian scans models across four threat categories:

- Deserialization attacks: Detects code execution payloads embedded in pickle, joblib, dill, and cloudpickle files. These are the most common ML supply chain attack vector.

- Architectural backdoors: Identifies hidden layers or modified weights that change model behavior on specific trigger inputs while leaving outputs on ordinary inputs unchanged.

- Malicious operations: Flags suspicious TensorFlow Lambda layers, PyTorch operations, and other framework-specific threats.

- Supply chain risks: Tracks model provenance, dependencies, and licensing to identify upstream risks.
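To illustrate the first category, a scanner can inspect which callables a pickle would import without ever loading it. The sketch below uses the standard library's `pickletools.genops` for that. It is a simplified heuristic under stated assumptions, not Guardian's or ModelScan's actual implementation; the denylist `UNSAFE_GLOBALS` and the function names are hypothetical.

```python
import pickle
import pickletools

# Hypothetical denylist for illustration; real scanners maintain far larger lists.
UNSAFE_GLOBALS = {
    ("builtins", "eval"),
    ("builtins", "exec"),
    ("os", "system"),
    ("posix", "system"),
    ("nt", "system"),
    ("subprocess", "Popen"),
}

STRING_OPCODES = {"UNICODE", "BINUNICODE", "SHORT_BINUNICODE", "BINUNICODE8"}


def imported_globals(data: bytes) -> list:
    """List (module, name) pairs a pickle would import, without loading it."""
    found = []
    strings = []  # string constants seen so far, used to resolve STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name == "GLOBAL":
            # Older protocols encode the import as a single "module name" string.
            module, name = arg.split(" ", 1)
            found.append((module, name))
        elif opcode.name in STRING_OPCODES:
            strings.append(arg)
        elif opcode.name == "STACK_GLOBAL" and len(strings) >= 2:
            # Heuristic: assume the two most recent strings are module and name.
            found.append((strings[-2], strings[-1]))
    return found


def unsafe_findings(data: bytes) -> list:
    """Return the imported globals that appear on the denylist."""
    return [g for g in imported_globals(data) if g in UNSAFE_GLOBALS]


class Demo:
    """Hypothetical payload object; eval of arithmetic stands in for real code."""

    def __reduce__(self):
        return (eval, ("6 * 7",))


suspicious = pickle.dumps(Demo())
benign = pickle.dumps({"weights": [0.1, 0.2]})

print(unsafe_findings(suspicious))  # [('builtins', 'eval')]
print(unsafe_findings(benign))      # []
```

Because the bytes are only disassembled, never unpickled, the payload does not run during the check. A denylist like this is easy to bypass, which is one reason production scanners layer many format-specific checks on top.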

Policy enforcement

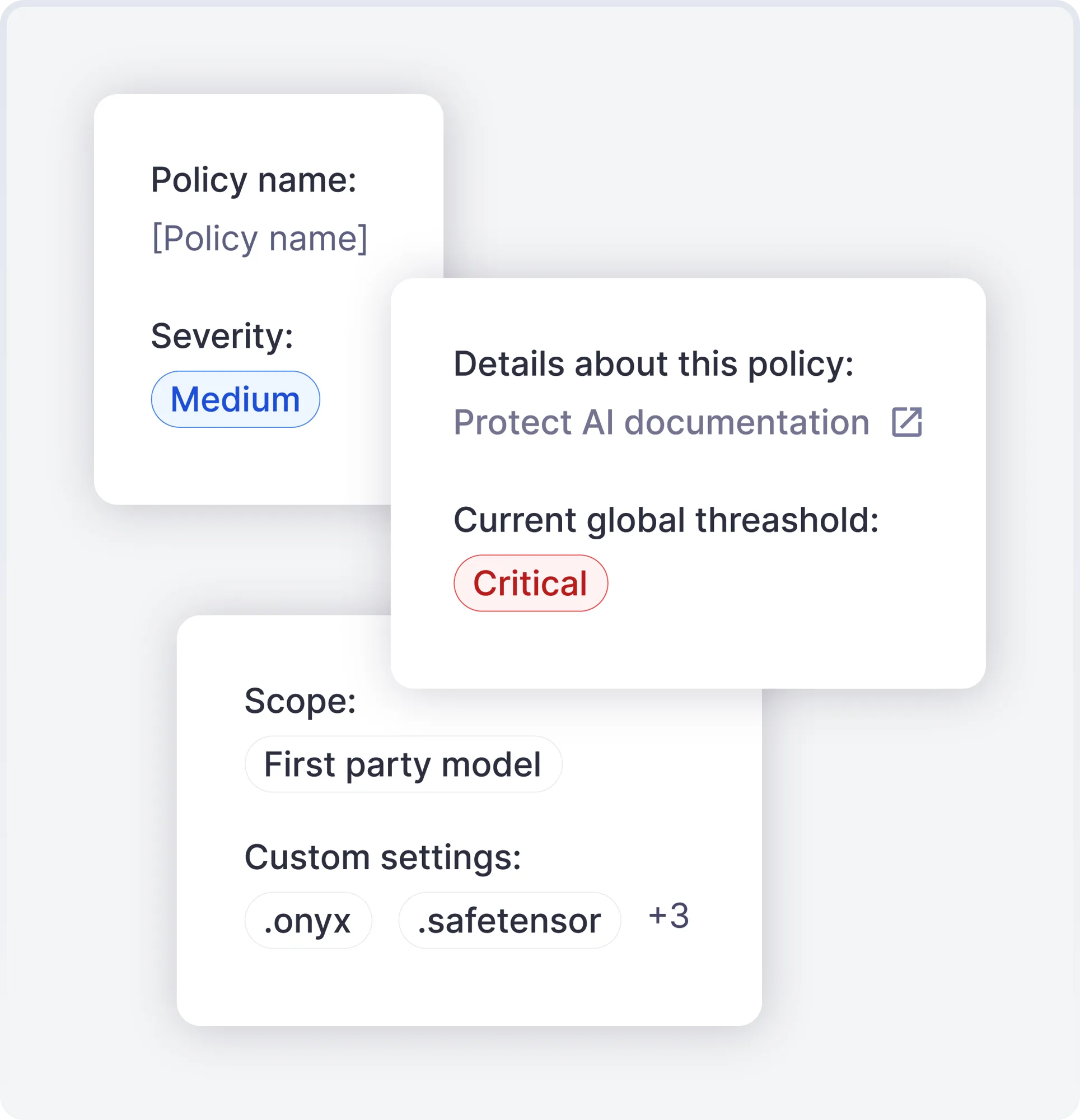

Guardian lets you define security policies with granular rules. Policies can specify approved model formats, required source verification, severity thresholds, and custom rules based on scan findings. Models that violate policies are blocked from deployment.

Policies are configurable per security group, allowing different rules for development, staging, and production environments.
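The shape of such a policy check can be sketched in a few lines. Everything here is an assumption made for illustration: the `Policy` and `ScanResult` classes, the severity names, and the environment names are hypothetical and do not reflect Guardian's actual policy schema.

```python
from dataclasses import dataclass, field

# Hypothetical severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


@dataclass
class Policy:
    approved_formats: set
    trusted_sources: set
    max_severity: str  # highest finding severity that is still allowed


@dataclass
class ScanResult:
    model_format: str
    source: str
    finding_severities: list = field(default_factory=list)


def violations(policy: Policy, scan: ScanResult) -> list:
    """Return human-readable policy violations; an empty list means the model passes."""
    problems = []
    if scan.model_format not in policy.approved_formats:
        problems.append(f"format '{scan.model_format}' is not approved")
    if scan.source not in policy.trusted_sources:
        problems.append(f"source '{scan.source}' is not trusted")
    limit = SEVERITY_ORDER.index(policy.max_severity)
    for severity in scan.finding_severities:
        if SEVERITY_ORDER.index(severity) > limit:
            problems.append(
                f"finding severity '{severity}' exceeds '{policy.max_severity}'"
            )
    return problems


# One policy per environment, with production stricter than development.
POLICIES = {
    "development": Policy(
        {"safetensors", "onnx", "pickle"}, {"huggingface", "internal-registry"}, "medium"
    ),
    "production": Policy({"safetensors", "onnx"}, {"internal-registry"}, "low"),
}

scan = ScanResult("pickle", "huggingface", ["medium"])
dev = violations(POLICIES["development"], scan)
prod = violations(POLICIES["production"], scan)

print(len(dev))   # 0
print(len(prod))  # 3
```

The same scan result passes in development and is blocked in production, which is the behavior per-group policies are meant to give.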

Deployment options

Guardian offers three deployment methods:

- Cloud platform: Managed scanning through the Guardian web console and API

- Local scanner: Lightweight Docker container for CI/CD pipelines and air-gapped environments

- CLI and SDK: The guardian-client Python package (Apache 2.0) provides both command-line and programmatic access

The local scanner is particularly useful for organizations that can’t send model files to external services. It runs as a Docker container and integrates into existing CI/CD workflows.
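A CI/CD gate around a scan command could be sketched as follows. It assumes the exit-code convention described under Getting Started (0 for a passed scan, 1 for a policy violation, 2 for a failure). The function names are hypothetical, and the scan command is passed in by the caller because the exact `guardian-client scan` arguments are not given here; the demo uses a stand-in command so it runs without Guardian installed.

```python
import subprocess
import sys

# Exit-code meanings as described for the guardian-client CLI in this article.
OUTCOMES = {0: "passed", 1: "policy violation", 2: "scan failure"}


def run_gate(command: list) -> str:
    """Run a scan command and translate its exit code into an outcome string."""
    completed = subprocess.run(command)
    return OUTCOMES.get(
        completed.returncode, f"unexpected exit code {completed.returncode}"
    )


def should_deploy(outcome: str) -> bool:
    # Fail closed: anything other than a clean pass blocks the deployment.
    return outcome == "passed"


# Stand-in for the real scan command: a Python process that exits with code 1.
fake_scan = [sys.executable, "-c", "import sys; sys.exit(1)"]
outcome = run_gate(fake_scan)
print(outcome, should_deploy(outcome))  # policy violation False
```

Treating scan failures and unknown exit codes the same as violations keeps a broken scanner from silently letting models through.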

ModelScan is Protect AI’s open-source model scanner. It supports PyTorch, TensorFlow, Keras, Scikit-learn, and XGBoost formats.

Install with pip install modelscan and scan with modelscan -p /path/to/model.pkl. Guardian extends ModelScan with policy enforcement, access controls, and enterprise features.

huntr bug bounty community

Guardian’s threat intelligence comes from huntr, an early AI/ML bug bounty platform. The community includes 17,000+ security researchers who discover vulnerabilities in open-source AI/ML tools.

huntr is one of the world’s five largest CVE Numbering Authorities (CNAs). Vulnerability findings flow back into Guardian’s scanning rules.

Getting Started

Scans can be run from the cloud platform, the CLI (guardian-client scan), or the SDK. The CLI returns exit code 0 for passed scans, 1 for policy violations, and 2 for failures, which makes it easy to integrate into CI/CD gates.

When to use Guardian

Guardian is built for organizations that consume ML models from external sources and need to verify them before deployment.

If your team downloads models from Hugging Face, maintains internal model registries, or purchases models from vendors, Guardian adds a security gate that catches threats traditional tools miss.

The local scanning option makes it practical for air-gapped environments and regulated industries where model files can’t leave the network.

For background on AI supply chain threats, see the AI security guide. For open-source model scanning without the enterprise platform, use ModelScan directly.

For broader AI security covering runtime defense and adversarial attacks, look at HiddenLayer.

For prompt injection detection, see Lakera Guard or LLM Guard. For LLM red teaming, try Garak or Promptfoo.