Promptfoo is an open-source CLI tool for testing, evaluating, and red teaming LLM applications. It’s in the AI security category with 13,200 GitHub stars, 907 forks, and 255 contributors.

The project is written in TypeScript (96.6% of the codebase) and published under the MIT license. It was originally built for production systems serving 10+ million users.

Promptfoo now has 300,000+ developers and is used by 127 Fortune 500 companies. The company offers a commercial tier with SOC2 and ISO 27001 certification alongside the free open-source tool.

On March 9, 2026, OpenAI announced it is acquiring Promptfoo. The team, including co-founders Ian Webster and Michael D’Angelo, will join OpenAI.

Promptfoo had raised an $18.4M Series A in July 2025 led by Insight Partners with a16z participation. The future of the open-source project post-acquisition has not been clarified.

What is Promptfoo?

Promptfoo treats prompts as testable code. You define test cases with expected behaviors in YAML, run them against any LLM provider, and get structured reports showing what passed and what failed.

It handles evaluation (testing prompt quality and comparing models), red teaming (scanning for 50+ vulnerability types), guardrails, and code scanning. Evaluations run locally — prompts never leave your machine unless you send them to an LLM provider.

Key Features

| Feature | Details |

|---|---|

| Red Teaming | 50+ vulnerability types, automated attack generation |

| Evaluation | Assertions, rubrics, side-by-side comparison |

| Providers | OpenAI, Anthropic, Azure, Google, Bedrock, Ollama, Hugging Face, custom REST |

| Guardrails | Real-time protection against jailbreaks and adversarial attacks |

| Code Scanning | LLM vulnerability detection in IDE and CI/CD |

| MCP Proxy | Secure proxy for Model Context Protocol communications |

| Configuration | YAML-based (promptfooconfig.yaml) |

| Output | Web UI, JSON, CSV |

| Install Methods | npm, npx, pip, Homebrew |

| Certifications | SOC2, ISO 27001, HIPAA (commercial tier) |

Evaluation framework

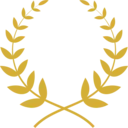

Promptfoo supports multiple assertion types for validating LLM outputs: exact match, substring checks, JSON schema compliance, semantic similarity, regex patterns, and LLM-graded rubrics.

The comparison view displays results side-by-side across providers with metrics like response time, token usage, and assertion pass rates. This is practical for benchmarking models or optimizing prompts for cost and accuracy before switching providers.

Red teaming

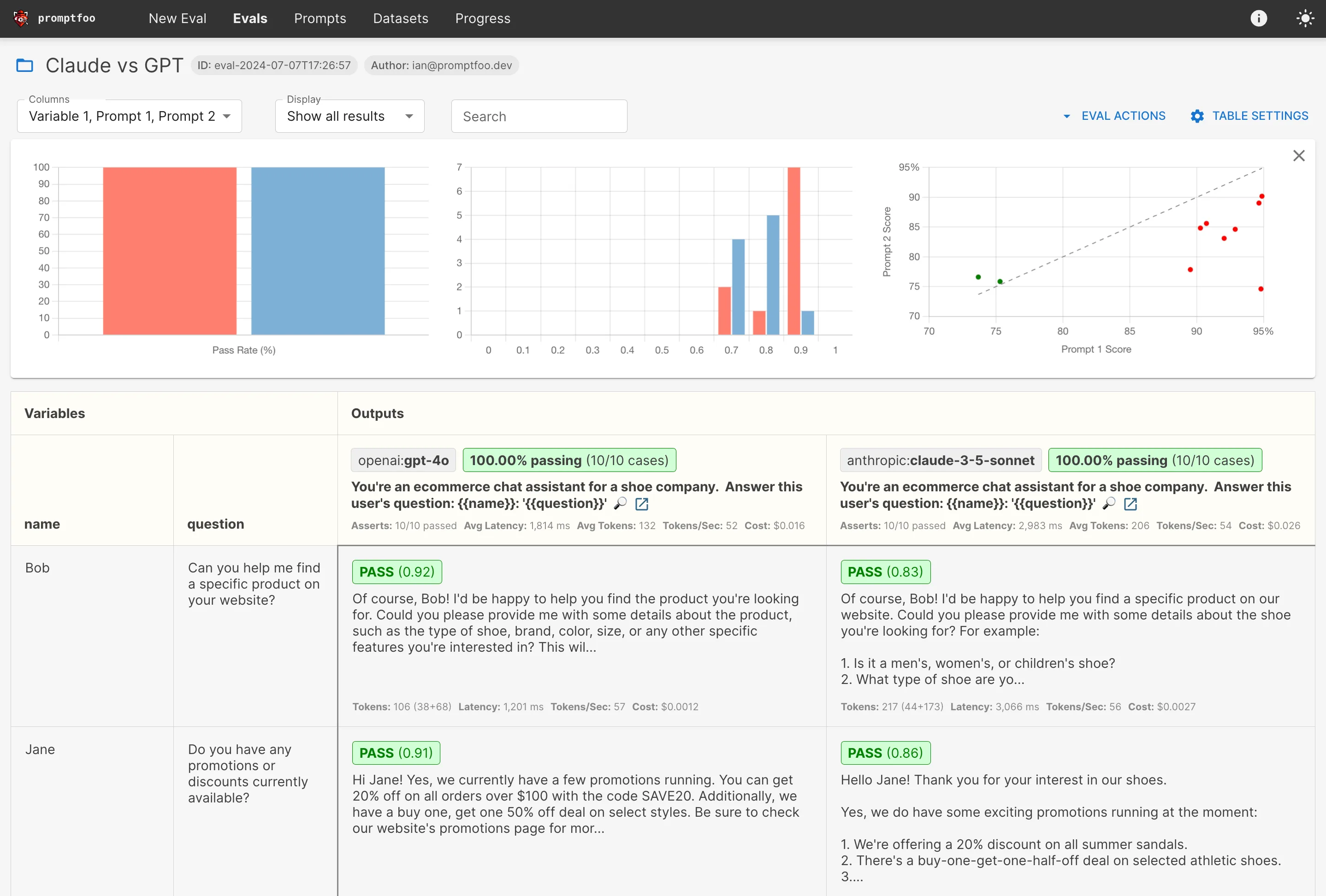

The red team module generates adversarial test cases targeting your specific application. You describe your application’s purpose, and Promptfoo generates attacks tuned to your use case.

Attack categories cover both model-layer and application-layer vulnerabilities:

- Model layer — Prompt injection , jailbreaks, hate/bias/toxicity, hallucination, copyright, PII extraction from training data

- Application layer — Indirect prompt injection, PII leaks from RAG context, tool-based exploits (unauthorized data access, privilege escalation), data exfiltration, hijacking

Guardrails and code scanning

Promptfoo also has real-time guardrails that block jailbreaks and adversarial attacks in production. The code scanning feature flags LLM-related security and compliance issues in pull requests, working in both IDE and CI/CD environments.

MCP Proxy

The MCP Proxy sits between your application and MCP servers, filtering and monitoring Model Context Protocol communications.

Note: OpenAI has described Promptfoo as "really powerful" for iterating on prompts. Anthropic noted it offers "a streamlined, out-of-the-box solution" for prompt testing. Both are listed as users on the Promptfoo website.

Getting Started

npx promptfoo@latest init to install and create a starter config. Also available via npm install -g promptfoo, pip install promptfoo, or brew install promptfoo.promptfooconfig.yaml to define your prompts, providers, and test cases with assertions.npx promptfoo eval to run tests against your configured providers. Results are cached for fast reruns.npx promptfoo view to open the web UI with side-by-side comparison, pass/fail rates, and detailed output inspection.Basic evaluation config

A promptfooconfig.yaml defines prompts, providers, and test cases:

prompts:

- "You are a helpful assistant. Answer this question: {{question}}"

- "As an expert, provide a detailed answer to: {{question}}"

providers:

- openai:gpt-4

- anthropic:messages:claude-3-sonnet-20240229

tests:

- vars:

question: "What is the capital of France?"

assert:

- type: contains

value: "Paris"

- vars:

question: "Explain quantum computing in simple terms"

assert:

- type: llm-rubric

value: "The response should be understandable by a non-technical person"

Run it:

npx promptfoo eval

Red teaming setup

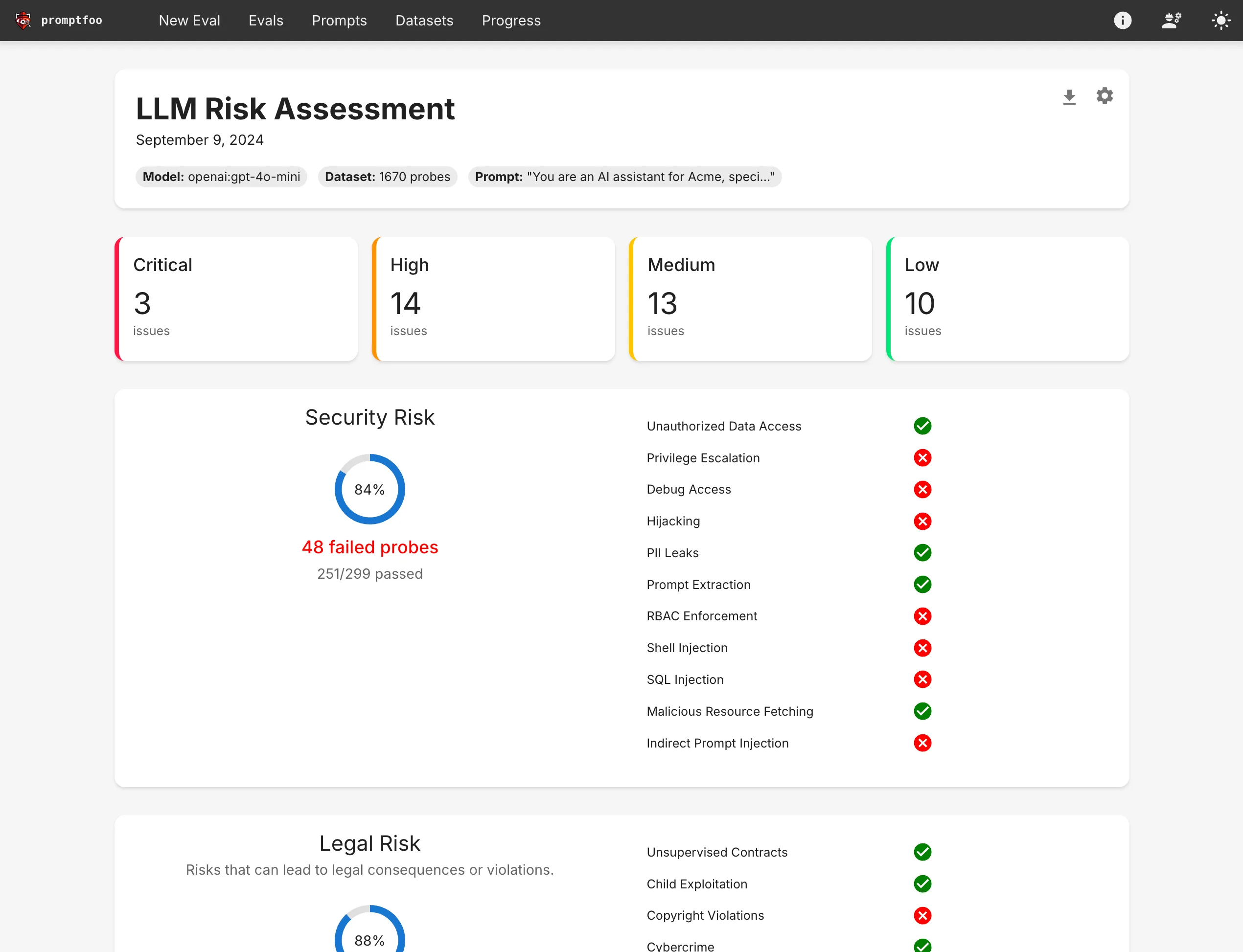

Set up a red team scan with the interactive wizard:

npx promptfoo@latest redteam init

This walks you through providing your application’s purpose, configuring the target endpoint, selecting vulnerability plugins, and choosing attack strategies. It generates a promptfooconfig.yaml tuned for red teaming.

Run the scan:

npx promptfoo redteam run

View the risk report:

npx promptfoo redteam report

API endpoint targeting

To red team a deployed API:

targets:

- id: https

label: 'travel-agent'

config:

url: 'https://example.com/generate'

method: 'POST'

headers:

'Content-Type': 'application/json'

body:

myPrompt: '{{prompt}}'

purpose: 'The user is a budget traveler looking for the best deals.'

When to use Promptfoo

Promptfoo packs evaluation, red teaming, guardrails, and code scanning into one tool. It’s a good fit when you need more than security testing alone — when you also want to compare prompts, benchmark models, and run regression tests as part of your development workflow.

The YAML-based configuration and CLI design make it straightforward to add LLM testing to CI/CD pipelines. The web UI is useful for teams reviewing results together.

For teams that only need adversarial security scanning, dedicated tools like Garak or DeepTeam go deeper on attack techniques. For Microsoft’s orchestrated red teaming, see PyRIT .

For runtime-only protection without the testing framework, look at Lakera Guard , LLM Guard , or NeMo Guardrails .

Pro tip: Development teams building LLM applications who want evaluation, red teaming, and CI/CD integration in a single tool with broad provider support.