Knostic is the first AI security platform built to enforce need-to-know access controls for enterprise large language models (LLMs). It prevents AI tools like Microsoft 365 Copilot, Glean, and Google Gemini from oversharing sensitive corporate data with unauthorized users.

Founded in 2023 by cybersecurity veterans Gadi Evron (former CISO of the Israeli National Digital Authority) and Sounil Yu (creator of the Cyber Defense Matrix), Knostic addresses a problem unique to the enterprise AI era: LLM-based assistants connect to vast corporate data stores and can surface salary data, M&A details, or strategy documents to anyone who asks the right question. Unlike traditional data-level access controls, Knostic enforces policies at the AI inference layer.

Knostic has raised $19.3 million in total funding, including $11 million in March 2025. It is the only startup to win both the RSA Conference 2024 Launch Pad and the Black Hat 2024 Startup Spotlight competitions.

It was also named a Top 10 Finalist in the RSAC 2025 Innovation Sandbox Contest, receiving a $5 million investment from Crosspoint Capital Partners.

Key Features at a Glance

| Feature | Details |
|---|---|
| Need-to-Know Enforcement | Inference-time access policies that control what each LLM response reveals based on user role and context |
| Oversharing Detection | Pre-deployment query simulation that discovers AI data leakage paths before real users hit them |
| Microsoft 365 Copilot | Monitors and controls Copilot access to Teams, SharePoint, OneDrive, and other M365 sources |
| Glean & Gemini | Detects oversharing across Glean enterprise search and Google Gemini Workspace deployments |
| Custom LLM Coverage | Protects internally built AI applications and chatbots with custom policy enforcement |
| Dynamic Redaction | Removes sensitive elements from AI responses while keeping the rest useful |
| Audit Trails | Full logging of queries, responses, and data sources for compliance and regulatory reviews |
| Continuous Monitoring | Re-tests as data sources change, new documents are added, and permissions shift |

Overview

Traditional access controls work at the data level, restricting who can open which files or folders. But enterprise LLMs operate at the knowledge level. They read across thousands of documents and synthesize answers, which means an employee might not have direct access to a confidential file but can still extract its contents by asking the AI assistant a carefully worded question.

Compared to data-level security tools like DLP solutions, Knostic operates one layer higher, at what it calls the “knowledge layer.”

The knowledge layer is the space between static enterprise data and AI-generated answers. Knostic is the first platform designed to secure this layer.
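
To make the gap concrete, here is a toy sketch of how a file-level permission check and an assistant that answers from an index can disagree. Everything in it is hypothetical and for illustration only: the `Document` type, the `open_file` and `naive_assistant` functions, and the sample data are assumptions, not Knostic's or any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    name: str
    text: str
    readers: frozenset[str]  # users allowed to open the file directly


# Hypothetical corpus: one restricted file, one widely readable file.
DOCS = [
    Document("comp-bands.xlsx", "Senior engineer salary band: 150k to 185k",
             frozenset({"hr_lead"})),
    Document("handbook.pdf", "Office hours are 9 to 5",
             frozenset({"hr_lead", "alice"})),
]


def open_file(user: str, name: str) -> str:
    """Data-level control: only listed readers can open the file."""
    doc = next(d for d in DOCS if d.name == name)
    if user not in doc.readers:
        raise PermissionError(f"{user} may not open {name}")
    return doc.text


def naive_assistant(user: str, question: str) -> str:
    """Knowledge-level gap: answers from every indexed document
    and never consults `readers` for the asking user."""
    words = {w for w in question.lower().split() if len(w) >= 4}
    hits = [d.text for d in DOCS if words & set(d.text.lower().split())]
    return " ".join(hits)


try:
    open_file("alice", "comp-bands.xlsx")
except PermissionError as err:
    print("direct access blocked:", err)

# The same user gets the restricted content by asking a question instead.
print(naive_assistant("alice", "What is the engineer salary band?"))
```

In this sketch the file system says no while the assistant says yes, which is the kind of mismatch the knowledge layer is meant to close.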

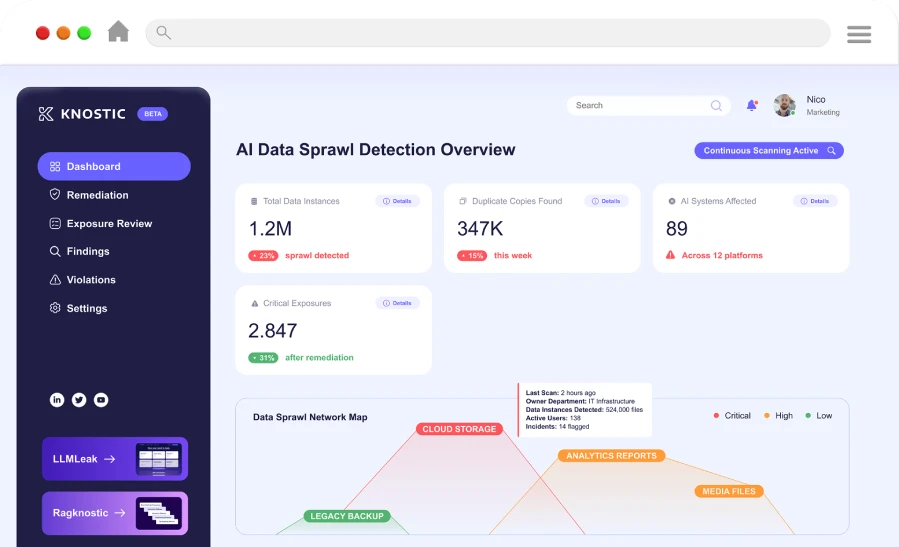

The platform works in three stages: it simulates employee queries to discover oversharing paths before they become incidents, enforces need-to-know policies at inference time, and provides audit trails showing who saw what and why.

Key Features

AI Oversharing Detection

The core challenge: an employee asks their company’s AI assistant “What are the salary ranges for the engineering team?” or “What is our M&A pipeline?” — and gets an answer they should never have seen.

Knostic addresses this through pre-deployment simulation:

- Query simulation — Runs realistic employee queries across enterprise AI assistants to discover oversharing before real users encounter it

- Prompt tracing — Traces each prompt through the system to identify which data sources contribute to responses

- Risk scoring — Ranks oversharing incidents by severity based on data sensitivity and user authorization level

- Continuous scanning — Regularly re-tests as data sources change, new documents are added, and permissions shift
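
Knostic does not publish how it scores findings, so the following is only a minimal sketch of the risk-scoring idea described above, assuming a simple rule: the score is how far a contributing source's sensitivity exceeds the asking user's clearance. The `SENSITIVITY` scale, the `Finding` type, and both functions are hypothetical names introduced for this example.

```python
from dataclasses import dataclass

# Hypothetical sensitivity scale; not taken from Knostic.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}


@dataclass(frozen=True)
class Finding:
    """One simulated query whose answer drew on a given data source."""
    query: str
    user_role: str
    user_clearance: int  # highest sensitivity level the role may see
    source_label: str    # sensitivity label of the contributing source


def risk_score(finding: Finding) -> int:
    """How far the source's sensitivity exceeds the user's clearance."""
    gap = SENSITIVITY[finding.source_label] - finding.user_clearance
    return max(gap, 0)


def rank_findings(findings: list[Finding]) -> list[Finding]:
    """Keep only oversharing findings (score > 0), most severe first."""
    risky = [f for f in findings if risk_score(f) > 0]
    return sorted(risky, key=risk_score, reverse=True)


findings = [
    Finding("What is our M&A pipeline?", "sales", 1, "confidential"),
    Finding("Where is the holiday calendar?", "engineer", 1, "public"),
    Finding("What are the engineering salary ranges?", "engineer", 1, "restricted"),
]
ranked = rank_findings(findings)
for f in ranked:
    print(risk_score(f), f.user_role, f.query)
```

Re-running `rank_findings` on a fresh batch of simulated queries after each permission or content change is one simple way to approximate the continuous-scanning step.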

Need-to-Know Policy Enforcement

Knostic enforces policies at inference time, controlling what the LLM reveals in each response:

- Role-based filtering — Applies policies based on the querying user’s role, department, and clearance level

- Context-aware decisions — Goes beyond static role matching to consider the business context of each query

- Dynamic redaction — Removes sensitive elements from AI responses while keeping the rest of the answer useful

- Policy drift detection — Monitors for changes in data access patterns that could indicate policy violations
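
As a rough illustration of role-based filtering combined with dynamic redaction, the sketch below masks only the sensitive fragments of a response for roles that lack need-to-know. It assumes regex-detectable patterns purely for simplicity. The `Policy` type, the `POLICIES` list, and the `redact` function are hypothetical, and a production system would presumably use far richer detection than regular expressions.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical need-to-know rule: a pattern plus the roles allowed to see it."""
    topic: str
    pattern: re.Pattern
    allowed_roles: frozenset[str]


# Assumed example policies: dollar amounts and deal code names.
POLICIES = [
    Policy("compensation", re.compile(r"\$\d[\d,]*(?:\.\d+)?"),
           frozenset({"hr", "finance"})),
    Policy("m&a", re.compile(r"Project \w+"),
           frozenset({"executive"})),
]


def redact(response: str, role: str) -> str:
    """Mask fragments the role may not see; leave the rest of the answer intact."""
    for policy in POLICIES:
        if role not in policy.allowed_roles:
            response = policy.pattern.sub(f"[redacted: {policy.topic}]", response)
    return response


answer = "The senior engineer band tops out at $185,000 and the team sits in Austin."
print(redact(answer, "engineer"))
print(redact(answer, "hr"))
```

The design point is that the answer stays useful: only the fragment covered by a policy is replaced, and a role with need-to-know sees the response unchanged.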

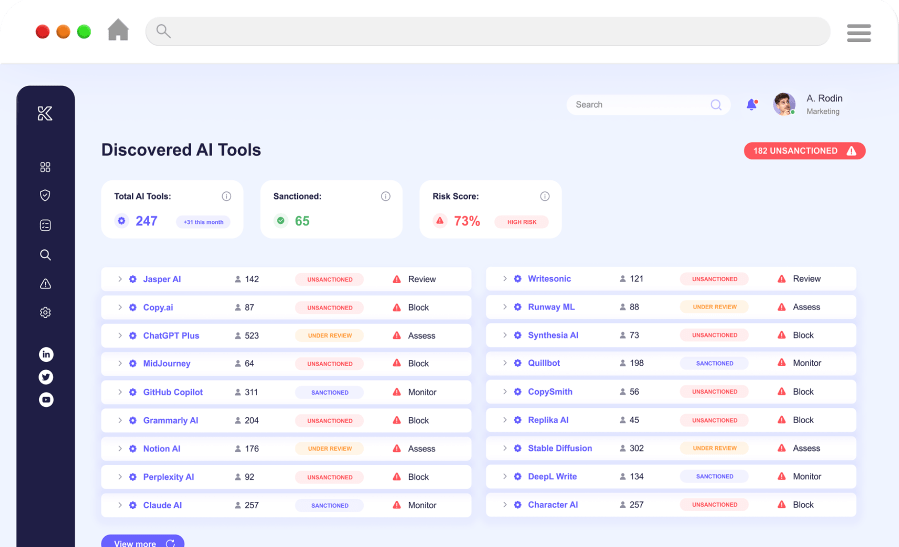

Enterprise AI Tool Coverage

Knostic provides visibility and protection across major enterprise AI platforms:

- Microsoft 365 Copilot — Monitors data access from Teams, SharePoint, OneDrive, and other M365 sources

- Glean — Detects oversharing in Glean’s enterprise search and assistant

- Google Gemini — Covers Gemini deployments within Google Workspace

- Custom LLM deployments — Protects internally built AI applications and chatbots

Compliance and Auditing

Every AI interaction generates an audit record:

- Full logging of queries, responses, and contributing data sources

- “Who saw what, why” tracking for compliance requirements

- Exportable audit trails for regulatory reviews

- Integration with existing GRC and compliance workflows
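
Knostic's actual log schema is not public, so the sketch below only illustrates what a "who saw what, why" record could contain and how it might be exported as one JSON line per interaction. The `AuditRecord` fields and the `to_json_line` helper are assumptions made for this example.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """Hypothetical audit entry for a single AI interaction."""
    user: str
    role: str
    query: str
    response: str
    sources: list[str]  # data sources that contributed to the response
    decision: str       # e.g. "allowed", "redacted", "blocked"
    reason: str         # the "why" behind the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json_line(record: AuditRecord) -> str:
    """Serialize one record as a JSON line suitable for export."""
    return json.dumps(asdict(record), sort_keys=True)


record = AuditRecord(
    user="alice",
    role="engineer",
    query="What are the salary ranges for the engineering team?",
    response="The senior engineer band tops out at [redacted: compensation].",
    sources=["comp-bands.xlsx"],
    decision="redacted",
    reason="role lacks need-to-know for compensation data",
)
line = to_json_line(record)
print(line)
```

A line-oriented format like this is easy to hand to an existing GRC or SIEM pipeline, though the right export format depends on the tools already in place.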

Use Cases

Microsoft 365 Copilot rollouts — Before enabling Copilot for all employees, Knostic simulates queries to discover what sensitive data Copilot can surface and to whom.

Regulated industries — Financial services, healthcare, and government organizations need to prove their AI deployments enforce data access policies and maintain audit trails.

M&A and sensitive operations — Companies running acquisitions, restructurings, or other confidential processes need to ensure AI assistants do not leak deal details to unauthorized employees.

AI governance programs — Security teams building AI governance frameworks use Knostic to enforce need-to-know controls as a base layer.

Strengths & Limitations

Strengths:

- Addresses a problem no traditional security tool covers: the gap between data access controls and AI-generated knowledge

- Pre-deployment simulation finds oversharing risks before real users are affected

- Founded by recognized cybersecurity leaders with deep enterprise experience

- Covers multiple enterprise AI platforms (Copilot, Glean, Gemini, custom LLMs)

- Inference-time enforcement means policies apply even when underlying data permissions are misconfigured

Limitations:

- Young company (founded 2023) still building out its platform and customer base

- Focused specifically on enterprise AI oversharing, not a general-purpose AI security tool

- Requires integration with each AI platform for full coverage

- Effectiveness depends on accurate role and policy definitions; garbage-in-garbage-out applies

Getting Started

Knostic pricing

Knostic does not publish a public pricing page. The platform is sold through enterprise sales with quotes scoped to deployment size, the AI tools in scope (Microsoft 365 Copilot, Glean, Gemini, custom LLMs), and how many users and data sources sit behind each integration. There is no advertised free or self-serve tier.

I do not publish dollar amounts for sales-gated tools. To get a quote, request a demo at knostic.ai and be prepared to share which AI assistants you operate, your target user volume, the integration depth you need (read-only audit vs. inference-time policy enforcement), and any compliance frameworks (SOC 2, ISO 27001, regulated-industry rules) the deployment must support.

How Knostic Compares

Knostic focuses specifically on need-to-know access control for enterprise LLMs. Unlike Lakera Guard or LLM Guard, which focus on runtime prompt injection detection and content filtering, Knostic targets the data oversharing problem that emerges when LLMs connect to corporate knowledge bases.

Where LLM red teaming tools like Garak, Promptfoo, or PyRIT test for vulnerabilities before deployment, Knostic pairs its own pre-deployment simulation with continuous enforcement during production use. For custom guardrail logic in LLM applications, explore NeMo Guardrails.

For broader AI model security covering adversarial attacks and model scanning, consider HiddenLayer or Protect AI Guardian. For AI observability and monitoring, see Arthur AI or CalypsoAI.

For a broader overview of AI security threats and tools, see the AI security tools category page.