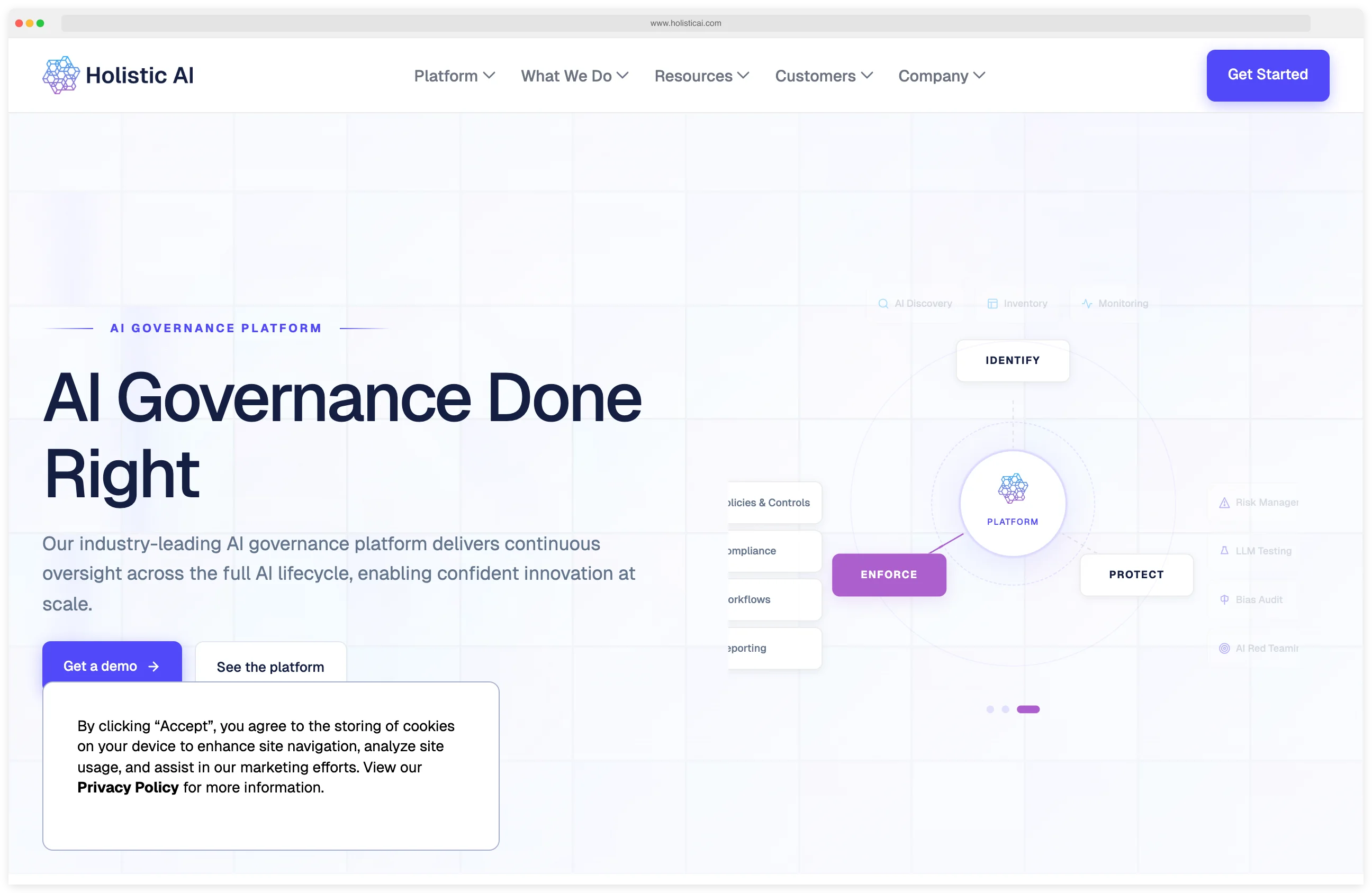

Holistic AI is an enterprise AI governance platform that unifies AI system discovery, automated testing, continuous monitoring, and regulatory compliance into a single system.

The platform provides 100+ automated tests — covering red teaming, bias detection, hallucination testing, and adversarial probing — with built-in compliance mapping to the EU AI Act, NIST AI RMF, and ISO 42001. It is listed in the AI security category.

The platform addresses a real challenge: as AI adoption grows, organizations struggle to keep track of all their AI systems, test them consistently, and prove compliance with shifting regulations.

What is Holistic AI?

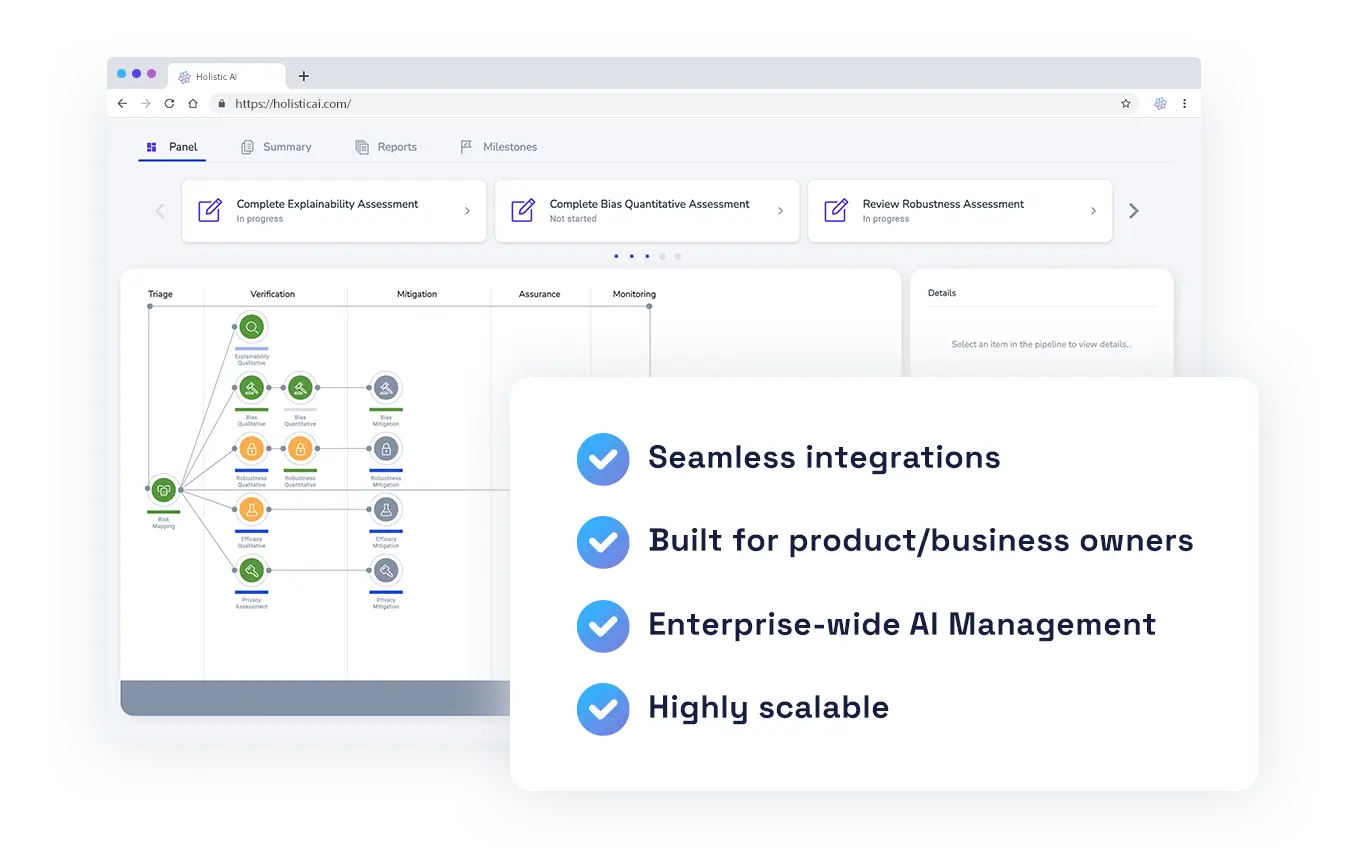

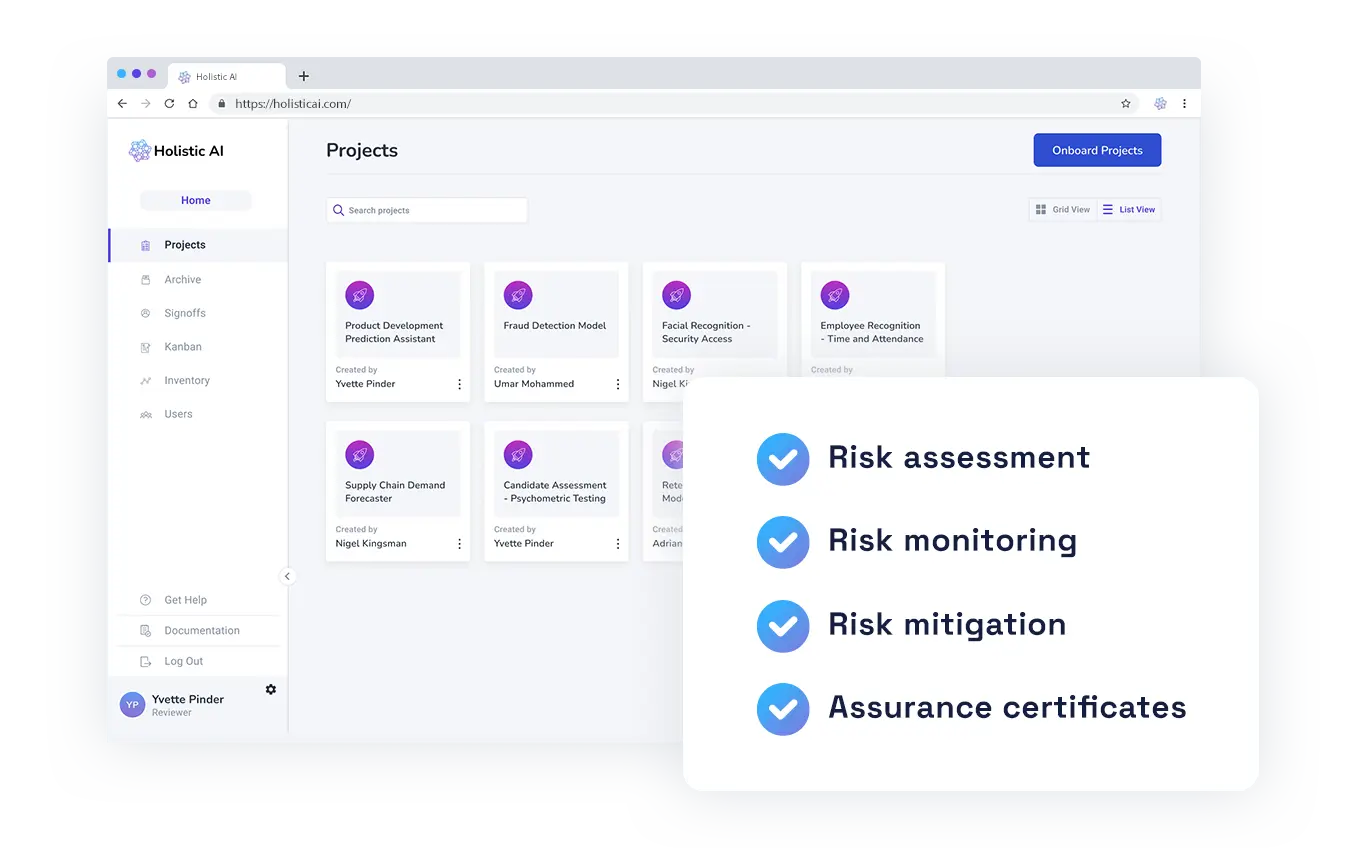

Holistic AI operates across four stages of AI governance: Connect (integrate with existing tech environments), Identify (maintain a continuously updated AI inventory), Protect (enforce guardrails through automated testing and monitoring), and Enforce (align AI initiatives with business priorities and regulatory requirements).

The AI discovery engine is often the starting point. Many organizations do not have a complete inventory of their AI systems — shadow AI, unclear ownership, and unmanaged data flows create blind spots. Holistic AI automatically identifies and catalogs these systems, giving all subsequent governance activities a solid foundation.

What are Holistic AI’s key features?

| Feature | Details |
|---|---|
| AI Discovery | Shadow AI detection, ownership mapping, data flow analysis |
| Test Suite | 100+ automated tests across safety, bias, security, privacy, robustness |
| Red Teaming | Dynamic adversarial prompts with static and dynamic test generation |
| Bias Detection | Fairness testing across demographic subgroups with quantified metrics |
| Hallucination Testing | Detects factual inaccuracies, fabrications, and inconsistencies |
| Safety Metric | Defense Success Rate (DSR): proportion of safe responses to total evaluated |
| Compliance | EU AI Act, NIST AI RMF, ISO 42001 mapping with automated evidence |
| Policy Enforcement | Policy-as-code with deployment gates, approvals, kill switches, guardian agents |
| Monitoring | Continuous drift detection, risk intelligence, workflow tracing |
| Audit Trail | Automated evidence logs and compliance reporting |

Red Teaming and Adversarial Testing

Holistic AI’s red teaming goes beyond static test suites. The platform supplements fixed adversarial prompts with dynamically generated prompts based on specified keywords, topics, and themes. These generated prompts simulate edge cases, biases, misinformation, and adversarial inputs to find vulnerabilities.

The platform tests four categories of prompts against the target LLM and classifies each response as SAFE or UNSAFE. For every classification, it provides an explanation of why the response was flagged and actionable insights on how to improve the model’s Defense Success Rate relative to other foundation models.
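To make the Defense Success Rate concrete, here is a minimal sketch of the arithmetic, assuming each evaluated response already carries a SAFE or UNSAFE label. The function name `defense_success_rate` and the sample labels are hypothetical illustrations, not part of Holistic AI's API.

```python
from collections import Counter

def defense_success_rate(labels):
    """Return the share of responses labeled SAFE among all evaluated responses."""
    if not labels:
        raise ValueError("no evaluated responses")
    counts = Counter(label.upper() for label in labels)
    unknown = set(counts) - {"SAFE", "UNSAFE"}
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    return counts["SAFE"] / len(labels)

# Hypothetical labels for four red-team prompts: three safe, one unsafe.
labels = ["SAFE", "UNSAFE", "SAFE", "SAFE"]
print(f"DSR = {defense_success_rate(labels):.2f}")
```

A higher value means the model deflected a larger share of the adversarial prompts it was tested against.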

This approach means red teaming is not a one-time exercise but a continuous process that adapts to new threat patterns and evolving model behavior.

Compliance and Policy Enforcement

The compliance engine maps AI risk assessments to specific regulatory requirements. For the EU AI Act, there is a risk calculator that classifies AI systems by risk level, an automated readiness assessment, and continuous monitoring against the regulation’s requirements — particularly relevant as high-risk requirements take effect from August 2026.

Policy enforcement uses a policy-as-code approach: organizations define rules aligned with internal standards and chosen regulations, and the platform enforces them through deployment gates (block non-compliant systems from production), approval workflows, kill switches for emergency shutdowns, and guardian agents for runtime guardrails.
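As an illustration of the deployment-gate idea, the sketch below expresses two rules as code and blocks a release when either fails. Everything here is an assumption made for the example: the `Policy`, `SystemStatus`, and `gate` names, the fields, and the thresholds are hypothetical and do not reflect how Holistic AI defines or stores policies.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SystemStatus:
    name: str
    risk_level: str        # e.g. "minimal", "limited", "high"
    dsr: float             # Defense Success Rate from the latest test run
    owner_approved: bool   # outcome of the approval workflow

@dataclass(frozen=True)
class Policy:
    min_dsr: float                     # hypothetical minimum acceptable DSR
    approval_required_for: frozenset   # risk levels that need sign-off

def gate(system, policy):
    """Return (allowed, reasons); deployment is blocked if any rule fails."""
    reasons = []
    if system.dsr < policy.min_dsr:
        reasons.append(
            f"DSR {system.dsr:.2f} is below the minimum {policy.min_dsr:.2f}"
        )
    if system.risk_level in policy.approval_required_for and not system.owner_approved:
        reasons.append(f"{system.risk_level}-risk system lacks approval")
    return (not reasons, reasons)

policy = Policy(min_dsr=0.95, approval_required_for=frozenset({"high"}))
candidate = SystemStatus("resume-screener", "high", dsr=0.91, owner_approved=False)
allowed, reasons = gate(candidate, policy)
print("deploy" if allowed else "blocked", reasons)
```

Returning the list of failed rules alongside the decision keeps the gate auditable, since the same reasons can be written to an evidence log.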

AI Discovery

The discovery engine tackles a basic governance problem: you cannot govern what you cannot see. Many organizations have AI systems deployed across teams and departments without centralized visibility. The discovery function scans the technology environment to identify AI systems, map their ownership, catalog data flows, and classify risk levels.

This inventory becomes the foundation for all governance activities — testing, monitoring, and compliance reporting all reference back to the central AI inventory.
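One way to picture such an inventory is as a registry keyed by system name, where each record holds an owner, a risk level, and data flows. The sketch below is a hypothetical data model for illustration only; the `AISystem` and `Inventory` names and fields are assumptions, not the platform's schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class AISystem:
    name: str
    owner: Optional[str]   # None models unclear ownership
    risk_level: str        # e.g. "minimal", "limited", "high"
    data_flows: List[str] = field(default_factory=list)

class Inventory:
    """Central registry that testing, monitoring, and reporting can query."""

    def __init__(self) -> None:
        self._systems: Dict[str, AISystem] = {}

    def register(self, system: AISystem) -> None:
        # Re-registering a name overwrites the record, keeping one entry per system.
        self._systems[system.name] = system

    def unowned(self) -> List[str]:
        return sorted(s.name for s in self._systems.values() if s.owner is None)

    def by_risk(self, level: str) -> List[str]:
        return sorted(s.name for s in self._systems.values() if s.risk_level == level)

inventory = Inventory()
inventory.register(AISystem("support-chatbot", "cx-team", "limited", ["crm -> llm"]))
inventory.register(AISystem("resume-screener", None, "high", ["ats -> model"]))
print(inventory.unowned())       # systems with no recorded owner
print(inventory.by_risk("high")) # systems classified as high risk
```

Queries like `unowned()` surface the ownership gaps described above, and `by_risk()` gives downstream testing a list of systems to prioritize.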

How do I get started with Holistic AI?

How much does Holistic AI cost?

Holistic AI does not publish pricing. The platform uses custom enterprise quotes scoped to deployment size, the number of AI systems under management, and the compliance frameworks (EU AI Act, NIST AI RMF, ISO/IEC 42001) the buyer needs to map against. There is no advertised free or self-serve tier.

I do not publish dollar amounts for sales-gated tools (see the pricing rule in our methodology). To get a quote, request a demo from holisticai.com and prepare to share AI inventory size, target frameworks, and required modules (discovery, testing, monitoring, policy-as-code). Procurement timelines for enterprise governance platforms typically run weeks rather than days.

When to Use Holistic AI

Holistic AI is built for organizations that need to govern AI at scale — not just secure individual models, but keep visibility, compliance, and control across an entire AI portfolio.

It is particularly relevant for organizations preparing for EU AI Act compliance (high-risk requirements from August 2026), enterprises with distributed AI adoption where shadow AI and unclear ownership create governance gaps, and regulated industries (financial services, healthcare, public sector) that need audit-ready evidence of AI governance.

How Holistic AI Compares

Holistic AI occupies the governance and compliance layer of the AI security landscape. It is broader than tools focused solely on prompt injection or LLM firewalling, and more compliance-oriented than pure observability platforms.

For model-level observability with bias detection and explainability, see Arthur AI. For runtime prompt injection defense, consider Lakera Guard or Prompt Security.

For open-source LLM vulnerability scanning and red teaming, look at Garak, Augustus, or DeepTeam. For AI red teaming specifically, see Mindgard.

For a broader overview of AI security tools, see the AI security tools category page.