HiddenLayer AISec is an enterprise AI security platform built specifically for ML model security — covering supply chain scanning, runtime defense, and adversarial red teaming across the full model lifecycle, from training to production deployment.

Unlike traditional cybersecurity tools, which were designed for code and infrastructure, HiddenLayer operates on model artifacts and inference behaviors without requiring access to weights, training data, or prompts.

HiddenLayer was co-founded in 2022 by Chris “Tito” Sestito, Tanner Burns, and James Ballard. Sestito spent years leading threat research at Cylance, where attackers exploited the company’s Windows executable AI model using an inference attack. That breach — which let binary files evade detection across Cylance’s entire customer base — became the founding motivation.

In September 2023, HiddenLayer raised $50M in Series A funding led by M12 (Microsoft’s Venture Fund) and Moore Strategic Ventures, with participation from Booz Allen Ventures, IBM Ventures, and Capital One Ventures.

What is HiddenLayer?

HiddenLayer AISec is an ML security platform designed for the threats that traditional cybersecurity tools cannot address — adversarial attacks on model inference, backdoors embedded in model weights, and supply chain compromise through poisoned model artifacts. The AISec Platform 2.0, unveiled in April 2025 ahead of RSAC, covers four areas: AI discovery, supply chain security, runtime defense, and attack simulation.

The platform is model-agnostic and agentless — no access to model weights, training data, or prompts required. HiddenLayer’s research team has disclosed 48+ CVEs in ML frameworks such as PyTorch and TensorFlow, and holds 25 granted patents (with 56 pending) in adversarial detection, model protection, and AI threat analysis.

What are HiddenLayer AISec’s key features?

| Feature | Details |
|---|---|
| ModelScanner | Scans 35+ formats (PyTorch, TensorFlow, ONNX, Keras, GGUF, pickle, safetensors) for malware, tampering, and backdoors |
| AI Discovery | Shadow AI detection across cloud and on-prem environments |
| Runtime Defense | Blocks adversarial attacks, prompt injection, and inference manipulation in real time |
| Attack Simulation | Continuous adversarial testing aligned with MITRE ATLAS |
| Model Genealogy | Tracks model lineage: training, fine-tuning, and modification history |
| AIBOM | Auto-generated AI Bill of Materials for every scanned model (components, datasets, dependencies) |
| Threat Intelligence | Aggregates data from Hugging Face and community sources to surface emerging ML security risks |
| Compliance | Aligns with NIST AI RMF, MITRE ATLAS, ISO 42001, EU AI Act |

ModelScanner

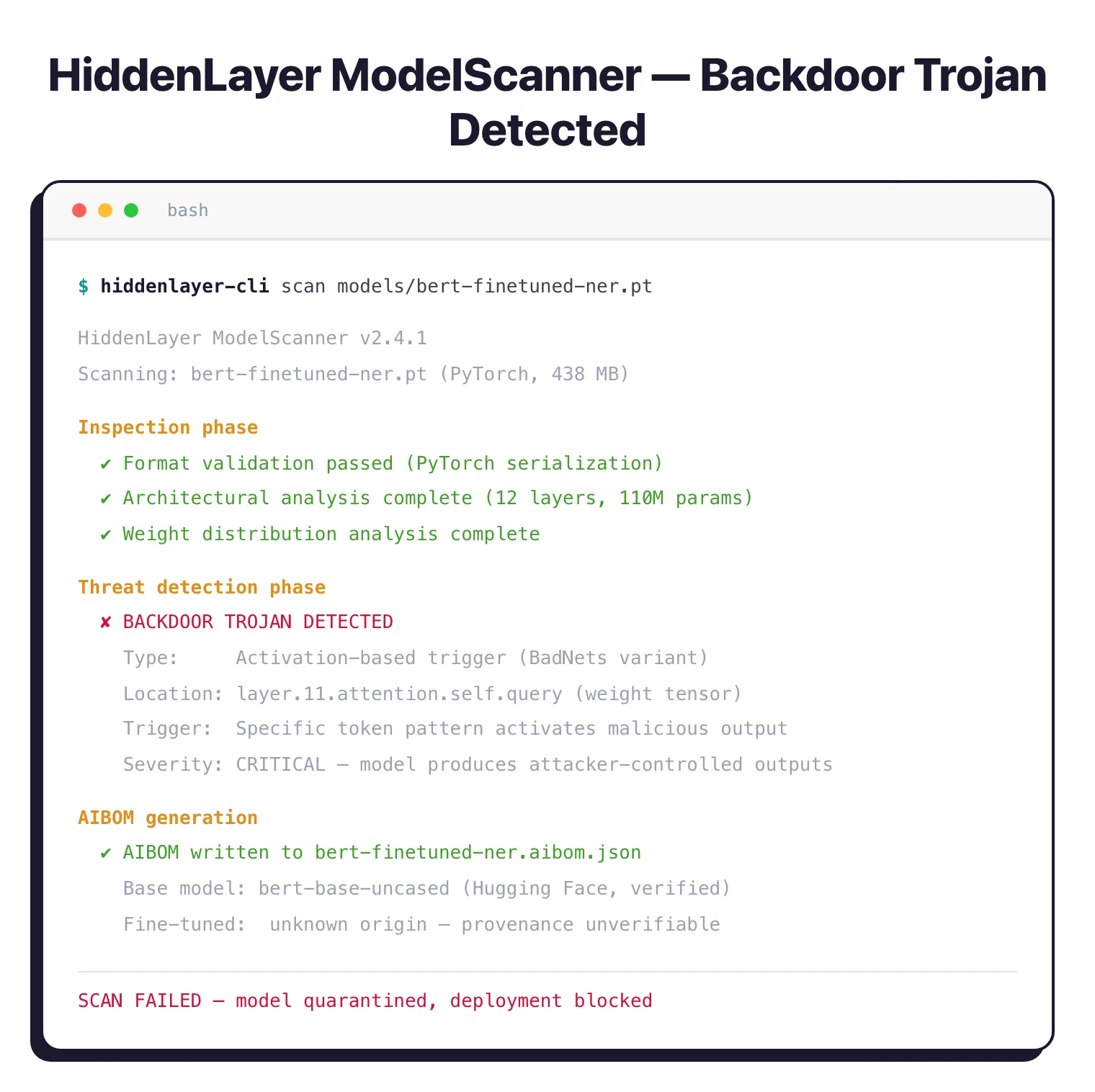

ModelScanner is HiddenLayer’s pre-production ML model scanner. It analyzes 35+ model formats, including PyTorch, TensorFlow, ONNX, Keras, GGUF, pickle, and safetensors, for malicious code injection, pickle deserialization exploits, and architectural backdoors (trojans embedded in model weights). Traditional antivirus and SCA tools do not inspect model artifacts, so ModelScanner covers a part of the supply chain security stack that those tools leave open.
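
To make the pickle risk concrete: a pickle stream can name any importable callable, and unpickling will call it. The sketch below is a minimal static check that walks the opcode stream with Python's standard `pickletools` module and flags imports from a small deny-list, without ever loading the file. It illustrates the general technique only and is not HiddenLayer's implementation; the function name `find_suspicious_imports`, the deny-list, and the demo payload are assumptions made for this example.

```python
import os
import pickle
import pickletools
from typing import List

# Illustrative deny-list (an assumption, far from exhaustive): modules whose
# appearance in a pickle stream usually means code will run on load.
SUSPICIOUS_MODULES = {"os", "posix", "nt", "subprocess", "builtins", "sys", "socket", "runpy"}


def find_suspicious_imports(data: bytes) -> List[str]:
    """Return 'module.name' references to deny-listed modules, without unpickling."""
    findings = []
    recent_strings = []  # string operands seen so far, needed for STACK_GLOBAL
    for opcode, arg, _pos in pickletools.genops(data):
        if opcode.name == "GLOBAL":
            # Older protocols: the operand is "module name" joined by a space.
            module, _, name = arg.partition(" ")
        elif opcode.name == "STACK_GLOBAL":
            # Protocol 4+: module and name were pushed as strings earlier.
            # Heuristic: assume they are the two most recent string operands.
            if len(recent_strings) < 2:
                continue
            module, name = recent_strings[-2], recent_strings[-1]
        else:
            if isinstance(arg, str):
                recent_strings.append(arg)
            continue
        if module.split(".")[0] in SUSPICIOUS_MODULES:
            findings.append(f"{module}.{name}")
    return findings


class _Malicious:
    """Demo payload: unpickling this object would call os.system."""

    def __reduce__(self):
        return (os.system, ("echo pwned",))


# Serializing is safe; only pickle.loads() would run the command.
malicious_bytes = pickle.dumps(_Malicious())
benign_bytes = pickle.dumps({"layer1": [0.1, 0.2]})

print(find_suspicious_imports(malicious_bytes))
print(find_suspicious_imports(benign_bytes))
```

A deny-list like this is easy to evade (for example through memoized strings or less obvious modules), which is part of why dedicated scanners go well beyond it.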

The scanner integrates into CI/CD pipelines and MLOps platforms via lightweight containers, and works with registries including Hugging Face, MLflow, SageMaker, and Databricks Unity Catalog.

Model Genealogy and AIBOM

An AIBOM (AI Bill of Materials) is the ML equivalent of a software SBOM: a machine-readable inventory of a model’s components, training datasets, fine-tuning history, and dependencies. HiddenLayer auto-generates an AIBOM for every scanned model, giving security and compliance teams a traceable record for supply chain risk assessment and licensing enforcement.
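
As a rough illustration of what "machine-readable inventory" can mean, the sketch below builds a small AIBOM-style record and serializes it to JSON. The schema is hypothetical: the `AIBOMRecord` class, its field names, and the sample values are assumptions for this example, not HiddenLayer's output format. Real deployments would more likely follow a published standard such as CycloneDX's ML-BOM.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List, Optional


@dataclass
class AIBOMRecord:
    """Hypothetical AIBOM entry; field names are illustrative only."""
    model_name: str
    model_format: str
    sha256: str                      # content hash that pins the exact artifact
    base_model: Optional[str] = None
    datasets: List[str] = field(default_factory=list)
    dependencies: Dict[str, str] = field(default_factory=dict)
    license_id: Optional[str] = None


def build_record(name: str, fmt: str, artifact: bytes, **metadata) -> AIBOMRecord:
    """Hash the artifact bytes and attach whatever metadata is known."""
    digest = hashlib.sha256(artifact).hexdigest()
    return AIBOMRecord(model_name=name, model_format=fmt, sha256=digest, **metadata)


# Placeholder bytes stand in for a real model file.
record = build_record(
    "sentiment-classifier",
    "safetensors",
    b"placeholder model bytes",
    base_model="example-org/base-encoder",
    datasets=["example-reviews-v1"],
    dependencies={"torch": "2.3.0"},
    license_id="apache-2.0",
)

aibom_json = json.dumps(asdict(record), indent=2, sort_keys=True)
print(aibom_json)
```

The content hash is what ties the inventory to one specific artifact: if the model file changes, the recorded `sha256` no longer matches.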

Model Genealogy, introduced in AISec Platform 2.0, extends this by tracking how a model was trained, fine-tuned, and modified over time. That history is the audit-trail evidence that regulators and governance frameworks such as ISO 42001 and the EU AI Act require.
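
One common way to make a lineage record usable as audit evidence is to chain entries by hash, so that editing any earlier entry invalidates the ones after it. The sketch below shows that generic idea. It is not a description of how Model Genealogy works internally, and the helper names `append_event` and `verify_chain`, along with the event fields, are assumptions for illustration.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_body(body: Dict) -> str:
    """Deterministic SHA-256 over the JSON form of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_event(chain: List[Dict], action: str, details: Dict) -> List[Dict]:
    """Return a new chain with one more lineage event linked to the last one."""
    prev_hash = chain[-1]["entry_hash"] if chain else GENESIS_HASH
    body = {"action": action, "details": details, "prev_hash": prev_hash}
    return chain + [{**body, "entry_hash": _hash_body(body)}]


def verify_chain(chain: List[Dict]) -> bool:
    """Check every entry's own hash and its link to the previous entry."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        body = {key: entry[key] for key in ("action", "details", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_body(body):
            return False
        prev_hash = entry["entry_hash"]
    return True


lineage: List[Dict] = []
lineage = append_event(lineage, "trained", {"dataset": "example-reviews-v1"})
lineage = append_event(lineage, "fine_tuned", {"dataset": "example-support-tickets"})
lineage = append_event(lineage, "quantized", {"precision": "int8"})

# Simulate someone rewriting history in the middle of the record.
tampered = [dict(entry) for entry in lineage]
tampered[1]["details"] = {"dataset": "unknown"}

print(verify_chain(lineage), verify_chain(tampered))
```

The untouched chain verifies and the tampered copy does not, because the rewritten entry no longer matches its stored hash.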

Attack Simulation

The red teaming engine runs continuous adversarial testing aligned with the MITRE ATLAS framework, probing models for weaknesses in robustness, data handling, and system integration before attackers find them.

Platform integrations

HiddenLayer integrates with major MLOps and cloud platforms:

- AWS — SageMaker model registry integration

- Databricks — Unity Catalog scanning

- Hugging Face — Continuous monitoring of model repositories

- MLflow — Automatic scanning of registered models

- Microsoft Azure — Available on Azure Marketplace

- CrowdStrike — Listed on CrowdStrike Marketplace

How do I get started with HiddenLayer AISec?

HiddenLayer pricing

HiddenLayer does not publish a public pricing page. The AISec Platform is sold through enterprise sales with quotes scoped to model count, deployment size, and which modules (Discovery, ModelScanner, Runtime Security, Attack Simulation) the buyer needs. Listings on Azure Marketplace and CrowdStrike Marketplace route through the same enterprise procurement.

I do not publish dollar amounts for sales-gated tools. To get a quote, request a demo from hiddenlayer.com or start the procurement flow on Azure Marketplace. Be ready to share the number of models in scope, target environments (cloud, on-prem, hybrid), and which compliance frameworks (NIST AI RMF, ISO/IEC 42001, EU AI Act) drive the requirements.

When to use HiddenLayer

HiddenLayer fits enterprises that rely on ML models for business-critical applications and need security controls that traditional tools don’t provide. It’s a good match for organizations pulling models from public repositories like Hugging Face, running customer-facing AI, or operating in regulated industries where AI governance is a hard requirement.

HiddenLayer alternatives

HiddenLayer’s focus is the model-artifact and runtime-inference layer: scanning ML model files, defending production inference, and red-teaming with MITRE ATLAS coverage. When the threat model points elsewhere, these are the closest alternatives:

- Protect AI Guardian — Built on the open-source ModelScan project, now part of Palo Alto Networks (acquired 2025) and integrated into the Prisma AIRS portfolio. Better when an open-source scanner core or Palo Alto procurement is preferred.

- Lakera Guard — A managed prompt-injection and PII protection API, now operated by Cisco (acquisition completed 2025). Pick Lakera when the wedge is runtime LLM input/output filtering rather than model-file scanning.

- Garak — NVIDIA’s open-source LLM vulnerability scanner with 50+ probes. Use Garak for offline red teaming of LLM endpoints when you do not need ModelScanner’s binary-artifact analysis.

- Holistic AI — Governance-and-compliance-first platform with EU AI Act, NIST AI RMF, and ISO/IEC 42001 mapping. Pick Holistic AI when audit-ready evidence and AI inventory matter more than runtime inference defense.

- Mindgard — A continuous AI red teaming platform with strong MITRE ATLAS alignment. Better when ongoing offensive testing is the headline rather than a unified discovery-scan-runtime stack.

For a wider catalog, the AI security tools hub groups these by sub-category (model scanning, runtime defense, governance, red teaming).