Guardrails AI is an open-source Python framework for validating LLM inputs and outputs using composable validators from Guardrails Hub, covering risks like toxicity, PII leaks, hallucinations, and bias. It is listed in the AI security category with 6.6k GitHub stars and 561 forks.

The framework is Apache 2.0 licensed and maintained by the Guardrails AI team. The latest release is v0.9.2 (March 2026). The project also offers a partnership course with Andrew Ng on building production-ready, failure-resistant AI applications.

Guardrails AI follows a dual model: a free open-source core for self-hosting, and Guardrails Pro as a commercial managed service with hosted validation, observability, and enterprise support. The project has recently evolved with a new product called Snowglobe for synthetic data generation and dynamic evaluation datasets.

What is Guardrails AI?

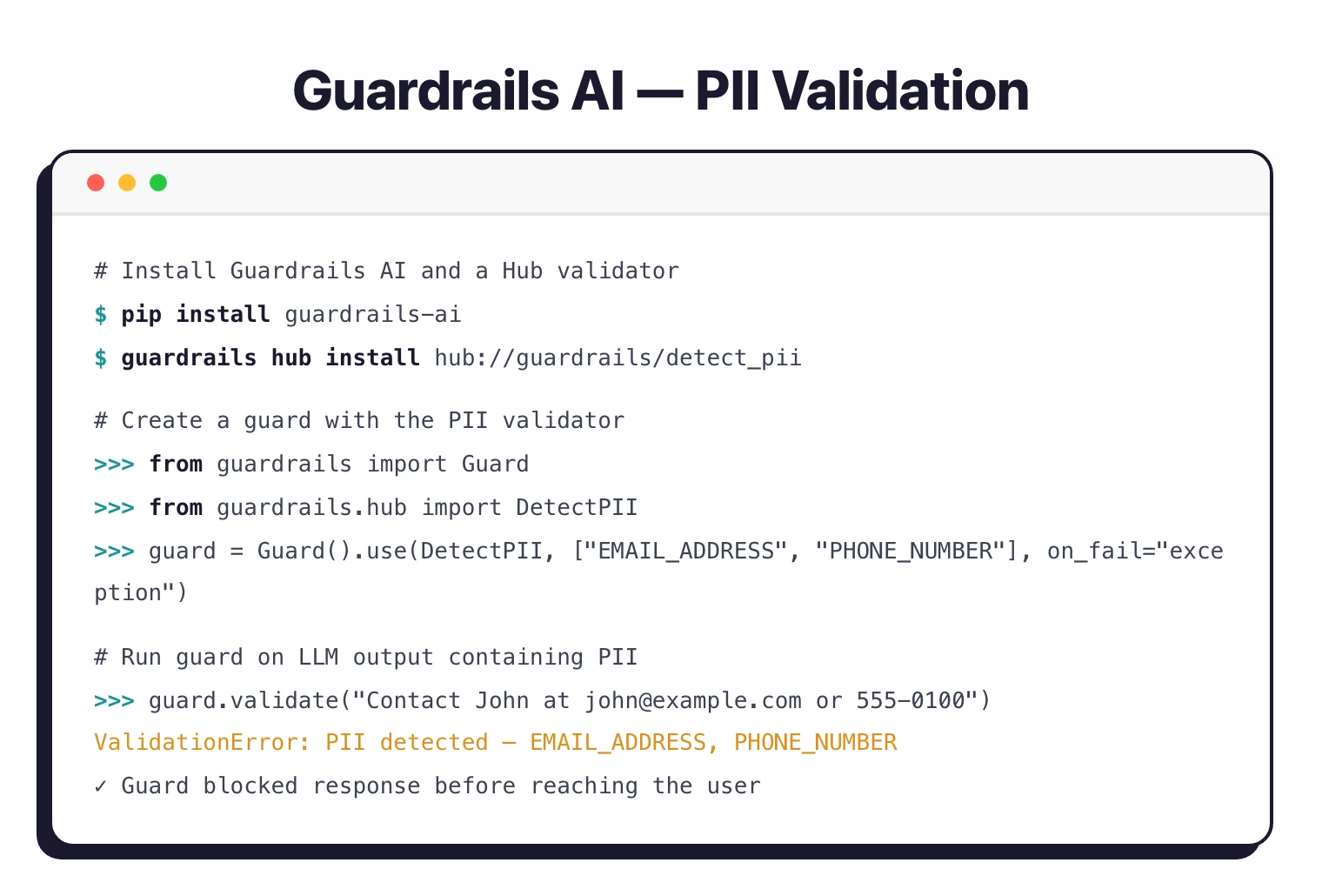

Guardrails AI intercepts LLM inputs and outputs, running configurable validation checks called “validators” to catch risks before they reach users. The design principle is composability — individual validators handle specific risks, and multiple validators combine into guards that run checks together.

The Guardrails Hub is the validator library. It contains pre-built validators covering toxicity detection, PII anonymization, hallucination detection, profanity filtering, bias detection, logical consistency checks, and more. Each validator is independently testable and deployable, and the hub continues to grow with community contributions.

Beyond safety, the framework handles structured data generation. When you need an LLM to produce valid JSON, XML, or data matching a specific schema, Guardrails AI validates the output structure and re-prompts the model when validation fails. This cuts down on the brittle parsing code that usually surrounds LLM integrations.

What are Guardrails AI’s key features?

| Feature | Details |

|---|---|

| Core Concept | Composable validators organized into input/output guards |

| Validator Library | Guardrails Hub with pre-built validators for common risk types |

| Risk Detection | Toxicity, PII, hallucination, profanity, bias, logical consistency |

| Structured Data | JSON schema validation, function calling, prompt optimization |

| Re-prompting | Automatic re-prompting when output validation fails |

| API Server | Standalone Flask-based REST API for language-agnostic deployment |

| Multi-Validator | Compose multiple validators into a single guard for comprehensive checks |

| Observability | Guardrails Pro dashboards for monitoring validation metrics |

| Language | Python (JavaScript support available) |

| License | Apache 2.0 (open-source core) |

| GitHub | 6.6k stars, 561 forks, 3,186 commits |

Validators and guards

The building blocks are validators and guards.

A validator is a single check — does this text contain PII? Is this response toxic? Does this JSON match the expected schema?

A guard combines multiple validators into a pipeline that runs on every LLM interaction.

Guards can run on inputs (before the LLM processes the prompt), on outputs (after the LLM generates a response), or both. When a validator flags a violation, the guard can block the response, return a default value, or trigger re-prompting to get a corrected output.

Guardrails Hub

The hub is where the ecosystem lives. Each validator addresses a specific risk category, and the collection covers the most common LLM failure modes: generating toxic content, leaking PII from training data, hallucinating facts, producing biased responses, or failing to match structural requirements.

Validators from the hub are installable via pip and work with the same guard composition pattern. Community-contributed validators extend coverage to domain-specific risks.

Structured data generation

A practical problem Guardrails AI solves: getting LLMs to produce valid structured data. Instead of writing brittle regex parsers or hoping the model follows instructions, the framework validates output against schemas and re-prompts automatically when the structure is wrong.

This cuts the “parsing tax” in LLM applications where developers spend too much time handling malformed model outputs.

How do I get started with Guardrails AI?

pip install guardrails-ai. The core library requires Python 3.9+. For JavaScript applications, a JS client is also available.When to use Guardrails AI

Guardrails AI fits teams that want modular, composable validation for LLM applications without being locked into a specific LLM provider or deployment model. The open-source core means you can self-host everything, inspect the code, and contribute validators back to the community.

The framework is particularly good for applications that need structured output validation. If your LLM needs to produce JSON, fill forms, or generate data matching specific schemas, the built-in re-prompting logic handles the reliability work that would otherwise require custom retry code.

For teams that want to start simple and scale, the path from a single validator to a multi-validator guard to Guardrails Pro managed service is incremental — you don’t need to rearchitect as requirements grow.

What are alternatives to Guardrails AI?

Guardrails AI’s strength is the composable open-source validator ecosystem. When that pattern is not the best fit, these are the closest alternatives:

- NeMo Guardrails — NVIDIA’s framework adds dialog-flow control via the Colang language, modeling multi-turn conversations rather than single-prompt validation. Better when conversation-level state and routing logic matter more than a wide validator catalog.

- LLM Guard — A lightweight self-hosted input/output scanner with a fixed scanner set. Pick LLM Guard when a smaller, dependency-light deployment matters more than the Hub’s pluggable model and you do not need re-prompting.

- OpenAI Guardrails — OpenAI’s built-in tripwire-pattern guardrails for the Assistants and Responses APIs. Use it when you are already locked to OpenAI infrastructure and want first-party agent guardrails rather than a portable layer.

- Lakera Guard — A commercial managed API for prompt-injection and PII protection now operated by Cisco (acquisition completed 2025). Better when you want a vendor-managed runtime endpoint instead of a self-hosted Python framework.

- Protecto — Privacy-focused PII detection and tokenization, deeper than Guardrails AI’s general PII validator. Pick Protecto when regulated-data masking is the dominant requirement.

- Arthur Shield — A hosted LLM firewall sibling that focuses on policy enforcement and runtime telemetry. Good fit when observability and incident workflow trump open-source self-hosting.

For a wider catalog, the AI security tools hub groups these by sub-category (validators, runtime guardrails, dialog control, managed APIs).