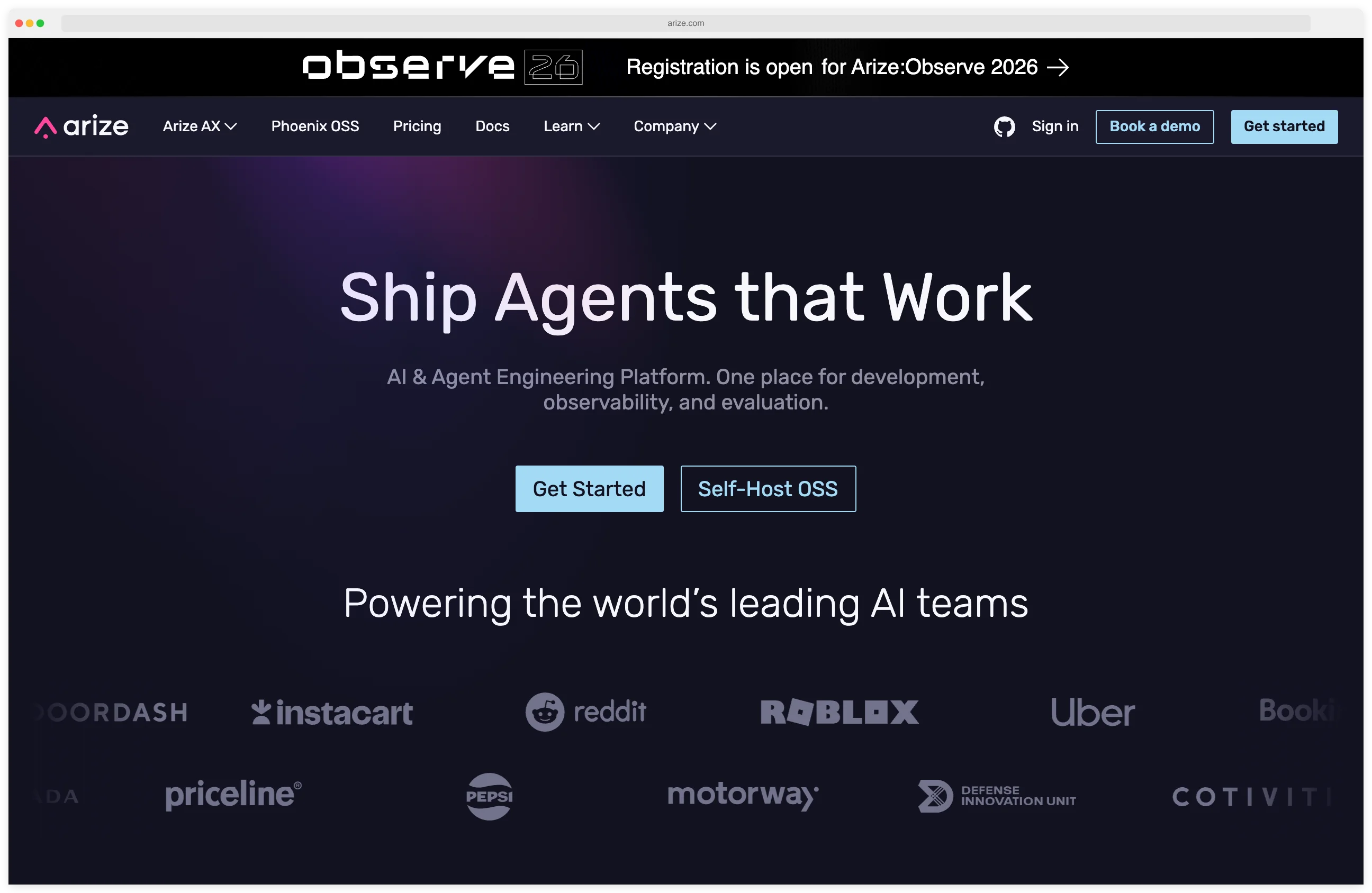

Arize AI is an AI observability and LLM evaluation platform built on OpenTelemetry standards, offering vendor-agnostic tracing from development through production. The company provides Phoenix (open-source, 9.1k+ GitHub stars) for LLM tracing and evaluation, and AX for enterprise-scale AI monitoring.

Arize processes 1 trillion spans and runs 50+ million evaluations monthly. It is listed in the AI security category.

The platform is used by organizations including DoorDash, Instacart, Reddit, Uber, Booking.com, Roblox, PagerDuty, Air Canada, Cohere, Conde Nast, Flipkart, TripAdvisor, Siemens, Microsoft, and Priceline to monitor and evaluate their AI applications.

Arize’s approach centers on open standards — OpenTelemetry for instrumentation, open-source evaluation models rather than proprietary black-box evaluators, and standard data formats that prevent vendor lock-in.

What is Arize AI?

Arize operates across two layers of the AI stack: development-time experimentation (Phoenix) and production-scale monitoring (AX). This split lets teams start with the free, open-source Phoenix for prototyping and evaluation, then move to AX when they need enterprise-grade scale and collaboration.

OpenTelemetry is central to Arize’s architecture. LLM application traces use the same standard as traditional application performance monitoring (APM), so AI observability plugs into existing DevOps infrastructure instead of requiring a separate monitoring stack.
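To make the span model concrete, the sketch below shows how a single agent request might decompose into nested spans that share one trace ID, which is the general shape OpenTelemetry traces take. The `Span` class, the `child` and `render` helpers, and the span names are hypothetical and simplified for illustration; they are not the OpenTelemetry SDK or any Arize API.

```python
import itertools
from dataclasses import dataclass, field
from typing import List, Optional

_ids = itertools.count(1)  # simple counter standing in for random span IDs


@dataclass
class Span:
    """Hypothetical, simplified span (not the OpenTelemetry SDK class)."""
    name: str
    trace_id: str
    parent_id: Optional[int] = None
    attributes: dict = field(default_factory=dict)
    span_id: int = field(default_factory=lambda: next(_ids))


def child(parent: Span, name: str, **attributes) -> Span:
    """Create a span in the parent's trace that points back to the parent."""
    return Span(name=name, trace_id=parent.trace_id,
                parent_id=parent.span_id, attributes=attributes)


def render(spans: List[Span], parent_id: Optional[int] = None,
           depth: int = 0) -> List[str]:
    """Return the span tree as indented lines, depth-first."""
    lines = []
    for span in spans:
        if span.parent_id == parent_id:
            lines.append("  " * depth + span.name)
            lines.extend(render(spans, span.span_id, depth + 1))
    return lines


# One agent request: a retrieval step, then an LLM call that triggers a tool.
root = Span(name="agent.run", trace_id="trace-1")
retrieve = child(root, "retriever.query", top_k=3)
llm = child(root, "llm.chat", model="example-model")
tool = child(llm, "tool.call", tool_name="search")

spans = [root, retrieve, llm, tool]
tree = render(spans)
print("\n".join(tree))
```

Because every span carries a trace ID and a parent reference, a backend that already understands this structure for web requests can display LLM and tool-call spans in the same way.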

What are Arize AI’s key features?

| Feature | Details |

|---|---|

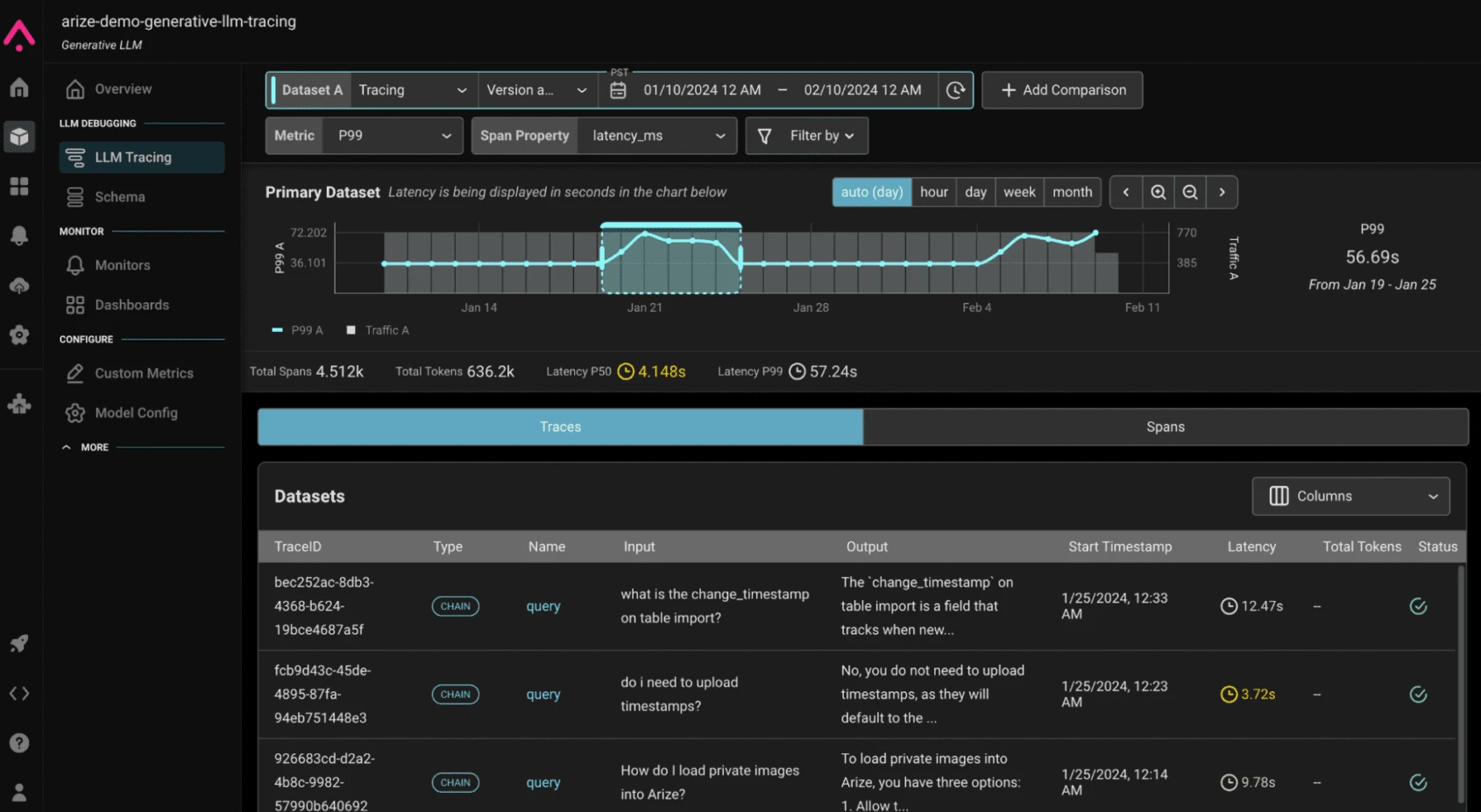

| LLM Tracing | Full-flow logging of agent interactions, tool calls, retrieval steps, and configurations |

| Evaluation | 50M+ evaluations monthly; open-source evaluation models, not proprietary black-box |

| Agent Monitoring | Track agent behavior, tool usage, decision chains, and performance in production |

| Experiment Tracking | Compare prompt variations, model changes, and parameter adjustments side-by-side |

| Prompt Management | Version control for prompts with systematic testing and rollback |

| Datasets | Versioned datasets for evaluation, experimentation, and fine-tuning |

| RAG Evaluation | Measure retrieval quality, relevance, and response grounding |

| Scale | 1 trillion spans processed, 5 million downloads monthly |

| Datastore | adb — purpose-built for generative AI with real-time ingestion and sub-second queries |

| Framework Support | OpenAI Agents SDK, Claude Agent SDK, LangGraph, Vercel AI SDK, CrewAI, LlamaIndex, DSPy, Haystack, Guardrails, Instructor, Pydantic AI, AutoGen AgentChat, Portkey, Google ADK, and 15+ more |

| License | Elastic License 2.0 (Phoenix); commercial (AX) |

Phoenix: Open-Source AI Observability

Phoenix is the open-source core of Arize’s platform. It has the same tracing, evaluation, and experimentation capabilities as the enterprise platform, with no feature gates or restrictions on the self-hosted version.

Key capabilities include:

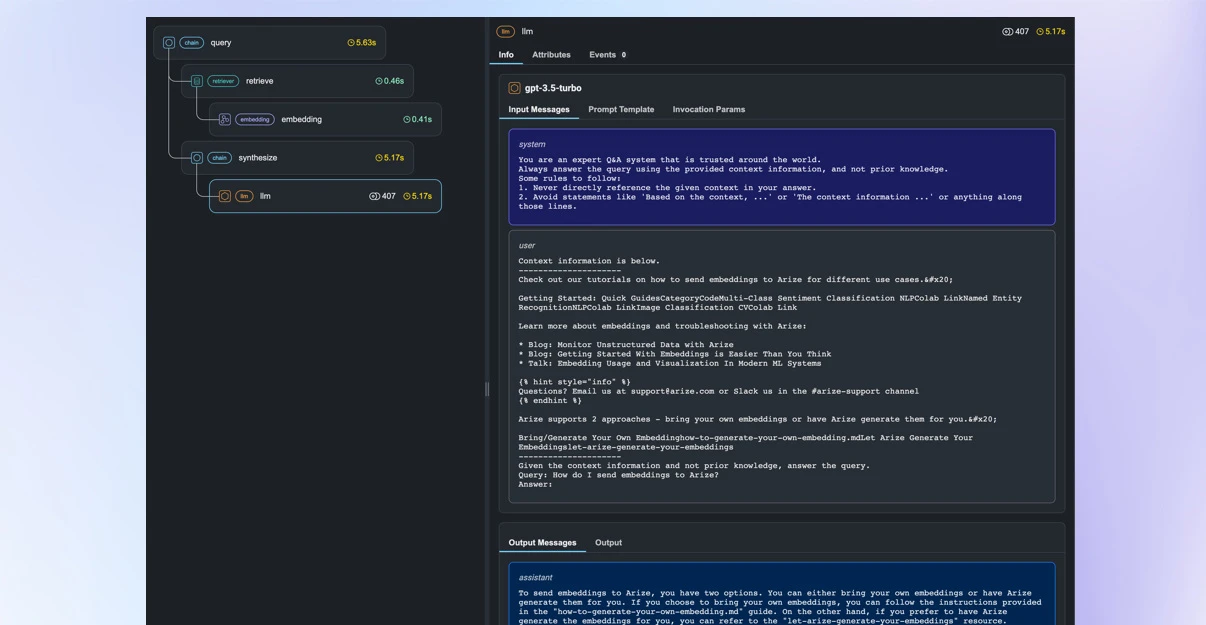

- LLM Tracing — Captures the full execution flow of LLM applications: each prompt, response, tool call, retrieval step, and agent decision. Traces follow the OpenTelemetry standard, making them compatible with existing observability infrastructure.

- Evaluation — Run evaluations using open-source models to measure response quality, relevance, hallucination rates, toxicity, and other metrics. Evaluations can run in batch (for dataset-level assessment) or continuously (for production monitoring).

- Experiment Tracking — Compare different prompt templates, model versions, temperature settings, and other parameters side-by-side to make data-driven decisions about which configuration to deploy.

- Prompt Management — Version control for prompts with the ability to test changes systematically before deployment.

- Dataset Management — Create and maintain versioned datasets for evaluation benchmarks, fine-tuning, and reproducible experiments.
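As an illustration of the batch, dataset-level evaluation mentioned above, the sketch below scores retrieval quality with two generic information-retrieval metrics, precision@k and mean reciprocal rank. This is a stand-in to show the idea, not Phoenix's evaluator API: the function names, the example document IDs, and the relevance labels are all made up for the example.

```python
from typing import List, Sequence, Set


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are labeled relevant."""
    top = list(retrieved)[:k]
    if not top:
        return 0.0
    return sum(1 for doc in top if doc in relevant) / len(top)


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc in enumerate(retrieved, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


# Hypothetical evaluation dataset: retrieved IDs per query plus relevance labels.
examples = [
    {"retrieved": ["d1", "d7", "d3"], "relevant": {"d1", "d3"}},
    {"retrieved": ["d9", "d2", "d4"], "relevant": {"d2"}},
]

p_at_3: List[float] = [
    precision_at_k(e["retrieved"], e["relevant"], k=3) for e in examples
]
mrr = sum(reciprocal_rank(e["retrieved"], e["relevant"]) for e in examples) / len(examples)

print("precision@3 per query:", p_at_3)
print("mean reciprocal rank:", mrr)
```

Running the same scoring over a versioned dataset before and after a prompt or retriever change is one simple way to compare configurations side by side.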

Phoenix runs on local machines, in Jupyter notebooks, as Docker containers, or in cloud environments. Installation is straightforward: pip install arize-phoenix or pull the Docker image from Docker Hub.
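Once Phoenix is installed and running locally, wiring an application to it might look like the sketch below. It assumes the `arize-phoenix` and `openinference-instrumentation-openai` packages are installed, that a Phoenix server is listening on port 6006, and that the collector accepts traces at the `/v1/traces` path; the function name `enable_tracing` and the project name are placeholders chosen for this example. Check the Phoenix documentation for the options that apply to your version.

```python
def enable_tracing(project_name: str = "my-llm-app",
                   endpoint: str = "http://localhost:6006/v1/traces"):
    """Route OpenAI client traces to a Phoenix server (illustrative sketch).

    Imports are deferred so this file loads even before the packages are
    installed; the defaults above are assumptions, not required values.
    """
    from phoenix.otel import register
    from openinference.instrumentation.openai import OpenAIInstrumentor

    # Create an OpenTelemetry tracer provider that exports to Phoenix.
    tracer_provider = register(project_name=project_name, endpoint=endpoint)

    # Patch the OpenAI client so each call is recorded as a span.
    OpenAIInstrumentor().instrument(tracer_provider=tracer_provider)
    return tracer_provider


# Usage, once the packages are installed and the server is running:
# enable_tracing()
```

Installing the instrumentation package is only the first step; the instrumentor has to be activated in the application process, as above, before calls show up as traces.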

Arize AX: Enterprise Platform

AX extends Phoenix’s capabilities to production scale with two editions:

- AX-Generative — For LLM and generative AI applications. Monitors production traffic, detects quality degradation, tracks agent behavior, and provides team collaboration features for debugging and investigation.

- AX-ML & CV — For traditional machine learning and computer vision workloads. Extends observability beyond LLMs to cover the full spectrum of AI models.

Both editions are built on adb, Arize’s purpose-built datastore optimized for generative AI workloads. It handles real-time ingestion of trace data and provides sub-second query performance for debugging and analysis at scale.

Alyx: AI Debugging Assistant

Alyx is Arize’s AI assistant for LLM application development. It helps debug traces, spot failure patterns, and integrate domain knowledge into the development workflow. Alyx works alongside Phoenix and AX to speed up investigation and root cause analysis.

Framework Integrations

Arize provides instrumentation for major AI frameworks and SDKs:

- Agent frameworks — OpenAI Agents SDK, Claude Agent SDK, LangGraph, CrewAI, AutoGen AgentChat, Pydantic AI, Google ADK

- LLM frameworks — LlamaIndex, DSPy, Vercel AI SDK, Haystack, Guardrails, Instructor, Portkey

- LLM providers — OpenAI, Anthropic, Google GenAI, AWS Bedrock, Mistral AI, Groq, OpenRouter, LiteLLM, VertexAI, and more

- Deployment — Kubernetes, Docker, Jupyter notebooks, local machines, cloud-native environments

The OpenInference project (also open-source from Arize) provides the OpenTelemetry instrumentation packages that connect these frameworks to Phoenix or AX.

How do I get started with Arize AI?

1. Install Phoenix: pip install arize-phoenix. Or pull the Docker image for containerized deployment. No license key or account required.

2. Add instrumentation: pip install openinference-instrumentation-openai (or the package for your framework). This captures traces automatically.

3. Start the server: python -m phoenix.server.main serve. The web UI opens at localhost:6006 with trace visualization, evaluation tools, and experiment tracking.

When to Use Arize AI

Arize AI is built for teams that need observability across the full AI application lifecycle — from prototyping and evaluation through production monitoring. The open-source Phoenix makes it accessible to individual developers and small teams, while AX scales to enterprise deployments.

It is particularly useful when you are building agent-based applications that need full-flow tracing of decision chains, running evaluations at scale to compare models and prompts, or working in environments where vendor lock-in is a concern and OpenTelemetry compatibility matters.

How Arize AI Compares

Arize AI occupies the observability and evaluation layer of the AI security landscape. Its closest alternatives differ in what they prioritize:

- Arthur AI — Multi-model monitoring with bias detection and explainability across LLMs and traditional ML. A fit when responsible-AI metrics (fairness, drift, explainability) carry as much weight as tracing.

- WhyLabs — Privacy-preserving statistical profiling for ML and LLM monitoring. WhyLabs was acquired by Apple in January 2025 and its commercial platform has been discontinued, but the open-source tools (whylogs, langkit) remain available. A fit when self-hosted statistical drift detection on data without raw-content logging is the constraint.

- Helicone — LLM logging and observability with a lightweight proxy-based capture model. A fit when the priority is fast time-to-value for OpenAI/Anthropic API logging without OpenTelemetry instrumentation.

- Langfuse — Open-source LLM engineering platform with tracing, prompt management, and evaluations. A fit when self-hosting and source-available licensing matter more than the OpenTelemetry-native tracing model Arize emphasizes.

For LLM security rather than observability (prompt injection detection, guardrails, and runtime protection), consider Lakera Guard, Prompt Security, LLM Guard, or NeMo Guardrails.

For pre-deployment vulnerability scanning, see Garak, Augustus, or Promptfoo.

For a broader overview of AI security tools, see the AI security tools category page.