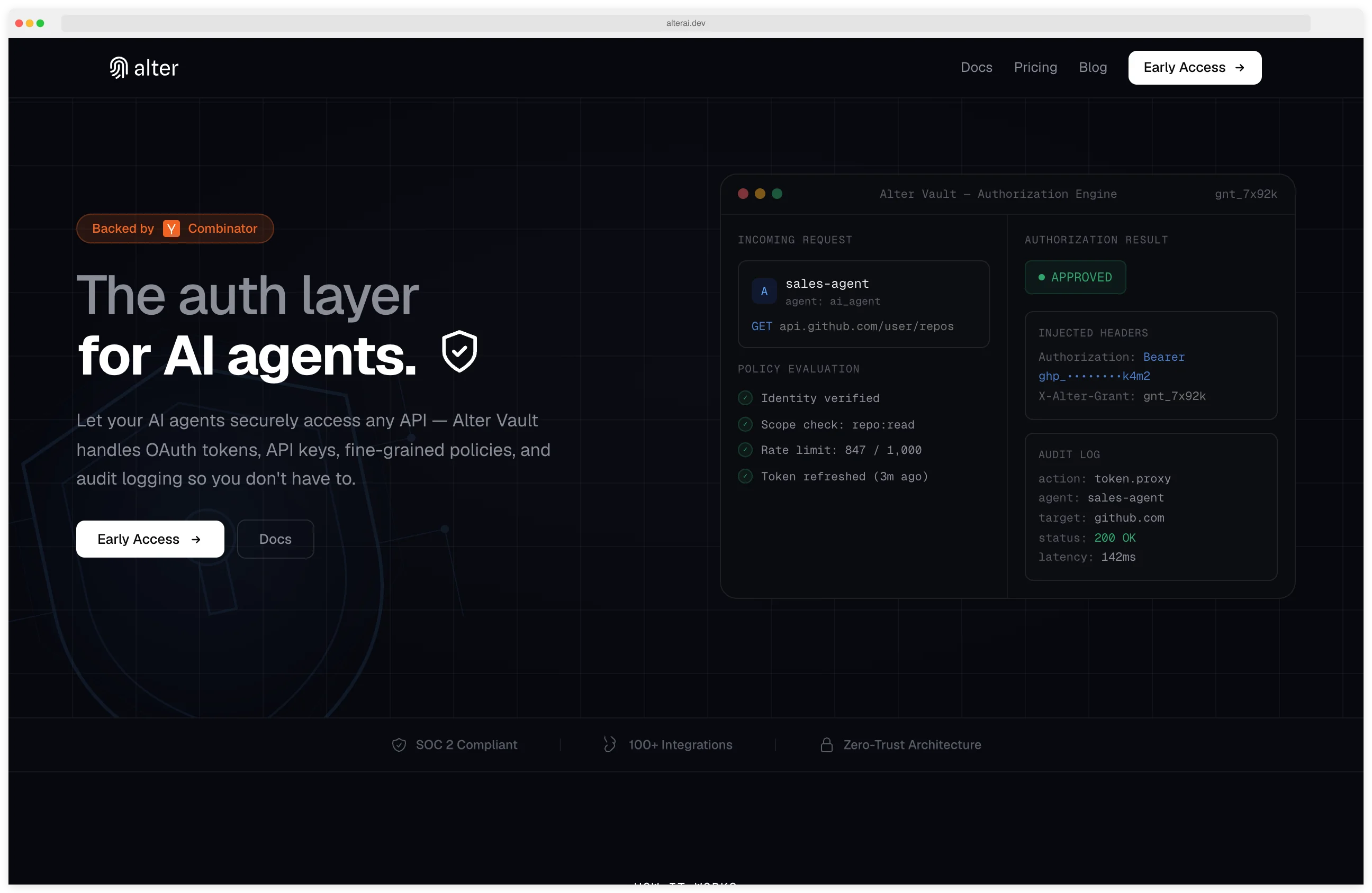

Alter is a zero-trust identity and access control platform purpose-built for AI agents, verifying every tool call with fine-grained RBAC/ABAC authorization and ephemeral credentials that expire in seconds.

While broader platforms like Onyx Security or Noma Security address full AI governance, Alter specializes in the identity and access control layer where unauthorized agent actions cause the most damage.

The company was founded by Srikar Dandamuraju (CEO) and Kevan Dodhia (CTO) and is backed by Y Combinator (S25 batch). Before Alter, Dandamuraju was a Platform Lead at Goldman Sachs, where he scaled post-trade infrastructure and helped launch the GM Card.

Dodhia was the technical co-founder of ComputeAI, where he built a compute engine 5x faster than EMR Spark and sold into regulated enterprises like the London Stock Exchange Group. ComputeAI was acquired by Terizza in 2025. Dodhia is a Carnegie Mellon graduate (2019).

Their shared experience building mission-critical infrastructure at Goldman Sachs and for the London Stock Exchange informs Alter’s approach: treat every AI agent interaction with the same rigor applied to financial transactions.

What is Alter?

Alter sits between AI agents and the tools they call, acting as an authentication and authorization layer. Every request is verified at the parameter level, authorized against granular policies, executed with least-privilege access, and fully audited in real time.

The platform eliminates long-lived API keys — a common vulnerability in agent workflows — by issuing ephemeral, scope-narrowed tokens that expire in seconds. Agents receive only the minimum access needed for a specific task, and credentials are rotated or revoked automatically after use.
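To make the idea of an ephemeral, scope-narrowed token concrete, here is a minimal sketch in Python. It is not Alter's token format or API: the names `issue_token` and `verify_token`, the HMAC-signed JSON payload, the `SECRET` key, and the 5-second default lifetime are all assumptions made for illustration.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-signing-key"  # hypothetical; a real deployment would use a managed key


def issue_token(agent_id, scope, ttl_seconds=5.0, now=None):
    """Return a signed token limited to one scope and a short lifetime."""
    now = time.time() if now is None else now
    payload = json.dumps(
        {"agent": agent_id, "scope": scope, "exp": now + ttl_seconds},
        sort_keys=True,
    ).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return base64.urlsafe_b64encode(payload).decode() + "." + signature


def verify_token(token, required_scope, now=None):
    """Accept the token only if signature, scope, and expiry all check out."""
    now = time.time() if now is None else now
    try:
        encoded, signature = token.rsplit(".", 1)
        payload = base64.urlsafe_b64decode(encoded.encode())
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False  # payload or signature was tampered with
    claims = json.loads(payload)
    return claims["scope"] == required_scope and now < claims["exp"]
```

A token issued for `crm:read` is rejected for `crm:write`, and rejected for everything once its lifetime has passed, so a leaked token is only useful for a few seconds and for a single kind of action.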

What are Alter’s key features?

| Feature | Details |
|---|---|
| Access Control | Fine-grained RBAC (Role-Based) and ABAC (Attribute-Based) policies |
| Verification | Parameter-level checks on every tool call |
| Credentials | Ephemeral, scope-narrowed tokens with seconds-lived expiration |
| Blocking | Pre-execution blocking of dangerous operations (DROP TABLE, excessive payments, etc.) |
| Audit | Complete request/response logging with CISO-ready dashboard |
| Compliance | SOC 2, HIPAA, GDPR audit readiness |
| Tool Support | MCP (Model Context Protocol) and native tool integrations |
| A2A | Agent-to-Agent connections coming soon |
| Red Teaming | Partnership with former OpenAI cybersecurity experts for ongoing vulnerability testing |
| Identity | Cryptographic identity verification for each agent interaction |
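As a rough illustration of the pre-execution blocking row, the sketch below rejects destructive SQL before it reaches a database. The `check_sql_call` function and the regex deny-list are hypothetical and deliberately naive (splitting on `;` ignores semicolons inside string literals). They do not describe how Alter actually detects dangerous operations.

```python
import re

# Hypothetical deny-list; a real policy engine would parse SQL rather than pattern-match.
BLOCKED_SQL = re.compile(r"^\s*(DROP|TRUNCATE)\s+TABLE\b", re.IGNORECASE)


def check_sql_call(statement):
    """Return (allowed, reason) before the statement is sent to the database."""
    for part in statement.split(";"):
        if BLOCKED_SQL.match(part):
            return False, "destructive statement blocked: " + part.strip()
    return True, "ok"
```

The point of the design is that the check runs before execution: a blocked call never receives a credential, so nothing has to be rolled back afterwards.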

How zero-trust works for agents

Traditional API security uses long-lived keys that grant broad access. In agentic AI workflows, this creates cascading risk: a compromised agent with a persistent API key can access everything that key permits, indefinitely.

Alter replaces this model with zero-trust principles adapted for agent workflows:

1. Identity verification: Each agent request starts with cryptographic identity verification. The platform confirms the agent’s identity before processing any action.

2. Policy evaluation: The request is evaluated against RBAC and ABAC policies at the parameter level. A policy might allow an agent to read customer records but block writes, or permit payments up to a threshold.

3. Credential issuance: If authorized, Alter issues an ephemeral token scoped to exactly the permissions needed for this specific action. The token expires in seconds.

4. Execution and audit: The action executes with least-privilege access. The full request and response are logged for compliance and forensic analysis.

5. Credential revocation: After execution, the credential is automatically revoked. There are no persistent tokens to leak or misuse.
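The five steps above can be sketched as a single request handler. Everything here is an assumption for illustration, not Alter's implementation: the `handle_tool_call` function, the in-memory `ROLE_ACTIONS` and `REGISTERED_AGENTS` tables, and the registry lookup that stands in for real cryptographic identity verification.

```python
import secrets
import time

# Hypothetical policy data: roles grant actions (RBAC), attributes narrow them (ABAC).
ROLE_ACTIONS = {"support-agent": {"customers:read"}}
REGISTERED_AGENTS = {"agent-7": {"role": "support-agent", "environment": "prod"}}

active_tokens = {}  # token -> (action, expiry timestamp)
audit_log = []      # one entry per request, allowed or denied


def is_authorized(agent, action, attributes):
    """RBAC: the role must grant the action. ABAC: request attributes must match."""
    role_ok = action in ROLE_ACTIONS.get(agent["role"], set())
    attr_ok = attributes.get("environment") == agent["environment"]
    return role_ok and attr_ok


def handle_tool_call(agent_id, action, attributes, tool, ttl_seconds=5.0):
    # Step 1: identity verification (a registry lookup stands in for a cryptographic check).
    agent = REGISTERED_AGENTS.get(agent_id)
    # Step 2: policy evaluation.
    allowed = agent is not None and is_authorized(agent, action, attributes)
    # Step 4 (audit half): record every decision, including denials.
    entry = {"agent": agent_id, "action": action, "allowed": allowed, "result": None}
    audit_log.append(entry)
    if not allowed:
        return False, None
    # Step 3: credential issuance. The expiry is stored but not enforced in this
    # sketch, because the token is revoked as soon as the call returns.
    token = secrets.token_urlsafe(16)
    active_tokens[token] = (action, time.time() + ttl_seconds)
    try:
        # Step 4 (execution half): the tool runs with only this credential.
        entry["result"] = tool(token)
    finally:
        # Step 5: revocation, even if the tool raised an exception.
        active_tokens.pop(token, None)
    return True, entry["result"]
```

With this data, `agent-7` can perform `customers:read` in `prod`, while a `customers:write` request or a request from an unregistered agent is denied and still appears in `audit_log`.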

Red teaming partnership

Alter partners with former OpenAI cybersecurity experts who provide ongoing red teaming of agent workflows. This testing covers prompt injection attacks that attempt to escalate agent privileges, data exfiltration through tool calls, and other exploits specific to agentic AI systems.

The red teaming results feed back into Alter’s policy engine, helping identify new attack patterns and strengthen default protections.

Alter was accepted into Y Combinator’s Summer 2025 batch. The founders bring experience from Goldman Sachs and ComputeAI, building mission-critical infrastructure for financial institutions.

Note: Alter’s product now lives at alterauth.com — the original alter.ai domain is no longer used by the company.

How do I get started with Alter?

When to use Alter

Ideal for teams deploying AI agents that interact with sensitive systems — databases, payment processors, internal APIs — where unauthorized actions could cause real damage. The parameter-level policy enforcement matters most in regulated industries where compliance requires demonstrating least-privilege access and complete audit trails.

The platform complements broader AI security tools rather than replacing them. It handles the identity and access control layer while other tools cover vulnerability scanning, prompt filtering, or agent governance.

What are alternatives to Alter?

Alter is narrowly focused on identity and access control for agents. The closest alternatives split by overlap layer:

- Cerbos — Open-source authorization engine that handles policy-as-code for agent and human actors alike. A fit when you want the authorization layer self-hosted and source-available, complementing rather than replacing Alter at the policy-enforcement layer.

- Onyx Security — Broader AI governance covering discovery, posture, and policy. A fit when the requirement is full-stack AI governance rather than the access-control sliver Alter specializes in.

- Lasso Security — Commercial counterpart on the runtime LLM security side, with overlap on agent-tool call inspection. A fit when prompt-traffic inspection is part of the requirement, not just authorization.

- Noma Security — Broader AI security platform covering discovery, runtime protection, and governance. A fit when you need a single vendor across the AI lifecycle rather than a focused identity layer.

How Alter compares to API gateways

Traditional API gateways — Kong, Apigee, AWS API Gateway — were designed for human-issued API keys and OAuth flows between services. They authenticate, throttle, and route traffic, but their identity model assumes long-lived credentials owned by humans or stable services.

Agent traffic breaks that model in two ways. First, agent identities shift faster than human identities: a single workflow may spin up dozens of short-lived agents, each needing scoped permissions for a specific tool call. Second, the relevant authorization decision is at the parameter level — transfer($amount, $account) is allowed at $100 but not $100,000 — which API gateways cannot evaluate without per-route logic.

Alter operates one layer up: it inspects the tool call payload, evaluates RBAC and ABAC policies against the actual parameters, and only then issues a scope-narrowed token that the API gateway sees as a normal credential. The two layers compose rather than replace each other.
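The difference between the two layers can be shown with a small sketch. The `gateway_allows` and `policy_allows` functions and the `TRANSFER_LIMIT` value are hypothetical. The text above only says that a $100 transfer passes and a $100,000 transfer does not, so the exact threshold is an assumption.

```python
from decimal import Decimal

# Hypothetical limit chosen for illustration; the real threshold is policy-defined.
TRANSFER_LIMIT = Decimal("1000")


def gateway_allows(method, path):
    """Route-level check: sees the endpoint, not the arguments of the call."""
    return (method, path) == ("POST", "/transfer")


def policy_allows(tool_name, params):
    """Parameter-level check: inspects the payload of the tool call itself."""
    if tool_name != "transfer":
        return False
    return Decimal(str(params["amount"])) <= TRANSFER_LIMIT
```

Both a $100 and a $100,000 transfer look identical to `gateway_allows`, since they hit the same route. Only `policy_allows` can tell them apart, which is why the parameter check has to happen before a credential is issued.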

For more AI security tools and guidance, see the AI security tools category page. For enterprise AI governance platforms, see Onyx Security or Noma Security.

For runtime prompt protection, consider Lakera Guard or LLM Guard. For LLM vulnerability scanning, look at Garak or Promptfoo. For protocol-layer zero trust, check Xage Security.