Vibe coding is building software by describing what you want to an AI assistant and shipping whatever comes out, as long as it runs. The term was coined by Andrej Karpathy in February 2025, and it has grown from a meme into a genuine development philosophy embraced by millions of builders, many of whom have no programming background.

The security problem is not the quality of AI-generated code. It is that vibe coding has no human review step.

Traditional security tools catch vulnerabilities in code that developers can read and understand.

Vibe coding creates a class of builders who cannot read the code at all, so those tools are never applied.

This page covers the unique security risks of vibe coding as a cultural movement. For a comprehensive technical guide on testing and securing AI-generated code with SAST tools, CI/CD policies, and vulnerability data, see AI-Generated Code Security.

What is vibe coding?

Vibe coding is building software through conversation with an AI coding assistant. You describe what you want in plain English.

The AI writes the code. You run it.

If it works, you keep going. If it breaks, you describe the problem and the AI tries again.

You do not read the code. You do not need to understand it.

Andrej Karpathy defined it in a February 2025 post on X: “I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works.” The same post contains the phrase that distinguishes vibe coding from traditional AI-assisted development: “forget that the code even exists.”

That last part is what makes vibe coding different from using GitHub Copilot or asking ChatGPT for a code snippet. Those are tools that help developers write code.

Vibe coding is a philosophy that says you do not need to write or even read code at all.

The tools driving the movement

Vibe coding took off because the tooling matured fast. Cursor, Windsurf, Replit Agent, Bolt.new, and Lovable let users go from a text description to a running application in minutes.

Claude Code, GitHub Copilot, and Amazon Q Developer integrate directly into editors and terminals.

As of early 2026, roughly 85% of professional developers use AI coding tools in some capacity, and a growing number of non-developers use them to build entire products.

These tools are not just autocomplete anymore. They scaffold full projects, configure databases, set up deployment pipelines, and generate entire backends from a single prompt.

The gap between “I have an idea” and “I have a running app” has collapsed.

Vibe coding versus AI-assisted development

The distinction matters for security. AI-assisted development means a developer uses AI to write code faster, but still reads, understands, and reviews everything before committing. The developer remains the quality gate.

Vibe coding removes that gate. The builder evaluates output by running it, not by reading it.

If the app loads, the login works, and the dashboard renders, it ships.

Nobody checks whether the database queries are parameterized, whether the API endpoints validate input, or whether the session tokens are cryptographically random.
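To make the last of those checks concrete, here is a minimal Python sketch contrasting a predictable session token with a cryptographically random one. The function names are hypothetical and exist only for illustration; the seeded generator stands in for any token scheme derived from guessable values such as a user ID or a timestamp.

```python
import random
import secrets


def predictable_token(user_id: int) -> str:
    # Anti-pattern: a seeded PRNG is fully reproducible by anyone
    # who can guess the seed (here, the user ID).
    rng = random.Random(user_id)
    return f"{rng.getrandbits(64):016x}"


def secure_token() -> str:
    # secrets uses the operating system's CSPRNG; 32 bytes = 256 bits.
    return secrets.token_urlsafe(32)


if __name__ == "__main__":
    # Same input, same token: an attacker can compute it offline.
    print(predictable_token(42) == predictable_token(42))  # True
    print(secure_token())
```

Both functions return a string that "works" as a session token when you click through the app, which is why running the app cannot tell them apart.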

Who is vibe coding?

Vibe coding is no longer confined to professional developers. The term now covers anyone who uses AI coding tools to build and ship software without reviewing the generated output, including non-technical founders, designers, product managers, and students who have never written code before.

This expansion in who builds software is what most changes the security threat model.

Non-technical builders

People who never learned to code are now building and deploying production applications. Startup founders prototype their MVPs in a weekend with Cursor.

Designers build interactive portfolios with Bolt.new. Product managers spin up internal tools with Replit Agent. Marketing teams generate landing pages with dynamic backends.

These builders have no security training. They do not know what SQL injection is.

They have never heard of OWASP. They cannot distinguish a parameterized query from string concatenation.

And the tools they are using do not teach them, because the tools are optimized for “it works,” not “it is secure.”
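For readers who have not seen the difference between string concatenation and a parameterized query, the sketch below shows both against an in-memory SQLite database. The table, the helper names, and the payload are assumptions made up for this example; the point is only that both versions behave identically on normal input.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    return conn


def find_user_concat(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input becomes part of the SQL text itself.
    query = "SELECT name, email FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_param(conn: sqlite3.Connection, name: str) -> list:
    # Safe: the ? placeholder passes the input as data, never as SQL.
    return conn.execute(
        "SELECT name, email FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "nobody' OR '1'='1"
    print(len(find_user_concat(conn, "alice")))  # 1
    print(len(find_user_param(conn, "alice")))   # 1
    print(len(find_user_concat(conn, payload)))  # 2 (every row leaks)
    print(len(find_user_param(conn, payload)))   # 0
```

A builder who only ever searches for "alice" sees the same result from both functions, so nothing in a working demo reveals which one the AI generated.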

Solo developers shipping fast

Professional developers vibe code too, often on side projects, hackathons, and MVPs where speed matters more than polish.

The reasoning is understandable: “I will clean it up later.” But “later” rarely arrives, and the MVP that was supposed to be temporary becomes the production codebase. Side projects grow users.

Hackathon demos become startups. The insecure code that “worked” in demo mode is now handling real user data.

Students and learners

Computer science students and coding bootcamp graduates are increasingly learning to code with AI from day one. Some never develop the instinct to question generated code.

When the AI writes a login function, they do not think to ask whether it rate-limits failed attempts or hashes passwords correctly.

They have not yet learned what “correctly” means in a security context.
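As a rough illustration of what "correctly" can mean for password storage, the following sketch uses only the Python standard library: a random per-user salt, a deliberately slow key-derivation function, and a constant-time comparison. The iteration count is an illustrative assumption, not a recommendation from this article; check current guidance before relying on it, and prefer a maintained authentication library or service in real projects.

```python
import hashlib
import hmac
import os

# Illustrative work factor (an assumption); tune against current guidance.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    # A fresh random salt per password defeats precomputed tables.
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), salt, ITERATIONS
    )
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, expected)
```

Storing the plain password, or a single fast hash such as unsalted SHA-256, would pass the same login test, which is exactly why a learner who only checks that login works cannot tell the difference.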

Why vibe coding is a different security problem

Vibe coding is a different security problem because it is a cultural problem, not a technical one. AI-generated code security has technical solutions — SAST scanners, SCA tools, and CI/CD pipelines handle it well.

But those tools only work if someone applies them.

Vibe coding creates a population of builders who often lack the knowledge to apply security tooling and who work outside the organizational structures that would otherwise enforce it.

The code review step disappears

In traditional development, code review is a natural checkpoint. Even sloppy teams look at diffs before merging.

Vibe coding eliminates this step. Karpathy’s definition literally says to forget the code exists.

If the builder does not read the code, no one reads the code.

The builder cannot evaluate security

A developer who writes a vulnerable SQL query at least has the knowledge to recognize the vulnerability when it is pointed out. A non-technical vibe coder does not.

If a SAST tool reports “CWE-89: SQL Injection in line 47,” the vibe coder does not know what that means, does not know how to fix it, and may not even know how to configure the scanner in the first place.

Speed overrides caution

Vibe coding culture celebrates speed. “I built this in 2 hours” is a badge of honor on X and YouTube.

“I spent 3 days on security review” is not. The incentives favor shipping fast and dealing with consequences later.

For a solo builder with no users, that is a personal risk. For a startup handling customer data, it is a liability.

No organizational guardrails

Enterprise developers write AI-generated code inside environments that have security policies, required CI checks, code review mandates, and security teams. Vibe coders — especially non-technical ones — build outside those guardrails.

They deploy from personal laptops to Vercel, Netlify, or Railway with zero security infrastructure between their prompt and production.

What security risks does vibe coding create?

The vulnerability patterns in AI-generated code are well-documented — hardcoded secrets, SQL injection, missing input validation, broken authentication. See AI-Generated Code Security for the full breakdown with research data.

The risks below are specific to the vibe coding approach: not just that vulnerabilities exist, but that no one with the ability to find or fix them is in the loop.

Building what you cannot audit

When you vibe code a full-stack application, the AI generates thousands of lines across frontend, backend, database, and configuration. Even if you wanted to review it, the volume makes meaningful review impractical.

You end up with a working application that you treat as a black box — the same way you treat a SaaS product.

The difference is that SaaS products have security teams, and your vibe-coded app does not.

Authentication as an afterthought

Vibe coders build features first and think about security later, if at all. Authentication is the clearest example.

The typical vibe coding session goes: “Build me a task manager app.” Then: “Add user accounts.”

The AI generates a login form and basic session handling. The builder tests it — login works, sessions persist, great.

Nobody checks whether the session tokens are predictable, the password reset flow is safe, or the API endpoints validate authorization.

Authentication code is some of the highest-risk code in any application, and it is the code most likely to be vibe-coded without review.
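The missing authorization check is easy to overlook because the endpoint behaves correctly for the logged-in user's own data. Below is a minimal sketch of the pattern, using a hypothetical in-memory task store rather than any particular web framework; `Task`, `TASKS`, and `Forbidden` are names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Task:
    id: int
    owner_id: int
    title: str


# Hypothetical data store standing in for a database table.
TASKS = {
    1: Task(1, owner_id=10, title="Alice's task"),
    2: Task(2, owner_id=20, title="Bob's task"),
}


class Forbidden(Exception):
    pass


def get_task_unchecked(current_user_id: int, task_id: int) -> Task:
    # Passes every happy-path test, but any logged-in user can read
    # any task simply by changing the ID in the request.
    return TASKS[task_id]


def get_task(current_user_id: int, task_id: int) -> Task:
    task = TASKS.get(task_id)
    # Same error for "missing" and "not yours" avoids revealing which IDs exist.
    if task is None or task.owner_id != current_user_id:
        raise Forbidden("task not found or not accessible")
    return task
```

With user 10 logged in, both functions return task 1. Only a request for task 2 shows the difference, and that is a request the builder never thinks to make.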

The dependency blind spot

When you vibe code, the AI chooses your dependencies. It picks npm packages, Python libraries, and Go modules based on patterns in training data.

The builder has no opinion on which packages are used because they often do not know what a package manager is.

If the AI installs a package with known CVEs, or pulls in a dependency that was last updated in 2022, no one notices.

In a professional development workflow, SCA tools catch this. In a vibe coding workflow deployed from a personal laptop, there is no SCA step.

Deployment without infrastructure

Tools like Vercel, Netlify, Railway, and Render make deployment trivially easy. A vibe coder can go from prompt to production URL in under an hour.

But none of these platforms run security scans by default. The deployment pipeline is: code exists locally, push to GitHub, auto-deploy to production.

No SAST, no SCA, no DAST, no review. The fastest path from idea to production is also the path with zero security checkpoints.

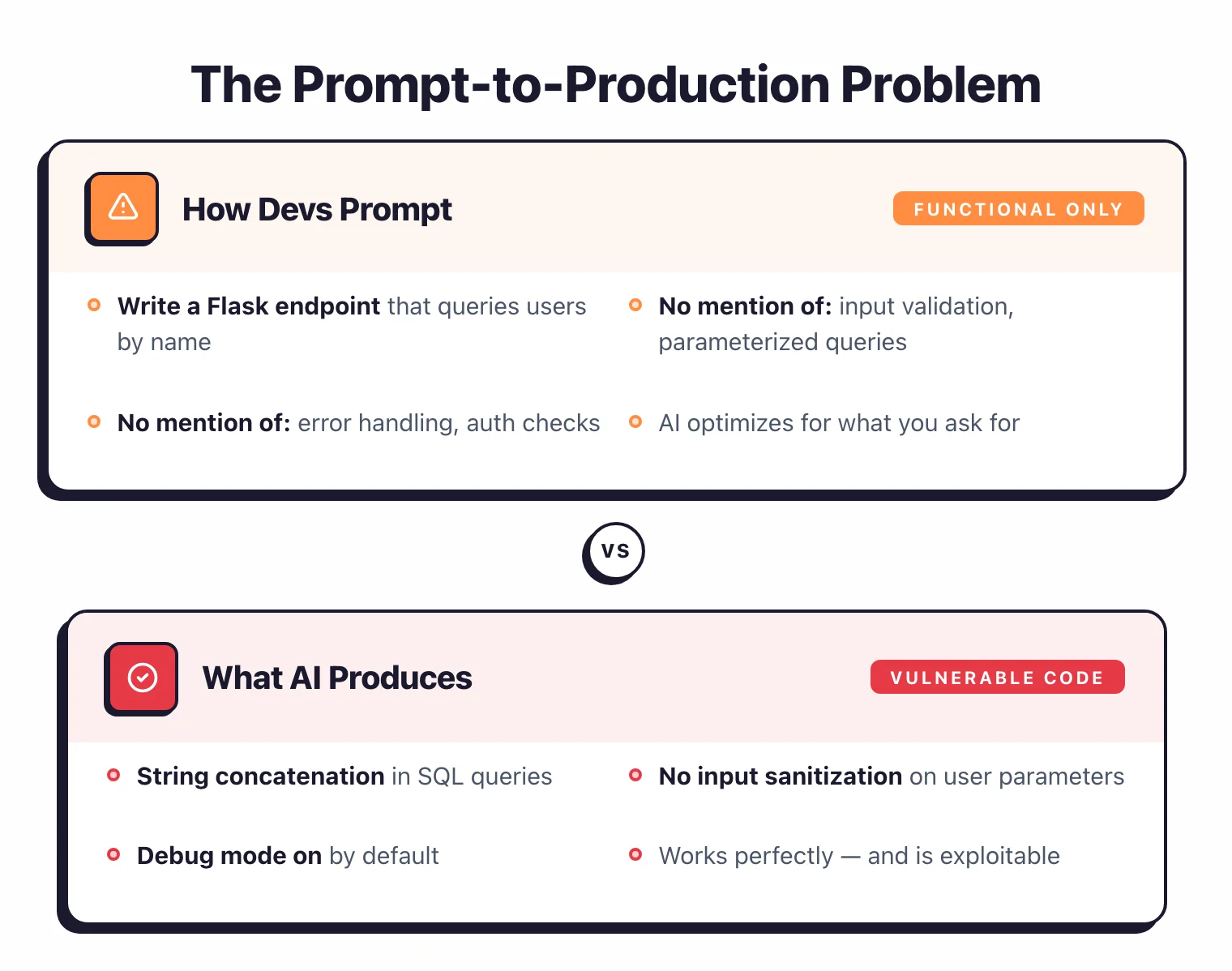

The prompt-to-production problem

The prompt-to-production problem refers to the compression of the entire software development lifecycle into a single conversation with an AI — where the traditional checkpoints that surface security issues (code review, automated testing, staging environments) never exist.

The problem with vibe coding is not AI code quality.

AI-generated code has known vulnerability patterns, and existing security tools handle them effectively. The problem is that security review is not part of the conversation.

Traditional timeline versus vibe coding timeline

In a traditional team, a feature goes through: requirements, design, implementation, code review, automated testing, staging, production. Each step is a potential checkpoint where security issues surface.

The timeline is days or weeks.

In vibe coding, the timeline is: prompt, run, deploy. Minutes or hours.

The checkpoints that exist in traditional development are not removed deliberately — they simply never existed in the first place. The vibe coder is not skipping code review.

They are building in a workflow where code review was never part of the picture.

The “it works” standard

Vibe coding uses a single quality metric: does it work? If the app loads, the buttons click, and the data saves, the code is done.

This is a functional test, not a security test. An application can be fully functional and fully vulnerable at the same time.

The problem is that security failures are invisible during normal use. SQL injection does not manifest until someone submits malicious input.

Broken authentication does not manifest until someone guesses a session token.

Missing rate limiting does not manifest until someone brute-forces the login endpoint.

The vibe coder never encounters these failures because they only test the happy path.
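Rate limiting is a good example of a control that no happy-path test will ever ask for. The sketch below shows one simple approach, a sliding window of recent failures per account, under the assumption of a single process with in-memory state. The class name and thresholds are hypothetical; a production system would more likely use a shared store or the limiter built into its framework or gateway.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class LoginRateLimiter:
    """Blocks a key after too many failed logins inside a time window."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 300.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)

    def _recent(self, key: str, now: float) -> deque:
        # Drop failures that have aged out of the window.
        failures = self._failures[key]
        while failures and now - failures[0] > self.window_seconds:
            failures.popleft()
        return failures

    def is_blocked(self, key: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        return len(self._recent(key, now)) >= self.max_failures

    def record_failure(self, key: str, now: Optional[float] = None) -> None:
        now = time.monotonic() if now is None else now
        self._recent(key, now).append(now)
```

A login handler would call `is_blocked` before checking the password and `record_failure` after a wrong one. The optional `now` argument exists only so the behavior can be tested without waiting for real time to pass.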

What happens when vibe-coded apps reach production?

When vibe-coded applications reach production without security review, they tend to fail in predictable patterns. The common thread is the same in every case: the builder never read the code, so vulnerabilities that would be obvious on review go undetected until real users are affected.

The MVP that got users

A non-technical founder vibe codes an MVP in a weekend. It works.

They post it on Product Hunt. It gets 500 signups.

Now the app handles real user emails, passwords, and potentially payment data. The codebase was never reviewed.

There is no security scanning. There are no backups.

The founder does not know how to add any of these things because they did not write the code and cannot read it.

The internal tool

A product manager builds an internal tool with Cursor to track customer feedback. It connects to the company database.

The tool works great. It also has a SQL injection vulnerability that gives anyone with the URL access to the entire customer database.

Internal tools built outside the engineering team’s security controls are a growing attack surface.

The side project that scaled

A developer vibe codes a browser extension over a weekend. It gets popular.

Suddenly it has 10,000 users and handles their browsing data. The extension code was generated by AI, accepted without review, and published to the Chrome Web Store.

The developer did not think about data handling because it was “just a side project.”

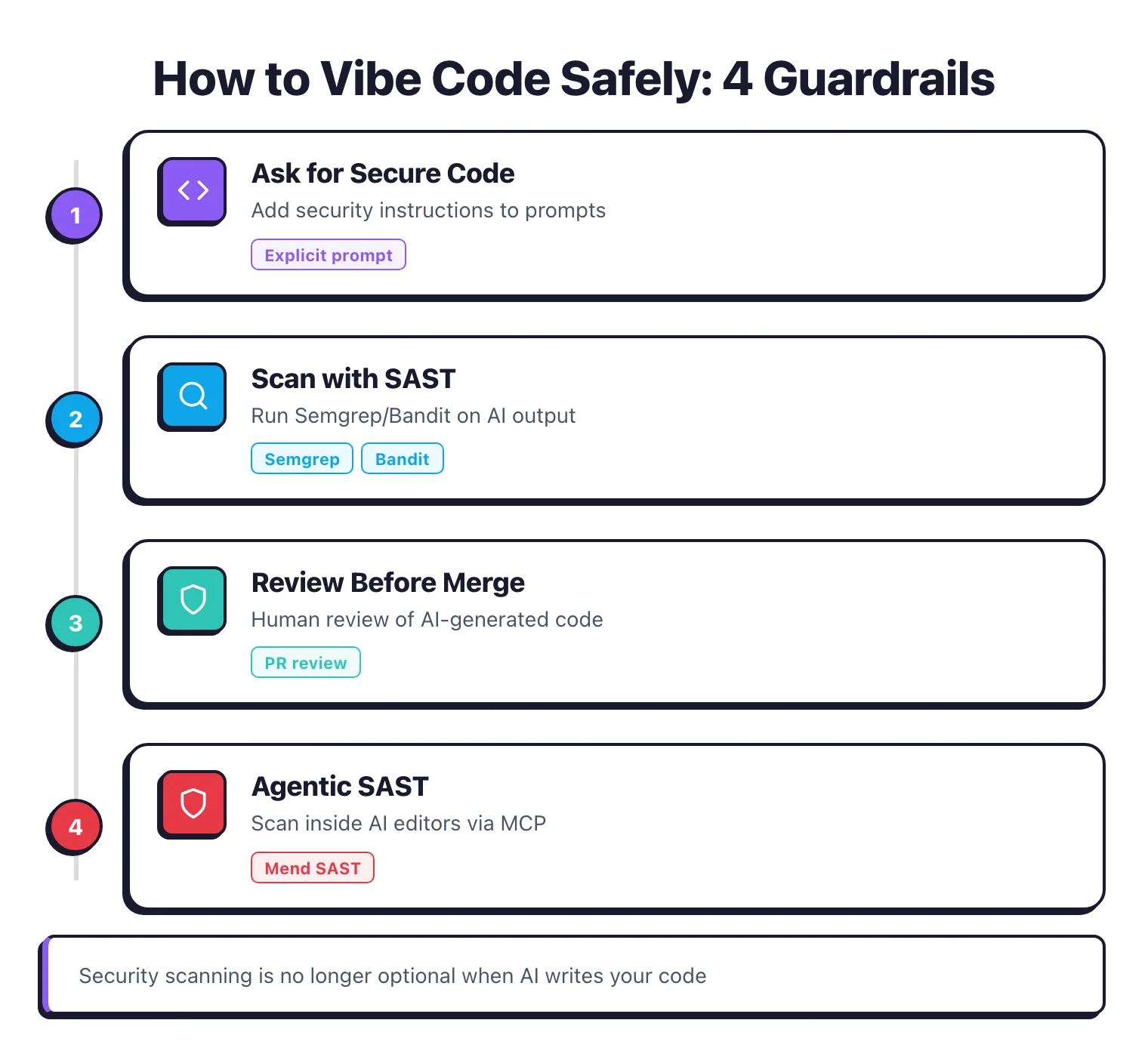

How to vibe code safely

Vibe coding does not have to be insecure, but safety does not happen by default. You have to add it deliberately.

If you are a non-technical builder

You cannot secure what you cannot read. If your application handles user data, payments, or sensitive information, you need someone who can read the code to review it before it goes to production.

Hire a freelance developer for a security-focused code review. For a small application, this typically costs a few hundred dollars and takes about a day.

For security-critical functions, use managed services rather than letting the AI build them from scratch. Supabase supports row-level security, although you still have to make sure it is enabled and define policies for each table.

Auth0 and Clerk handle authentication. Stripe handles payments.

These services exist precisely so that generated code does not have to get authentication right.

If you add one security measure, make it a secrets scanner. Install Gitleaks as a pre-commit hook.

It blocks hardcoded API keys and passwords from reaching your repository.
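To show the idea behind a secrets scanner, here is a toy Python sketch that flags lines matching two simple patterns. It is not how Gitleaks is implemented and its rule set is a small, hypothetical one invented for this example; real scanners ship far larger rule sets plus entropy checks and allowlists.

```python
import re

# Hypothetical, deliberately small rule set for illustration only.
RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded-credential": re.compile(
        r"(?i)\b(?:api_key|secret|password|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, rule_name))
    return findings


if __name__ == "__main__":
    sample = (
        'API_KEY = "sk_live_0123456789abcdef"\n'
        'name = "demo"\n'
        'aws = "AKIAIOSFODNN7EXAMPLE"\n'
    )
    for lineno, rule in scan_text(sample):
        print(f"line {lineno}: {rule}")
```

A pre-commit hook runs this kind of check on the staged changes and refuses the commit when anything matches, so the secret never reaches the repository history.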

If you are a developer vibe coding on the side

You already know about SAST and SCA scanning. Add Semgrep CE and Trivy to your side project’s CI pipeline.

Setup takes about 15 minutes, and the scans catch many of the common vulnerability patterns in AI-generated code. For detailed setup guidance and tool comparisons, see AI-Generated Code Security.

You do not have to read every line the AI generates. Focus on authentication, authorization, database queries, file handling, and API endpoints. That is where AI-generated vulnerabilities concentrate.

Do not deploy directly from your laptop. Set up a minimal CI/CD pipeline with automated security scanning.

GitHub Actions with Semgrep takes 10 minutes to configure and creates a checkpoint between your code and production.

If you manage a team where people vibe code

Developers on your team are vibe coding whether or not it is in the official workflow. Product managers are building internal tools with Cursor.

Designers are prototyping with Bolt.new. Pretending otherwise does not make it secure.

Bans rarely work. Instead, offer a pre-configured project template with CI/CD security scanning already set up.

Include Gitleaks pre-commit hooks, Semgrep in CI, and Trivy for dependency scanning.

Make it easier to build securely than insecurely.

The one hard line: anything that handles user data, authentication, or payments must pass a security review before production.

Side projects, prototypes, and internal dashboards displaying non-sensitive data can be vibe coded freely.

Everything else needs a human who can read the code.

For enterprise-level policies on AI coding tools, SAST integration, and organizational controls, see AI-Generated Code Security.